The GEO Attribution Crisis: How Flawed AI Tracking Is Breaking SEO Conversion Models in 2026

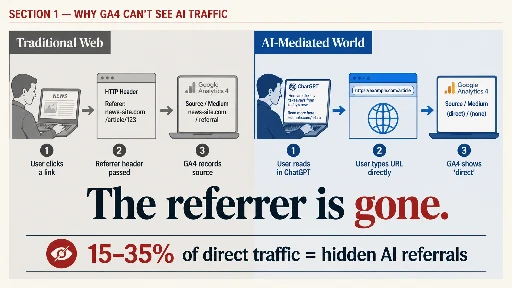

GA4 is misclassifying 15–35% of AI-driven traffic as direct. Last-touch attribution under-credits content. Here's the full breakdown of the 2026 GEO attribution crisis and the 5-layer fix practitioners need now.

TL;DR: GA4 is misclassifying between 15% and 35% of AI-driven referral traffic as "direct," while last-touch attribution systematically under-credits the content that earns AI citations. Marketing teams are cutting content budgets based on broken data, and most haven't noticed yet.

What you'll learn:

- Why GA4's attribution model was never built for AI-intermediated traffic and how to fix it with a custom regex channel group

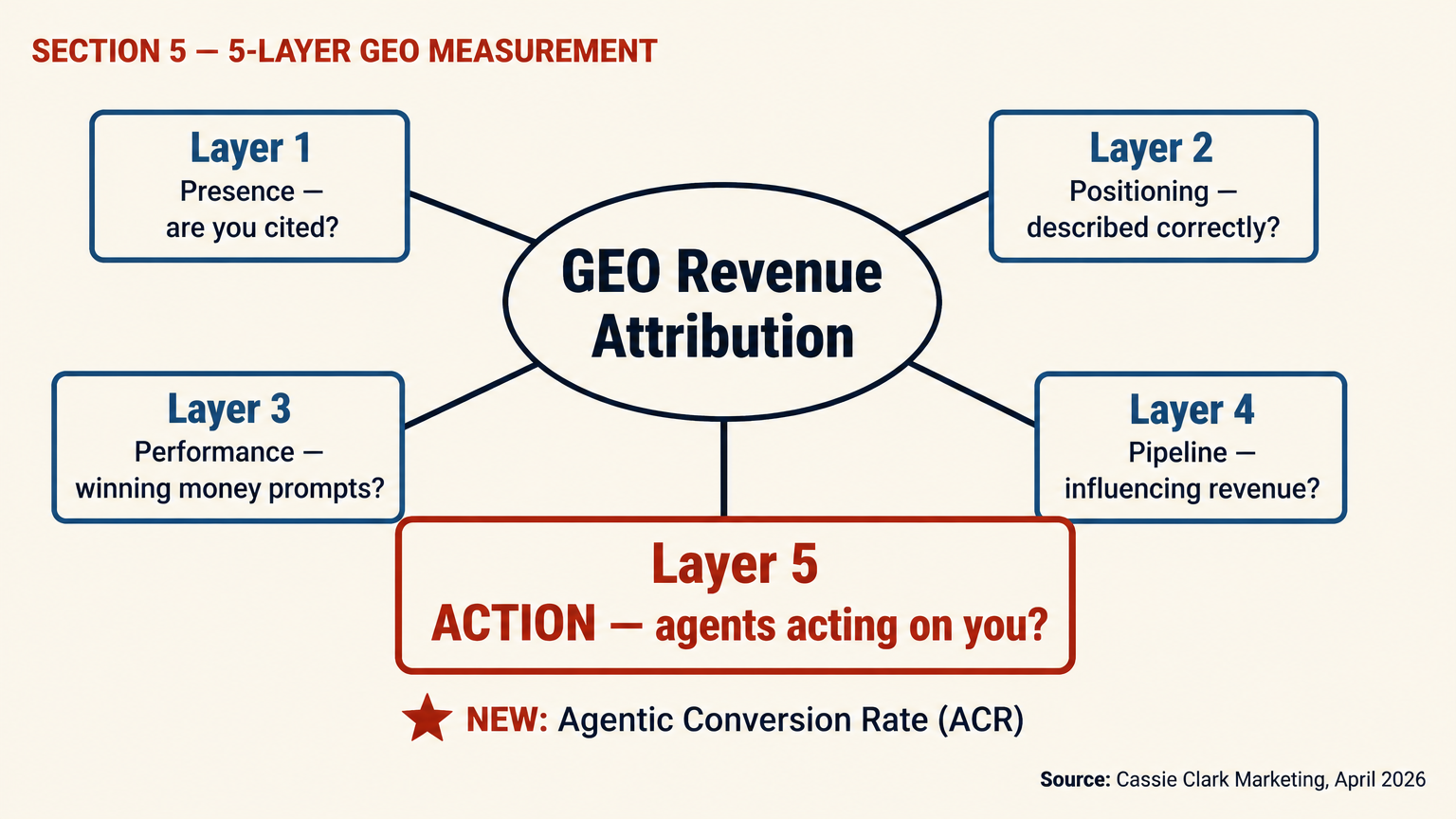

- The 5-layer GEO measurement plan (Presence, Positioning, Performance, Pipeline, Action) including the new Agentic Conversion Rate metric almost nobody tracks

- Concrete steps to identify hidden AI traffic in your current data and build a measurement stack that survives the zero-click world

A client came to me in March with a familiar complaint: paid search costs were up 22% year-over-year, conversion volume was flat, and the internal team wanted to cut content spend. I ran an attribution audit before touching the budget. Their direct traffic had grown 41% year-over-year. Their branded organic search was up 28%. No new paid brand campaigns explained it. Manual queries across ChatGPT, Perplexity, and Gemini showed their brand cited consistently for "best mid-market professional services consultants." Their content was earning AI citations at scale. The attribution model was hiding every single one of those conversions. Cutting content would have been exactly the wrong call.

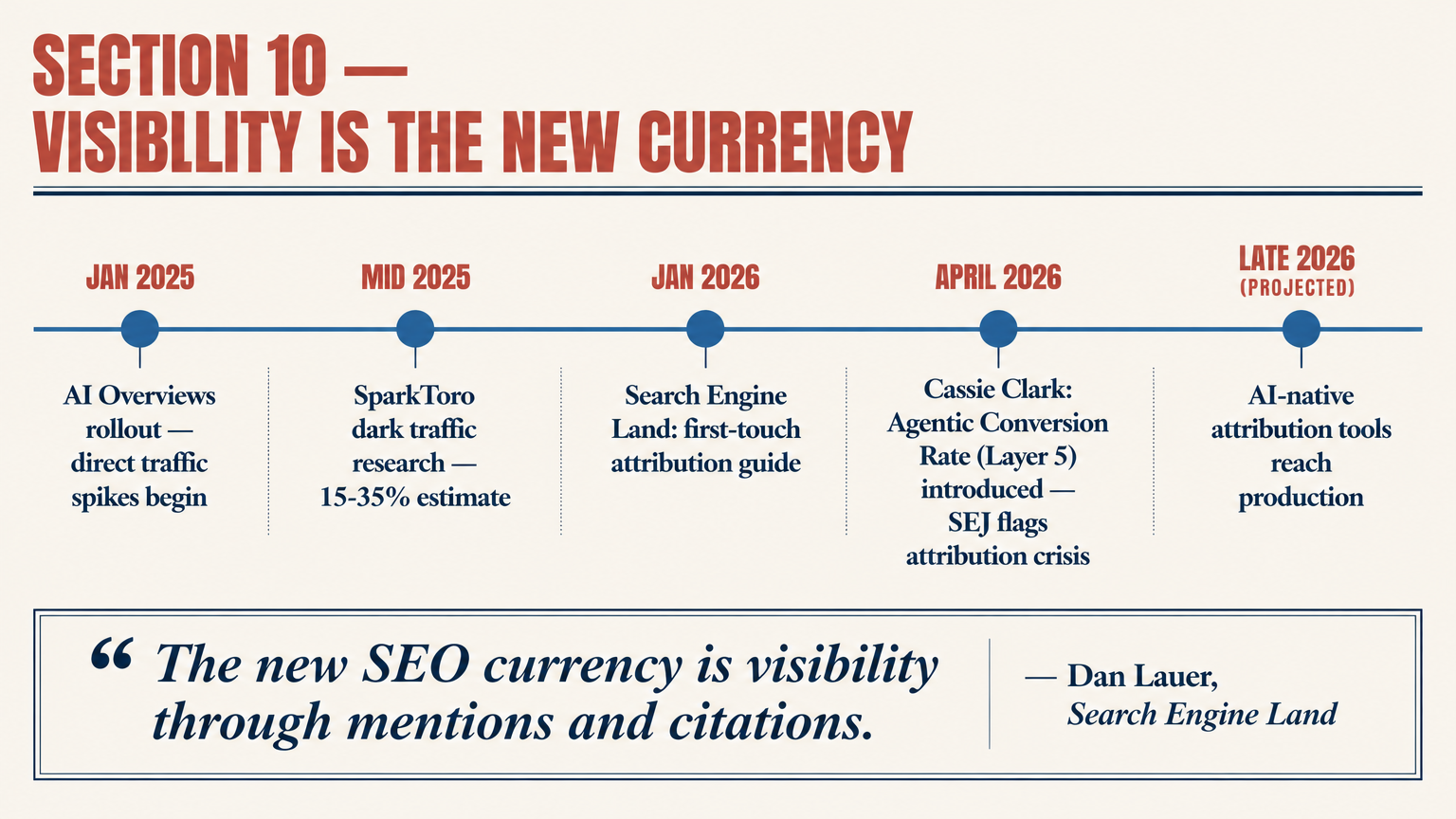

This isn't an isolated case. Search Engine Journal reported on April 29, 2026 that flawed AI tracking methods are skewing attribution models across the industry, creating false signals that push budget decisions in the wrong direction. (Source: Search Engine Journal, April 29, 2026.) The problem runs deeper than most teams realize, and the fix requires more than adding a GA4 channel group.

For a foundation on how AI search visibility works before measurement, see our guide on AI search visibility and SEO in 2026.

Why GA4 Can't See AI Traffic

GA4 was built for a world where people click links. The attribution logic, whether last-touch, first-touch, or data-driven, assumes a human makes a deliberate trip from point A to point B, and a referrer header documents the journey. AI search broke that assumption at a structural level.

When a user reads a ChatGPT answer that cites your brand, then types your URL into their browser, GA4 sees a direct visit. No referrer. No source. No medium. The AI's role in that discovery is invisible. Perplexity has started passing some referrer parameters for publisher partners, but ChatGPT, Gemini, Google AI Overviews, and Claude pass nothing systematic. The gap isn't a bug waiting for a patch. It's structural, and it won't be fixed by a platform update this quarter. (Source: Codedesign, 2026.)

There's a second, subtler problem: branded search inflation. When an AI mentions your brand, a portion of users search your brand name on Google rather than typing the URL directly. That traffic registers as branded organic search, which looks like SEO performance. It's actually AI-assisted discovery wearing SEO's clothes. The two require completely different optimization responses, and conflating them sends teams down the wrong path. (Source: Codedesign, 2026.)

Key takeaway

GA4's structural blind spot for AI-intermediated traffic isn't fixable with a setting toggle. It requires a deliberate multi-signal measurement rebuild. Teams that skip this are making Q2 and Q3 budget decisions on data that's missing a growing chunk of their top-of-funnel influence.

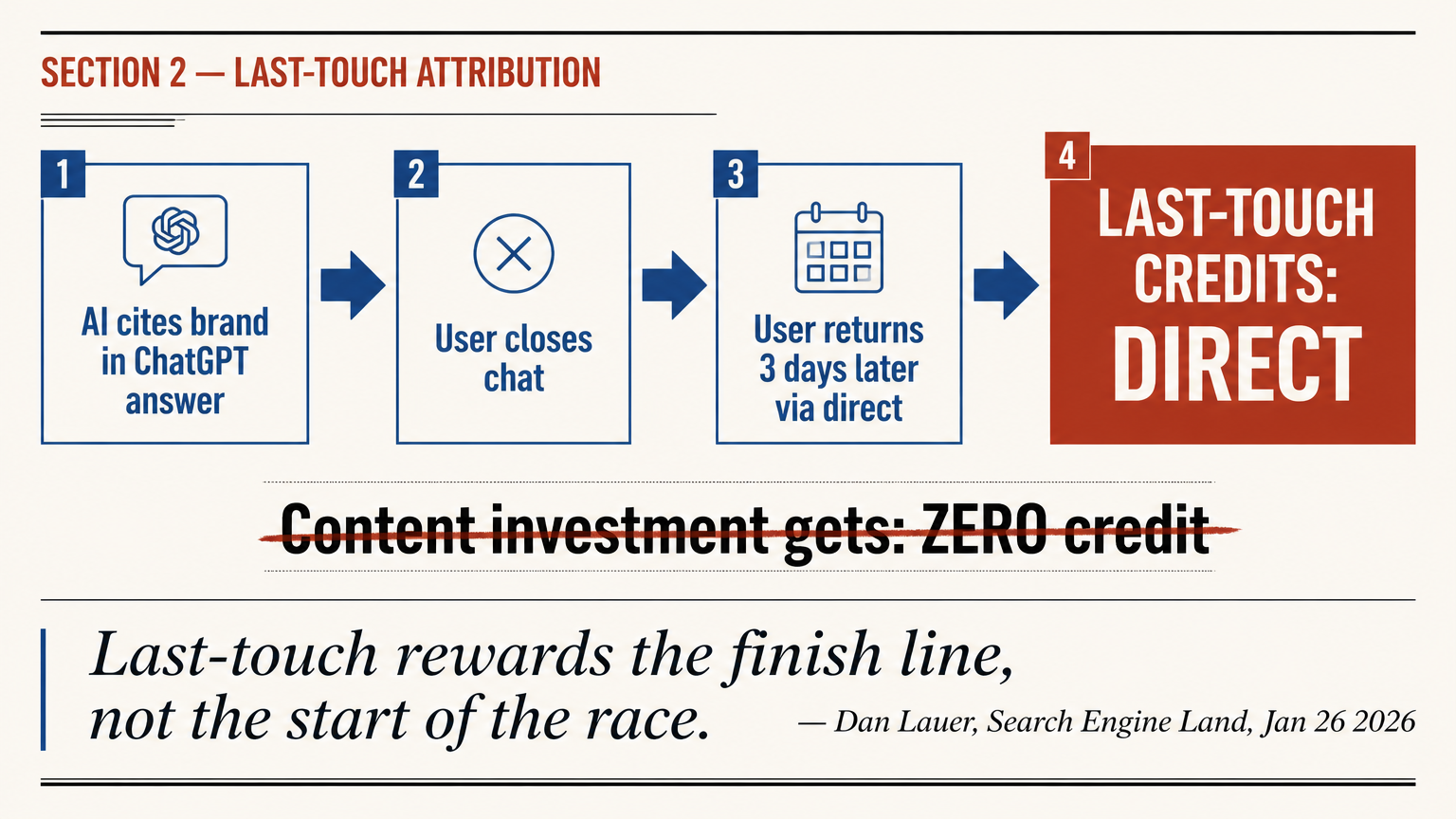

Last-Touch Attribution Is the Wrong Model for 2026

Last-touch attribution made sense when the buyer's path was: Google search, click, landing page, form fill. That path still exists. But the AI-mediated path looks like this: ask ChatGPT a research question, read a synthesized answer that mentions three vendors, close the chat, come back three days later and type one of those vendor URLs directly. Last-touch credits "direct." The content asset that earned the AI citation gets nothing.

Dan Lauer wrote about this in Search Engine Land on January 26, 2026: "We have not been accurately measuring organic search. Many organizations still rely on last-touch attribution, which measures the end of the customer journey, not the start." He makes the point that organic search now introduces the category, frames the problem, and builds brand credibility before the buyer visits the site, watches a video, or asks a follow-up question. Without first-touch visibility, that work is invisible. (Source: Search Engine Land, January 26, 2026.)

"Last-touch attribution rewards the finish line, not the start of the race. It collapses in an AI-first, zero-click world, especially for organic search."

Dan Lauer, Search Engine Land, January 26, 2026

The practical consequence: CFOs see organic traffic down 20–25% year-over-year in their GA4 reports and ask whether SEO is still worth funding. The honest answer is that organic is almost certainly performing better than the report shows. The measurement model is the problem, not the channel. I've had this exact conversation with three different heads of marketing this quarter. Every time, a first-touch analysis changed the conclusion.

Key takeaway

Switching from last-touch to first-touch attribution for SEO reporting is the single fastest way to restore accuracy. It won't fix everything, but it will immediately show leadership how organic search seeds the funnel rather than just closing it.

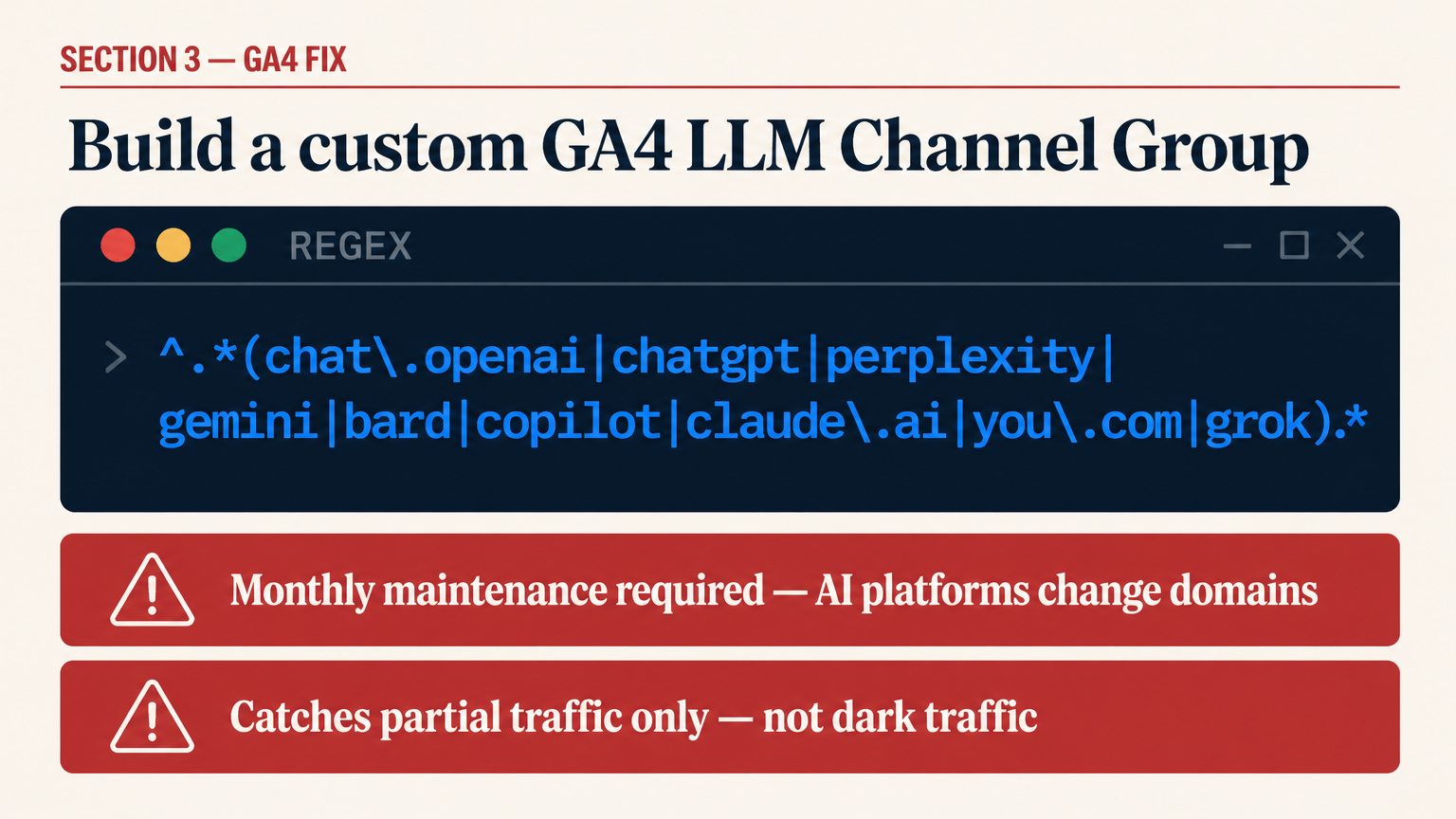

The GA4 Fix: Custom Channel Group for LLM Traffic

The most actionable short-term fix is a custom GA4 channel group that catches AI referrals before they fall into the generic buckets. Here's exactly how to build it.

In GA4, go to Admin → Data Display → Channel Groups → Create new channel group. Add a new channel called "AI / LLM Referral" and place it above all default channels so GA4 evaluates it first. The session source matching rule uses a regex pattern against the referrer domain:

    ^.*(chat\.openai|chatgpt|perplexity|gemini|bard|copilot|claude\.ai|you\.com|phind|poe\.com|meta\.ai|bing.*chat|grok).*$

Two important notes. First, this regex needs monthly maintenance. AI platforms change their domains and subdomain structures regularly. ChatGPT added canvas.apps.openai.com in early 2026; Perplexity rolled out new subdomains for its agent features. If you set this up once and walk away, it will drift. (Source: Airfleet, 2026.)

Second, this regex only catches the AI traffic that does pass referrer parameters. The structural dark traffic problem, the visitors who arrive with no referrer at all after reading an AI answer, requires the complementary approaches below. This channel group is a floor estimate, not a complete picture.
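Before pasting the pattern into GA4, it can help to test it offline against a handful of source values. The following is a minimal Python sketch, assuming the GA4 "matches regex" condition behaves like a full-string match and that session source arrives as a hostname. The function name `is_llm_source` and the sample hostnames are illustrative, not taken from real data.

```python
import re

# Pattern copied from the article. re.fullmatch stands in for GA4's
# "matches regex" condition, which is an assumption about how GA4 evaluates it.
LLM_SOURCE = re.compile(
    r"^.*(chat\.openai|chatgpt|perplexity|gemini|bard|copilot|claude\.ai"
    r"|you\.com|phind|poe\.com|meta\.ai|bing.*chat|grok).*$"
)


def is_llm_source(source: str) -> bool:
    """Return True when a session source value would land in the AI / LLM channel."""
    return LLM_SOURCE.fullmatch(source.strip().lower()) is not None


if __name__ == "__main__":
    # Illustrative source values only.
    samples = ["chatgpt.com", "www.perplexity.ai", "gemini.google.com",
               "google", "bard.edu", "thankyou.com"]
    for source in samples:
        print(f"{source:22} {is_llm_source(source)}")
```

Because the tokens are unanchored substrings, unrelated hostnames such as bard.edu or thankyou.com also match in this sketch. If values like those appear in your referral report, consider tightening the short tokens to full hostnames.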

For teams running Adobe Analytics or a custom CDP, the same logic applies: build a dedicated segment with the same regex pattern and apply it to your traffic source dimension. The mechanics differ; the underlying fix is identical.

Key takeaway

A custom GA4 LLM channel group built with up-to-date regex gives you a measurable floor for AI referral traffic. Treat it as one signal in a multi-input model, not a definitive number.

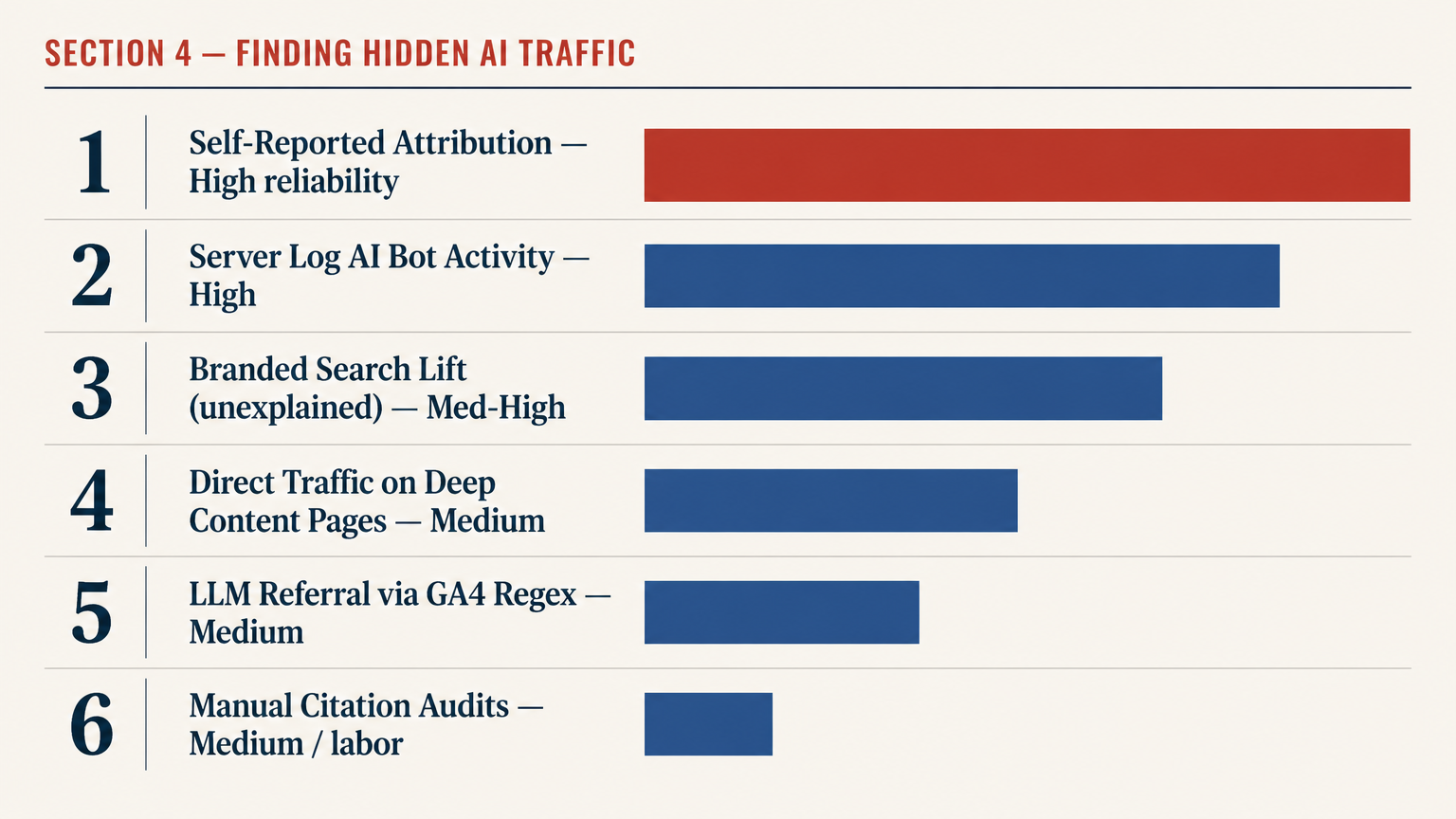

Finding the Hidden AI Traffic Already in Your Data

Before you rebuild your measurement stack, audit what's already there. Most teams have AI signal hiding in plain sight.

Start with your direct traffic segment. Break it down by landing page. AI citations drop users onto specific, deep content pages, not your homepage. If your direct traffic is spiking on a blog post about "best enterprise CRM platforms" rather than on your homepage or pricing page, that's a pattern worth investigating. AI-primed visitors also show shorter time-to-convert because they arrive already informed. Segment direct by landing page, then by conversion latency. The AI-referred cohort will show a measurably different behavior pattern. (Source: Codedesign, 2026.)
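The landing-page half of that audit can be sketched in a few lines of Python. This assumes you have exported direct sessions by landing page for two comparable periods. The function name, the thresholds, and the paths treated as "expected direct" are all hypothetical placeholders to tune for your own site.

```python
from typing import Dict, List, Tuple

# Pages where direct entry is expected anyway (hypothetical paths).
EXPECTED_DIRECT_PAGES = {"/", "/pricing"}


def flag_deep_direct_spikes(
    previous: Dict[str, int],
    current: Dict[str, int],
    min_growth: float = 0.5,
    min_sessions: int = 100,
) -> List[Tuple[str, float]]:
    """Return (landing_page, growth) for deep pages whose direct sessions spiked.

    Growth is (current - previous) / previous. Pages with no prior direct
    sessions are reported with infinite growth so they sort to the top.
    """
    flagged = []
    for page, now in current.items():
        if page in EXPECTED_DIRECT_PAGES or now < min_sessions:
            continue  # skip expected entry points and low-volume noise
        before = previous.get(page, 0)
        growth = (now - before) / before if before else float("inf")
        if growth >= min_growth:
            flagged.append((page, growth))
    return sorted(flagged, key=lambda item: item[1], reverse=True)
```

The output is a shortlist to investigate, not proof of AI influence. Pair it with the conversion-latency comparison described above before drawing conclusions.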

Second, look at branded organic search growth. Pull your branded keyword volume from Google Search Console for the past 12 months and overlay it against any paid brand campaigns you ran in the same period. If branded search grew without a corresponding paid push, something else is feeding discovery. In 2026, that something is almost always AI citation. Quantify the unexplained delta and put a conservative revenue estimate on it using your branded organic conversion rate.
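One way to put numbers on that delta is sketched below. The formula is an assumption of mine rather than a standard: unexplained lift equals the change in branded clicks minus whatever you attribute to paid brand activity, valued at your branded organic conversion rate and discounted by a `haircut` to keep the estimate conservative. All parameter names are hypothetical.

```python
def unexplained_branded_lift(
    clicks_prior: int,
    clicks_current: int,
    paid_attributed_clicks: int = 0,
) -> int:
    """Branded organic clicks gained that paid brand activity does not account for."""
    return max(clicks_current - clicks_prior - paid_attributed_clicks, 0)


def conservative_revenue_estimate(
    unexplained_clicks: int,
    branded_conversion_rate: float,
    revenue_per_conversion: float,
    haircut: float = 0.5,
) -> float:
    """Value the unexplained clicks, discounted by a haircut to stay conservative."""
    return (
        unexplained_clicks
        * branded_conversion_rate
        * revenue_per_conversion
        * haircut
    )


if __name__ == "__main__":
    # Hypothetical inputs: 28% branded growth with no paid brand push.
    lift = unexplained_branded_lift(clicks_prior=10_000, clicks_current=12_800)
    estimate = conservative_revenue_estimate(lift, 0.04, 500.0)
    print(lift, round(estimate))
```

The haircut is there because not every unexplained branded click comes from AI citation. Choose a discount you can defend to finance.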

Third, add a self-reported attribution field to your highest-intent forms. The question "How did you first hear about us?" with options including "ChatGPT / AI assistant" and "AI search (Perplexity, Gemini, etc.)" costs nothing to implement and produces data no analytics platform can generate automatically. Some teams go further and ask "What did you search or ask to find us?" in a free-text field. The responses are occasionally hilarious, consistently useful, and occasionally include the exact ChatGPT prompt a buyer used to discover you. (Source: Cassie Clark Marketing, 2026.)

| Signal | Where to Find It | What It Shows | Reliability |
|---|---|---|---|
| LLM referral traffic (with referrer) | GA4 custom channel group (regex) | AI platforms that pass referrer headers | Medium (misses dark traffic) |
| Direct traffic on deep content pages | GA4 → Sessions → Landing page + direct | Likely AI-primed visitors | Medium (directional only) |
| Branded search lift (unexplained) | GSC + paid brand spend overlay | AI-assisted brand discovery | Medium-high (strong proxy) |
| Self-reported attribution | Form field "How did you hear about us?" | Buyer's conscious memory of AI discovery | High (first-party) |
| Server log AI bot activity | Server logs filtered by AI crawler user agents | Which pages AI bots are crawling and caching | High (direct signal) |
| Manual AI citation audits | Weekly queries in ChatGPT, Perplexity, Gemini | Brand presence, framing, and competitor comparison | Medium (labor-intensive but accurate) |

The 5-Layer GEO Measurement Plan

Cassie Clark published the most practical GEO measurement breakdown I've seen this year. Her plan covers every stage from raw visibility through to revenue, and in April 2026 she added a fifth layer that most teams haven't thought about yet. Here's the structure with my annotations. (Source: Cassie Clark Marketing, April 2026.)

1. Presence: Are you showing up at all?

Citation presence rate (what percentage of tracked prompts cite your brand with a source link), mention rate (named without a link), platform coverage across ChatGPT, Perplexity, Gemini, Google AI Overviews, and Claude, and first-mention position. Presence is the entry ticket, not the win.

2. Positioning: Are you described correctly?

Use-case accuracy (does the AI name the right audience and problem?), sentiment, primary recommendation rate, and message alignment. A mention with wrong framing can hurt more than no mention. If ChatGPT consistently calls your B2B platform "a cheaper alternative to Competitor X," that's not a GEO win.

3. Performance: Are you winning the prompts that matter?

AI share of voice versus named competitors across your tracked prompt set, recommendation displacement rate, and brand comparison win rate when users ask "X vs Y" questions. Share of voice maps the competitive field. Citation counts in isolation are vanity metrics.

4. Pipeline: Is visibility influencing revenue?

AI-assisted referral traffic (floor estimate), branded search lift, direct traffic lift, self-reported attribution from forms, and pipeline that touches content assets driving GEO citations. This is the hardest layer to track precisely, but it's the one that funds your GEO budget. Build a multi-signal model; don't wait for a single perfect number.

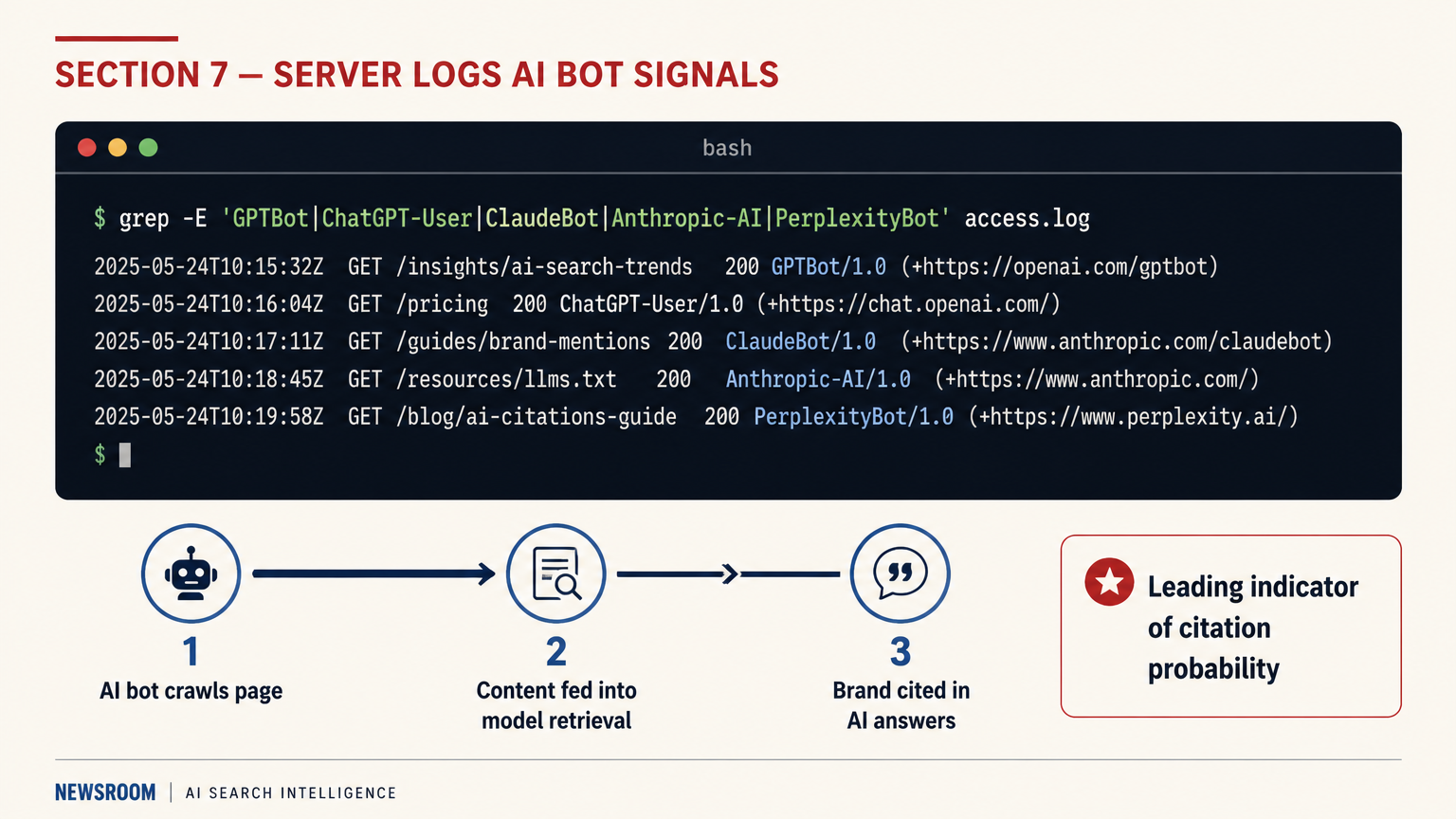

5. Action: Are agents acting on your presence?

The new layer. As AI agents (Claude in Chrome, ChatGPT agents, Perplexity Comet) execute tasks on behalf of buyers, being cited in an answer is no longer enough. The agent has to choose to act on that citation. Agentic Conversion Rate measures the percentage of agentic interactions that result in a meaningful action involving your brand: a form fill, a pricing page scrape, a demo booking, or inclusion in an RFP comparison doc. Almost no team is tracking this yet.

"Buyers are no longer just reading AI answers — they're handing tasks off to AI agents and letting those agents do things on their behalf. Which means a brand can be cited beautifully in an AI answer and still lose the deal because the agent didn't choose to act on that citation."

Cassie Clark, AI Search Expert, CassieClarkMarketing.com, April 2026

Layer 5 is worth sitting with. Right now, it's almost unmeasurable at scale outside of enterprise tools. But the brands that start monitoring server logs for AI crawler and agent user agents (GPTBot, ClaudeBot, PerplexityBot, Anthropic-AI, and user-triggered fetchers such as ChatGPT-User) and cross-referencing against content page depth will be ahead of the curve when agentic attribution tooling matures later in 2026.

Key takeaway

Most teams are stuck at Layer 1 or 2, measuring citation counts. The revenue conversation happens at Layers 4 and 5. Build toward pipeline attribution, and start logging agentic activity in your server logs now even if the analysis isn't there yet.
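The Layer 1 and Layer 3 numbers can be computed from a simple audit log. The sketch below assumes each manual audit produces one row per prompt and platform, recording which brands were named and which were cited with a link. The `AuditRow` structure is hypothetical, and the share-of-voice definition (a brand's share of all brand appearances) is my assumption, not Clark's published formula.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class AuditRow:
    """One audited AI answer (hypothetical structure for a manual audit log)."""
    prompt: str
    platform: str
    brands_mentioned: List[str] = field(default_factory=list)  # named in the answer
    brands_linked: List[str] = field(default_factory=list)     # cited with a source link


def presence_rate(rows: List[AuditRow], brand: str) -> float:
    """Share of audited answers that cite the brand with a source link."""
    if not rows:
        return 0.0
    return sum(brand in r.brands_linked for r in rows) / len(rows)


def mention_rate(rows: List[AuditRow], brand: str) -> float:
    """Share of audited answers that name the brand without linking to it."""
    if not rows:
        return 0.0
    unlinked = sum(
        brand in r.brands_mentioned and brand not in r.brands_linked for r in rows
    )
    return unlinked / len(rows)


def share_of_voice(rows: List[AuditRow]) -> Dict[str, float]:
    """Each brand's share of all brand appearances across the audited answers."""
    counts = Counter(
        brand
        for r in rows
        for brand in set(r.brands_mentioned) | set(r.brands_linked)
    )
    total = sum(counts.values())
    return {brand: n / total for brand, n in counts.items()} if total else {}
```

Tracking these per platform as well as in aggregate keeps the audit aligned with the platform coverage metric in Layer 1.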

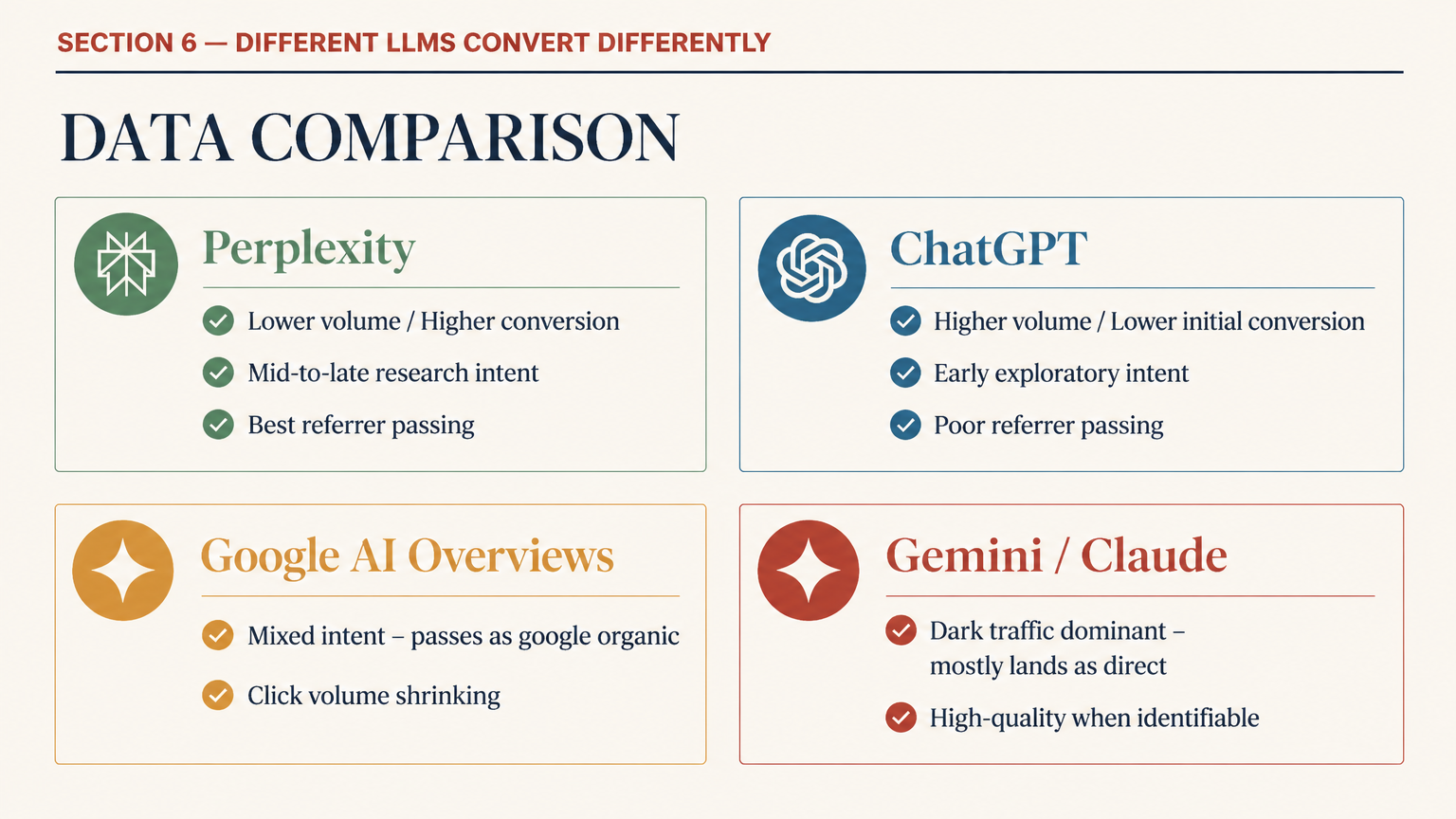

Different LLMs Drive Different Conversion Rates

Not all AI referral traffic converts at the same rate. This is the finding that most GEO guides skip over entirely, and it has direct implications for which platforms you should prioritize in your citation strategy.

Search Engine Journal reported on April 29, 2026 that data-driven GEO efforts should be segmented by LLM source rather than treating "AI traffic" as a monolithic category. (Source: Search Engine Journal, April 29, 2026.) The behavioral patterns differ by platform in ways that map to different buyer intent levels. Perplexity users tend to be deeper into research mode; ChatGPT users are more conversational and earlier in their process; Google AI Overviews users are the most intent-mixed of all, ranging from pure informational to transactional.

The practical implication: track your AI-referred traffic by source platform when you can, and segment conversion rates accordingly. Suppose Perplexity sends 30% fewer visitors than ChatGPT but converts at 3x the rate. In that scenario Perplexity produces roughly twice the conversions (0.7 × 3 = 2.1), so your content optimization effort should weight Perplexity citations more heavily than raw traffic share suggests. That pattern only appears when you stop treating AI referrals as a single bucket.
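To make the weighting concrete, here is a small Python sketch that ranks platforms by expected conversions instead of sessions. The session counts and conversion rates in the example are hypothetical, chosen only to mirror the 30%-fewer-visitors, 3x-rate scenario.

```python
from typing import Dict, List, Tuple


def rank_platforms_by_conversions(
    stats: Dict[str, Tuple[int, float]],
) -> List[Tuple[str, float]]:
    """Sort platforms by expected conversions (sessions x conversion rate), highest first.

    `stats` maps a platform name to (sessions, conversion_rate).
    """
    expected = {name: sessions * rate for name, (sessions, rate) in stats.items()}
    return sorted(expected.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    # Hypothetical numbers: Perplexity has 30% fewer sessions but 3x the rate.
    example = {"chatgpt": (1000, 0.01), "perplexity": (700, 0.03)}
    for platform, conversions in rank_platforms_by_conversions(example):
        print(f"{platform:12} {conversions:.1f}")
```

Ranking by sessions alone would put ChatGPT first. Ranking by expected conversions reverses the order.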

| AI Platform | Referrer Header Reliability | Typical User Intent Stage | Conversion Profile |
|---|---|---|---|
| Perplexity | Better than average (publisher partnerships) | Mid-to-late research | Lower volume, higher conversion rate |
| ChatGPT (web) | Inconsistent (no systematic referrer passing) | Early research / exploratory | Higher volume, lower initial conversion |
| Google AI Overviews | Partial (passes as google.com organic) | Mixed: informational to transactional | Traffic drop but often higher intent on clicks that do happen |
| Gemini (standalone) | Poor (mostly lands as direct) | Mid-research | Hard to isolate; blends into direct bucket |
| Claude (Anthropic) | Poor (no referrer passing in chat mode) | Technical / research-heavy | High-quality visitors when identifiable; converts well on technical content |

Server Logs: The Attribution Signal Everyone Ignores

Your server logs are the only place where AI bot activity shows up directly, before any analytics platform touches the data. This is where you can see which pages GPTBot, ClaudeBot, PerplexityBot, and Anthropic-AI are actually crawling, at what frequency, and whether they're successfully retrieving your content or hitting errors.

The crawl activity in your logs is a leading indicator of citation probability. If ClaudeBot is crawling your pricing page monthly but your comparison guide weekly, that's a signal about which content is being fed into Claude's training and retrieval systems. It's not a guaranteed citation predictor, but it's better directional data than guessing. (Source: Airfleet GEO tracking, 2026.)

Here's the minimal server log filter to start with:

    # Filter AI bot activity from access logs.
    # Assumes combined log format: field 7 is the request path, field 9 the status code.
    # -i makes the user-agent match case-insensitive.
    grep -iE "(GPTBot|ChatGPT-User|ClaudeBot|Anthropic-AI|PerplexityBot|GoogleOther|Bytespider|cohere-ai)" access.log \
      | awk '{print $7, $9}' \
      | sort | uniq -c | sort -rn \
      | head -50

This pulls the top 50 most-crawled URL and status-code pairs for AI bots. Run it monthly, track changes, and correlate against your citation audit results. Pages with high AI bot crawl frequency and zero citations in your manual audits are either being crawled but not cited (a content quality or authority issue) or being cited into dark traffic you can't trace yet.
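If you want the same counts broken out per bot, which the shell pipeline does not do, a short Python version is sketched below. It assumes the combined log format. The regex and the function name `count_ai_bot_hits` are my own illustration, and the bot list is copied from the filter above.

```python
import re
from collections import Counter
from typing import Iterable

# User-agent tokens taken from the shell filter above.
AI_BOTS = ("GPTBot", "ChatGPT-User", "ClaudeBot", "Anthropic-AI",
           "PerplexityBot", "GoogleOther", "Bytespider", "cohere-ai")

# Combined log format (assumed):
# ... "METHOD /path HTTP/x" status bytes "referer" "user-agent"
LOG_LINE = re.compile(
    r'"[A-Z]+ (?P<path>\S+) [^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def count_ai_bot_hits(lines: Iterable[str]) -> Counter:
    """Count hits keyed by (bot, path, status) for known AI bot user agents."""
    hits: Counter = Counter()
    for line in lines:
        match = LOG_LINE.search(line)
        if match is None:
            continue  # line is not in the assumed format
        user_agent = match.group("ua").lower()
        for bot in AI_BOTS:
            if bot.lower() in user_agent:
                hits[(bot, match.group("path"), match.group("status"))] += 1
                break
    return hits


if __name__ == "__main__":
    with open("access.log", encoding="utf-8", errors="replace") as handle:
        for (bot, path, status), n in count_ai_bot_hits(handle).most_common(50):
            print(n, bot, path, status)
```

Keeping the bot name in the key makes it easier to separate user-triggered fetchers such as ChatGPT-User from bulk crawlers when you compare months.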

Countering the "GEO Is Just SEO" Argument

Kristine Schachinger posted on LinkedIn on April 29, 2026, arguing that GEO, AEO, LLM optimization, and every other new acronym are all just SEO rebranded, and that the industry is overcomplicating what's still fundamentally the same discipline: create credible, authoritative content and earn citations. (Source: LinkedIn, Kristine Schachinger, April 29, 2026.)

She's partially right, and I'll say it plainly: the content quality principles haven't changed. E-E-A-T matters for AI citations for exactly the same reason it matters for Google rankings. Clear, accurate, attributed, expert-authored content gets cited by both. The practitioners selling "GEO" as a wholly new discipline are often overselling.

Where the argument falls short: attribution tracking is genuinely different. The measurement model for "did this piece of content drive revenue" doesn't work the same way when the distribution channel is an AI model that passes no referrer, influences a buyer who arrives three days later as direct, and whose agentic successor might fill out your demo form autonomously without a human ever reading your page. The optimization input (create great content) is similar. The measurement output (track what it produces) requires an entirely different stack. Conflating the two leads to SEO managers who are optimizing their content correctly but reporting on it incorrectly, which loses them budget.

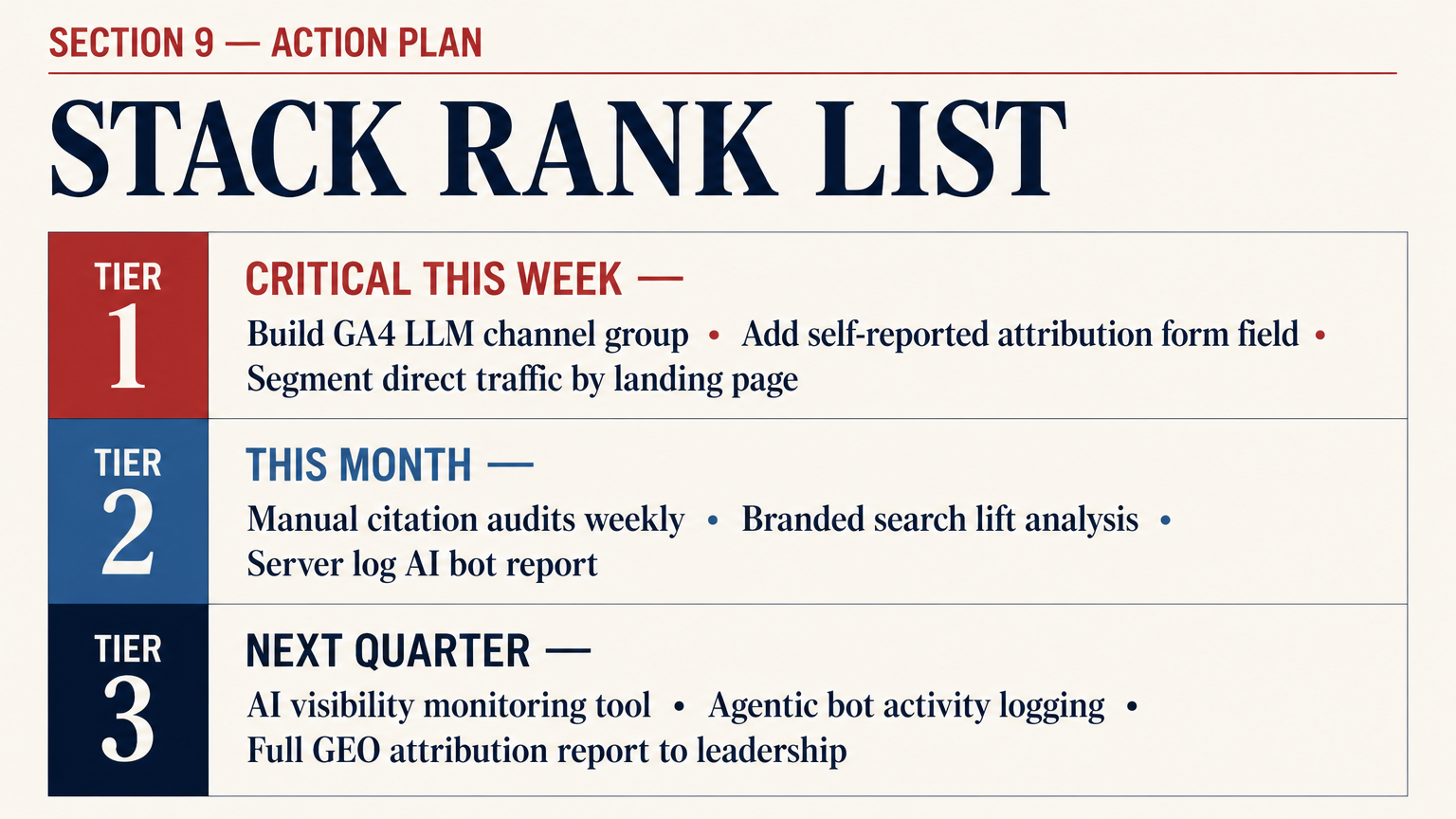

Action Plan: Fix Your GEO Attribution This Month

The measurement problem is solvable. Here's a prioritized action plan based on effort, cost, and speed of insight.

Critical (do this week)

- Build the custom GA4 LLM channel group with the regex filter above. Place it first in evaluation order.

- Add a self-reported attribution field to your highest-intent form ("How did you first hear about us?" with AI options).

- Pull your direct traffic by landing page for the past 6 months. Flag any deep content pages with unexplained spikes.

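- Set up a first-touch view in GA4. The reporting attribution model setting (Admin → Attribution settings) no longer offers First Click, which Google retired in 2023, so use the User acquisition report and the "First user" source/medium dimensions instead. Run that view in parallel with last-touch for 30 days.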

Important (do this month)

- Set up a manual AI citation audit cadence: 10 high-intent "money prompts" for your category, queried weekly across ChatGPT, Perplexity, Gemini, and Claude. Track presence, position, and framing.

- Pull branded search volume from GSC and overlay against paid brand spend. Quantify the unexplained delta.

- Filter server logs for AI bot user agents. Build a monthly crawl activity report.

- Create a Layer 4 pipeline report: AI-referred sessions, branded search lift, self-reported attribution, content pages influencing pipeline deals.

Next quarter

- Deploy a dedicated AI visibility monitoring tool (Waikay, BrandMentions AI tracking, or similar) to automate your citation audits at scale.

- Start logging and tagging AI agent bot activity separately from standard AI crawler activity in your server logs.

- Build an Agentic Conversion Rate proxy: track which of your pages are being crawled by AI agents (not just trainers) and correlate against pipeline stage of accounts that visited those pages.

- Present a full GEO attribution report to leadership: first-touch vs. last-touch delta, AI-referred traffic floor, branded search lift, pipeline influenced. Make the invisible visible before the next budget cycle.

Visibility Is the New Currency. Traffic Is the Old One.

The underlying shift here is about what SEO is actually producing. For a long time, the output was traffic. Clicks. Sessions. GA4 rows. That output is measurable, auditable, and easy to put on a slide. AI search is changing the output to visibility: brand mentions in AI answers, citations without clicks, impressions that never generate a session ID.

Dan Lauer put it well in Search Engine Land: "The new SEO currency in 2026 isn't keywords, impressions, or clicks — it's visibility through mentions and citations. If AI systems select which brands to cite, organic visibility becomes a prerequisite for consideration, not just traffic." (Source: Search Engine Land, January 26, 2026.)

This is a harder sell to a CFO than "organic traffic was up 15%." But it's accurate, and the teams that learn to make this argument with real pipeline data will be the ones still running funded SEO programs in 2027. The teams that don't will watch their budgets drain toward paid while their content quietly earns AI citations that nobody can see.

Fix the measurement first. The content strategy follows from what the measurement reveals.

FAQ

How do I track ChatGPT referral traffic in GA4?

ChatGPT does not systematically pass referrer headers, so most ChatGPT-referred visits land as direct traffic in GA4. The partial fix is a custom channel group using a regex filter on session source (chat.openai.com, chatgpt.com). This catches traffic where ChatGPT does pass the referrer, but misses the majority that doesn't. Supplement with self-reported attribution on your forms and segment direct traffic by landing page to find AI-primed visitors behaviorally.

Why is my direct traffic spiking even though I haven't changed anything?

If branded search is also growing and you haven't run new paid brand campaigns, the most likely explanation is AI citation activity. AI-mentioned brands see users type URLs directly or search brand names rather than clicking links in the AI answer. Run a manual citation audit across ChatGPT, Perplexity, and Gemini for your brand name and key product terms to confirm.

What is GEO attribution and why does it matter for SEO budgets?

GEO attribution is the process of measuring which revenue and pipeline activity was influenced by your brand appearing in AI-generated search answers. It matters for budgets because standard last-touch attribution in GA4 cannot see AI's role in the buyer journey. Teams that don't build GEO attribution models systematically under-credit content investment and over-credit paid search, which leads to wrong budget cuts.

What is Agentic Conversion Rate (ACR)?

Agentic Conversion Rate, coined by Cassie Clark in April 2026, measures the percentage of AI agent interactions involving your brand that result in a meaningful action: a form fill, a demo booking, a pricing page scrape, or inclusion in an RFP document. As AI agents (Claude in Chrome, ChatGPT Agents, Perplexity Comet) execute buying tasks on behalf of humans, being cited in an AI answer is no longer sufficient. The agent has to choose to act on that citation. ACR tracks that choice rate.

Does last-touch attribution still have any value in 2026?

Yes, for closed-loop campaign measurement where you control all touchpoints (email sequences, paid retargeting, etc.) and the traffic has clean UTM parameters. For organic and AI-influenced channels, last-touch is actively misleading. Run first-touch and multi-touch models in parallel for organic performance reporting.

Which AI platforms pass referrer data reliably?

Perplexity is the most reliable; it has publisher partnership programs that include referrer tracking. Google AI Overviews traffic passes as google.com organic search, which is captured by standard GA4 channels but not distinguishable from regular organic. ChatGPT, Gemini standalone, and Claude pass referrer data inconsistently or not at all. The coverage gap is structural, not a bug.

How often should I run manual AI citation audits?

Weekly for your top 10 money prompts, monthly for a wider set of 30–50 prompts. The cadence matters because AI model outputs change as models are updated, fine-tuned, or fed new retrieval data. A citation you had in January may be gone by April, or replaced with a competitor. Monthly-only audits miss changes that happen fast enough to matter for content strategy decisions.

Is GEO a separate discipline from SEO or the same thing?

The content optimization principles overlap heavily: authoritative, accurate, well-structured, expert-attributed content earns both Google rankings and AI citations. The measurement layer is genuinely different. GA4, GSC, and last-touch attribution were not built for AI-mediated discovery, and the fixes require deliberate new infrastructure. Think of GEO measurement as a new wing added to the SEO house, not a replacement for the whole building.