Build an AI Search Performance Dashboard in Claude in 15 Minutes — SE Ranking MCP + Live Artifacts Recipe

Oleksii Khoroshun's step-by-step recipe for building a live AI search performance dashboard inside Claude using SE Ranking MCP and Live Artifacts — tracking ChatGPT, Perplexity, and Gemini citations in real time.

The Zero-Click Reality: Why AI Search Visibility Matters in 2026

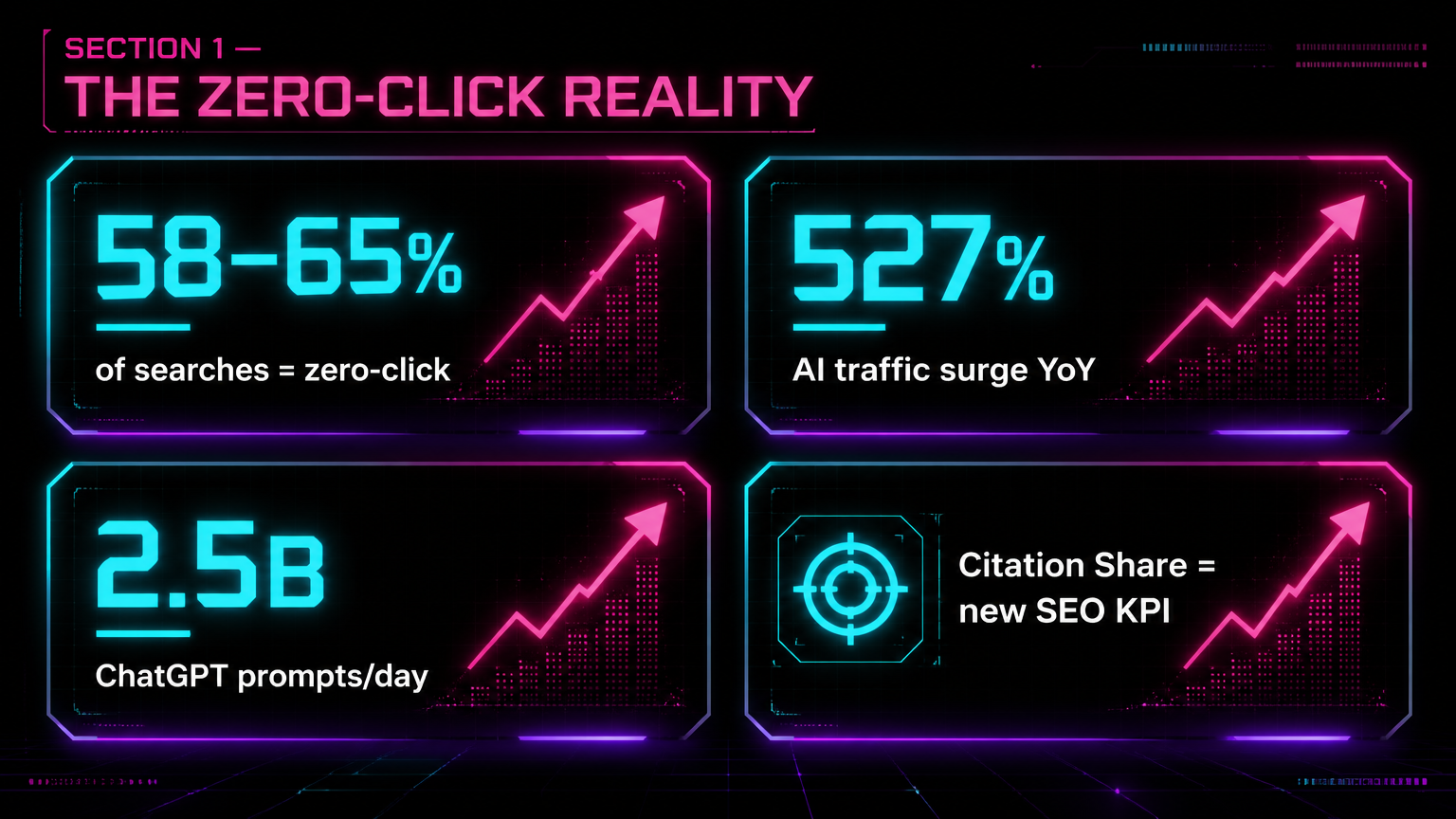

The search landscape has fundamentally shifted. As of early 2026, 58% to 65% of all searches result in a zero-click outcome, meaning users receive their answers directly from AI interfaces without ever navigating to a publisher's website. AI-referred sessions jumped 527% year-over-year in early 2025 (Source: Previsible's 2025 AI Traffic Report), and ChatGPT now processes 2.5 billion prompts per day, making it the dominant player in the global AI traffic market.

For modern SEO practitioners, optimizing for ten blue links is no longer sufficient. The discipline has evolved into Generative Engine Optimization (GEO), where the primary objective is to secure citations inside synthesized answers generated by Large Language Models (LLMs). As Kamil Rextin of 42 Agency notes, "Claude Code is for any marketer who needs to build an engine. It's not just for coding; it's for engineering distribution." The new standard of success is defined by "Citation Share" — if an AI agent summarizes your industry but fails to cite your brand, your effective SEO value is zero.

What Claude Live Artifacts Are and How They Work

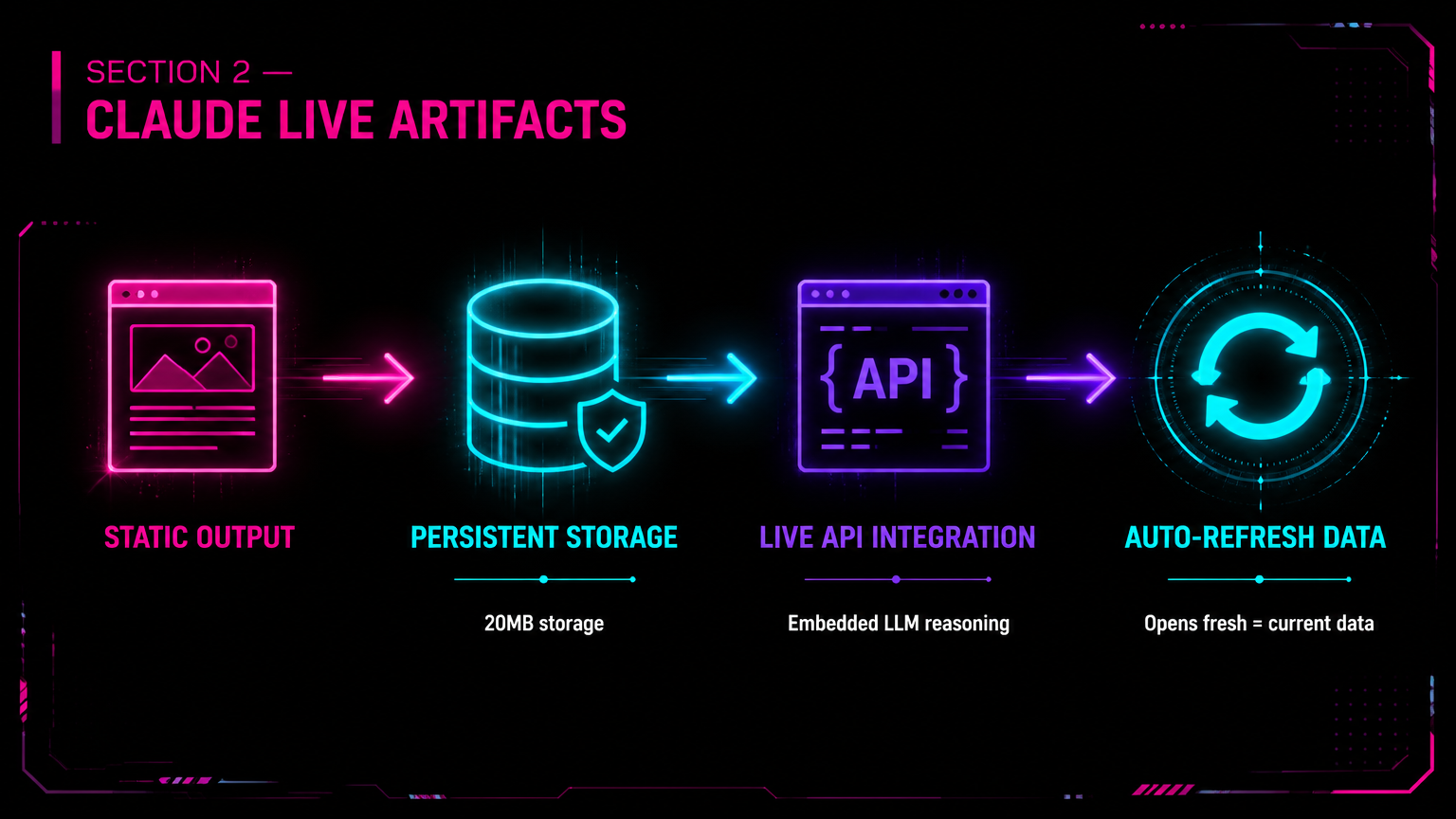

In April 2026, Anthropic released an infrastructure-level update to the Claude Cowork desktop app: Live Artifacts (Source: Anthropic). Previously, Claude Artifacts generated static code previews, documents, or graphics that disappeared into the chat history once a session ended. Live Artifacts transform Claude from a conversational chatbot into a persistent micro-app development environment.

Live Artifacts operate on three core upgrades:

- Persistent Storage: Artifacts now retain state across sessions, offering up to 20MB of storage per artifact on Pro, Max, Team, and Enterprise plans. This allows for dynamic dashboards that "remember" filters, date ranges, and custom views.

- Live API Integration: Artifacts can now embed Claude's reasoning engine directly within the tool itself, calling Claude's API to analyze data on the fly rather than relying on static front-end code (Source: eigent.ai).

- Auto-Refreshing Data: A dashboard built as a Live Artifact stays connected to your data sources. When you reopen the artifact days or weeks later, it automatically pulls and re-renders the most current data without requiring manual updates (Source: The AI Night).

Anastasia Kotsiubynska, writing on LinkedIn on April 22, 2026, was among the first SEO practitioners to publicly surface this capability: "You can now build live SEO dashboards directly in Claude — and they update automatically." Her post noted that the combination of Live Artifacts and active MCP server connections makes it possible to create a self-refreshing ranking tracker, a content gap detector, and a citation monitor, all inside a single Claude conversation window. Oleksii Khoroshun independently demonstrated the same capability the following day with his SE Ranking + GA4 recipe (Source: LinkedIn / Anastasia Kotsiubynska).

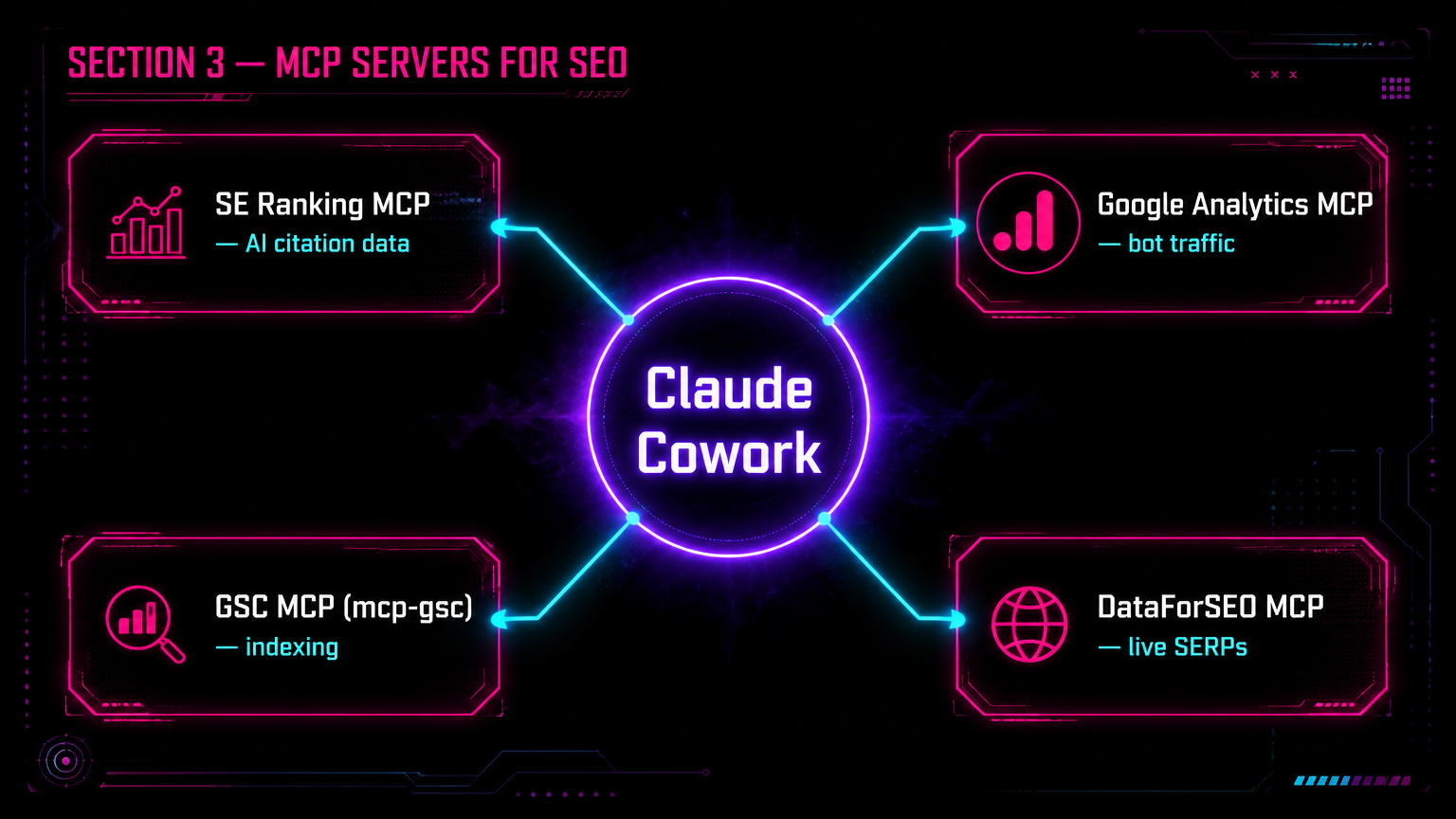

MCP Servers for SEO: Connecting Claude to Live Data

The bridge between Claude's front-end Live Artifacts and your backend SEO data is the Model Context Protocol (MCP). Developed by Anthropic, MCP is an open standard that replaces custom, fragile API integrations with a unified protocol, allowing AI assistants to securely fetch live data from external tools and databases.

By running MCP servers locally or remotely, SEOs can grant Claude direct access to their entire tech stack. As Burkan Bur, Head of SEO at The Ad Firm, explains: "The normal 15 to 20 minute cycle of exporting CSVs and reformatting spreadsheets is replaced with a single sentence typed into a chat window. You inquire about your site and the AI goes and gets the answer from your real data." (Source: SEOptimer)

Key SEO MCP servers available in 2026 include:

- SE Ranking MCP: Provides direct access to SE Ranking's massive datasets, including competitive research, keyword tracking, and their AI Search Toolkit (which monitors visibility across AI Overviews, Gemini, and Perplexity) (Source: SE Ranking).

- Google Search Console MCP (mcp-gsc): An open-source server that pulls search analytics, inspects indexing issues, and allows Claude to visualize click-through-rate decay over custom time periods (Source: SEOptimer).

- Google Analytics MCP Server: Officially maintained by Google, this server connects GA4 data directly to the LLM, enabling rapid extraction of session data and user engagement metrics (Source: Anthropic integrations).

- DataForSEO MCP: Connects to real-time SERP data across Google, Bing, and Baidu, offering access to keyword difficulty scores and backlink profiles (Source: SEOptimer).
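To make "running MCP servers" a little more concrete: Claude Desktop reads its server list from a JSON config file with a top-level `mcpServers` object, where each entry names a launch command, optional arguments, and optional environment variables. The Python sketch below only builds and prints such a structure. The server names, launch commands, and environment variable names are placeholders chosen for illustration, not the install instructions for any of the servers listed above; each server's own documentation is the authority on those.

```python
import json


def build_mcp_config(servers):
    """Return a dict shaped like Claude Desktop's `mcpServers` config."""
    config = {"mcpServers": {}}
    for name, spec in servers.items():
        entry = {
            "command": spec["command"],
            "args": list(spec.get("args", [])),
        }
        # Only include `env` when the server actually needs variables.
        if spec.get("env"):
            entry["env"] = dict(spec["env"])
        config["mcpServers"][name] = entry
    return config


# Hypothetical entries: every command, path, and variable name is a placeholder.
EXAMPLE_SERVERS = {
    "seranking": {
        "command": "path/to/seranking-mcp-launcher",
        "env": {"SERANKING_API_TOKEN": "<your-token>"},
    },
    "google-analytics": {
        "command": "path/to/ga4-mcp-launcher",
        "env": {"GOOGLE_APPLICATION_CREDENTIALS": "<path-to-credentials.json>"},
    },
}

if __name__ == "__main__":
    print(json.dumps(build_mcp_config(EXAMPLE_SERVERS), indent=2))
```

Generating the file from a script rather than editing it by hand makes it easier to keep API tokens out of version control, since the placeholders can be filled from a secrets store at build time.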

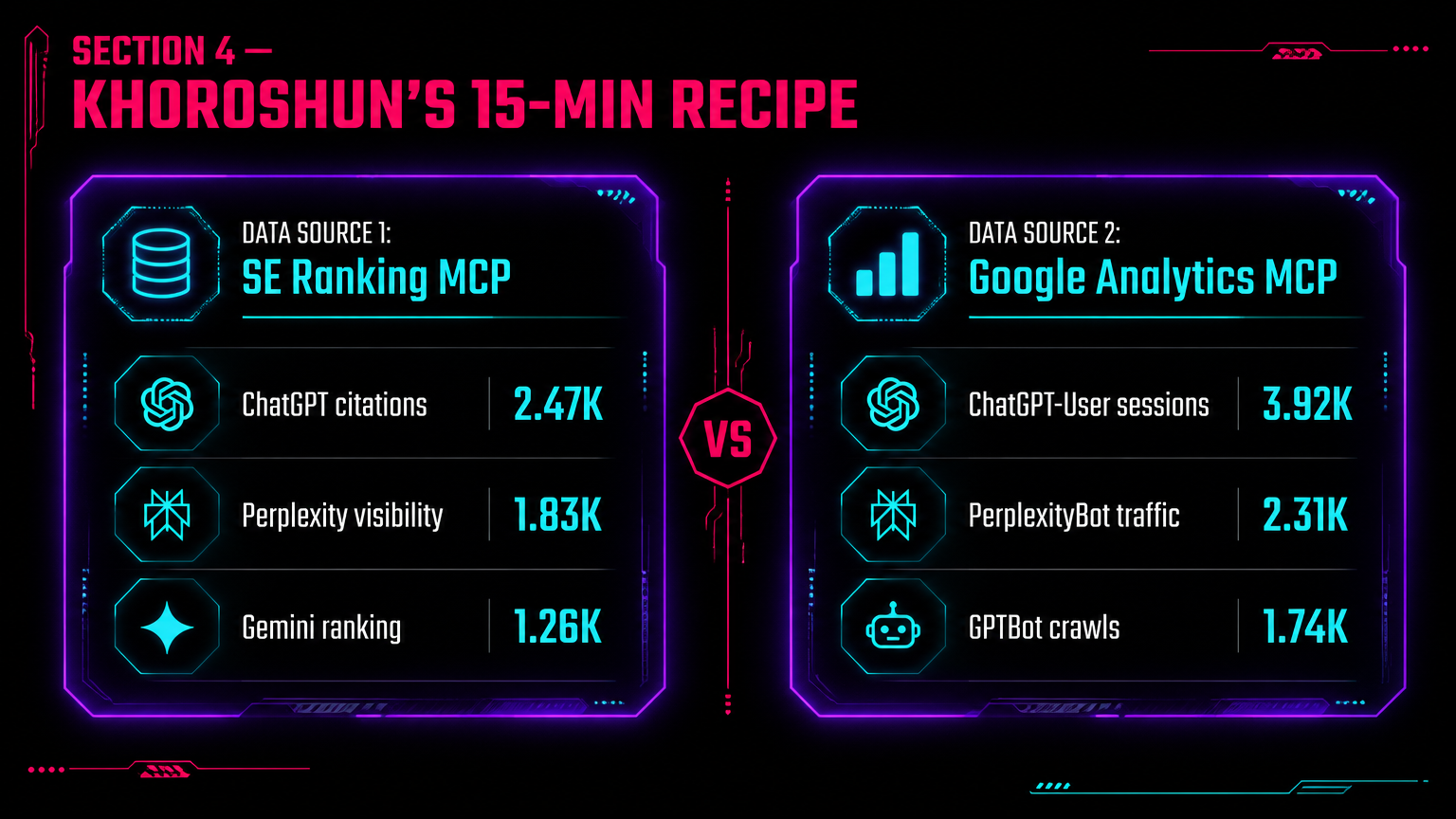

Oleksii Khoroshun's 15-Minute Recipe: The Exact Setup

On April 23, 2026, Oleksii Khoroshun — an SEO specialist at SE Ranking — published a LinkedIn post that immediately circulated through the GEO practitioner community. His headline: "It took me 15 minutes to build an AI search performance dashboard in Claude." The recipe combines two live data connections: the official Google Analytics MCP server and the SE Ranking MCP (Source: LinkedIn / Oleksii Khoroshun).

The data architecture is straightforward:

- Data Source 1 — SE Ranking MCP: Feeds AI search visibility scores — showing exactly how often and where your brand is cited by ChatGPT, Perplexity, Gemini, and Google AI Overviews. SE Ranking's AI Search Toolkit covers all major platforms and can be queried in natural language via MCP.

- Data Source 2 — Google Analytics MCP: Filters sessions by known AI user-agent strings (ChatGPT-User, PerplexityBot, Claude-Web, GPTBot) to attribute AI-driven referral traffic directly to specific pages and revenue events.

Connected inside Claude Cowork with Live Artifacts enabled, these two streams produce a single, auto-refreshing command center: citation share on the left, referral traffic attribution on the right.
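The two-stream architecture above can be sketched as a small merge step. The following Python is a minimal illustration, not Khoroshun's implementation: it assumes each session record carries a `user_agent` string and a `page` path, and that citation share arrives as a mapping from engine name to a fraction. Both shapes are hypothetical, and whether a given analytics export exposes user-agent strings at all is an assumption to verify against your own data.

```python
from collections import Counter

# Agent tokens named in the recipe; extend the tuple as new agents appear.
AI_AGENT_TOKENS = ("ChatGPT-User", "PerplexityBot", "Claude-Web", "GPTBot")


def classify_agent(user_agent):
    """Return the first known AI agent token found in the string, else None."""
    lowered = user_agent.lower()
    for token in AI_AGENT_TOKENS:
        if token.lower() in lowered:
            return token
    return None


def build_dashboard_payload(citation_share, sessions):
    """Combine both data streams into one dict a front end could render.

    citation_share: {engine: share between 0 and 1}       (hypothetical shape)
    sessions: iterable of {"user_agent": str, "page": str} (hypothetical shape)
    """
    by_agent = Counter()
    by_page = Counter()
    for session in sessions:
        agent = classify_agent(session["user_agent"])
        if agent is None:
            continue  # not an AI-attributed session
        by_agent[agent] += 1
        by_page[session["page"]] += 1
    return {
        "citation_share": dict(citation_share),
        "ai_sessions_by_agent": dict(by_agent),
        "ai_sessions_by_page": dict(by_page),
    }
```

Keeping classification in one function means the token list is the only thing to update when a platform changes or adds an agent string.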

Building the AI Search Performance Dashboard: Step-by-Step

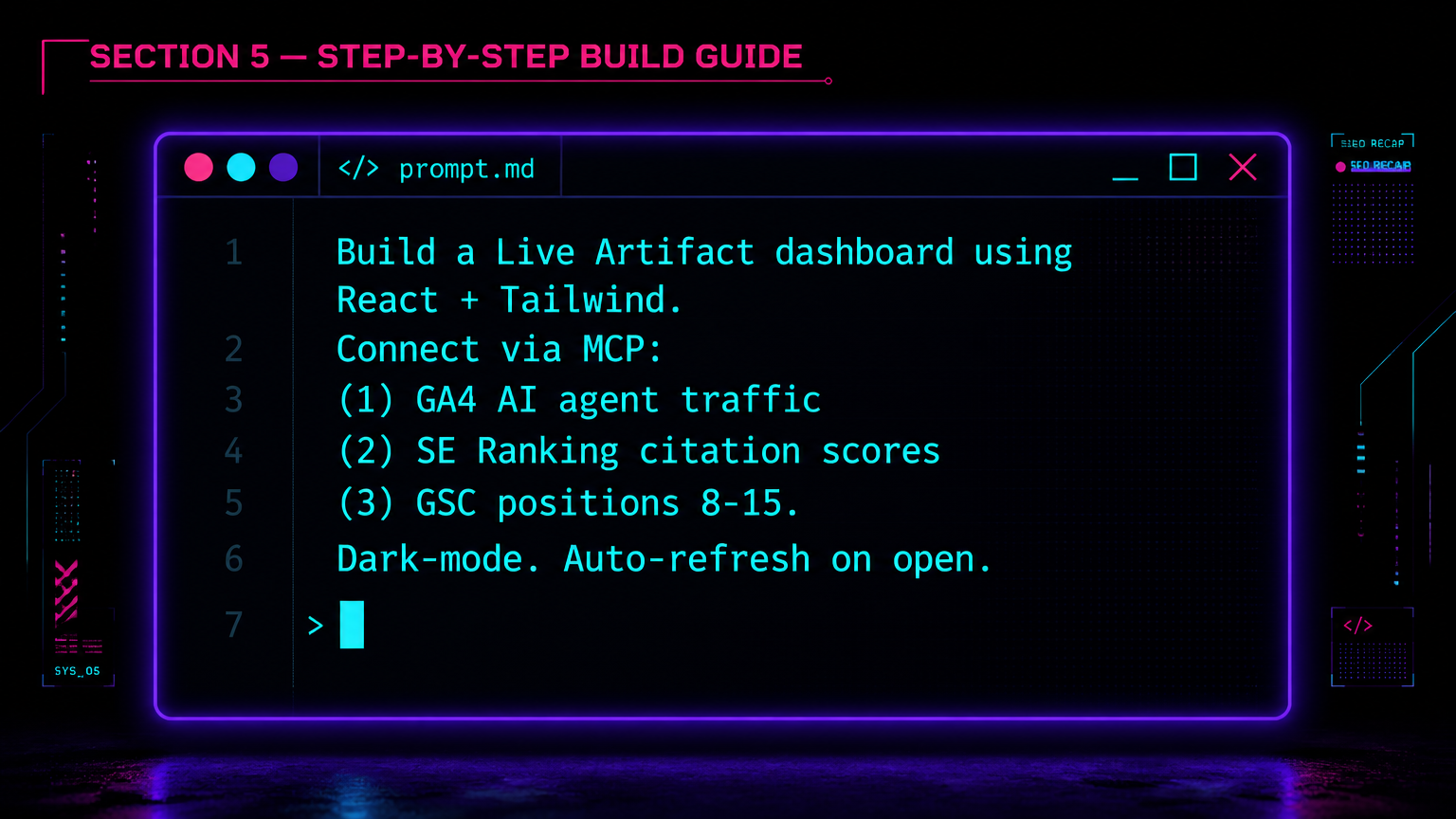

Here is the complete execution sequence, synthesized from Khoroshun's recipe and the Duke Digital Media Community's 30-Minute Dashboard framework (Source: Duke DDMC):

- Initialize the Environment: Open Claude Cowork. Ensure that Live Artifacts are enabled in your settings (under Capabilities). Confirm that your MCP servers for Google Analytics, Google Search Console, and SE Ranking are running and registered in your Claude Desktop host, which acts as the MCP client for each server.

- Verify MCP Connections: Type `/tools` in Claude to confirm all MCP servers are active and returning data. You should see your SE Ranking and GA4 tools listed as callable functions.

- Architect the Prompt: Use a highly specific prompt architecture to define the state, the UI framework (React/Tailwind), and the data connections. Using another AI like Gemini to pre-generate a strict spec sheet prevents Claude from inserting marketing clichés or brittle code.

- The Core Prompt Template:

"You are an expert React developer and technical SEO. Build a single-page Live Artifact dashboard using React and Tailwind CSS. The dashboard must connect via my active MCP servers to pull: (1) GA4 traffic filtered by known AI user agents (ChatGPT-User, PerplexityBot, Claude-Web), (2) SE Ranking AI Search Toolkit visibility scores for my brand across ChatGPT, Perplexity, and Gemini, and (3) Google Search Console impression data for 'almost ranking' queries (positions 8–15). Design a dark-mode interface with real-time tracking charts, a citation-share trend line, and a bot-traffic attribution table. The data must auto-refresh via MCP whenever the artifact is opened."

- Render and Activate: Claude will generate the underlying HTML, CSS, and JavaScript, rendering a Preview tab and a Code tab. Once the artifact appears in the right-hand panel, toggle the status to "Live." This connects the Model Context Protocol, allowing the dashboard to reach out to your SE Ranking and GA4 accounts to pull the latest metrics in real time.

- Iterate and Refine: Use Claude's component-highlighting feature to edit specific sections. Highlight the GA4 traffic chart and instruct: "Add a 30-day trailing comparison line." Claude rewrites only the selected block without disrupting the rest of the application.
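The "almost ranking" filter named in the prompt template (positions 8 to 15) is simple enough to check by hand before trusting the rendered chart. A minimal Python sketch, assuming each Search Console row has already been flattened into a dict with `query`, `impressions`, and `position` keys (a hypothetical shape; the actual MCP tool output may differ):

```python
def almost_ranking(rows, low=8.0, high=15.0, limit=20):
    """Keep queries whose average position is within [low, high], busiest first."""
    hits = [row for row in rows if low <= row["position"] <= high]
    hits.sort(key=lambda row: row["impressions"], reverse=True)
    return hits[:limit]


if __name__ == "__main__":
    # Made-up rows for illustration only.
    sample = [
        {"query": "ai search dashboard", "impressions": 900, "position": 9.4},
        {"query": "mcp seo", "impressions": 1500, "position": 12.1},
        {"query": "geo tools", "impressions": 4000, "position": 3.2},
    ]
    for row in almost_ranking(sample):
        print(row["query"], row["impressions"], row["position"])
```

Sorting by impressions surfaces the queries where a small ranking gain would reach the most searchers, which is usually the point of an "almost ranking" view.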

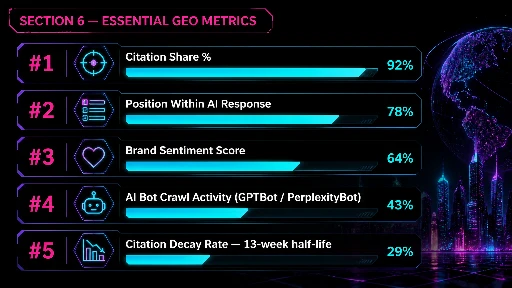

Essential Metrics to Track for GEO and AI Search

Traditional SEO dashboards track clicks, bounce rates, and keyword rankings. A modern LLM-native dashboard must measure semantic authority and citation likelihood. When constructing your Live Artifact, ensure it tracks these KPIs:

- Visibility Percentage / Citation Share: The percentage of relevant AI search responses (across ChatGPT, Perplexity, Gemini, and Google AI Overviews) that explicitly cite your brand or content (Source: SE Ranking AI Search Toolkit; AI Labs Audit).

- Position Within AI Response: Where your brand appears within the AI answer. Being the first recommendation in an AI-generated list yields disproportionately higher exposure than being fifth.

- Brand Sentiment: How the AI describes your brand. LLMs synthesize sentiment from across the web; tracking whether mentions are positive, neutral, or negative is critical for evaluation-stage prompts (e.g., "Is Software X reliable?").

- AI Bot Crawl Activity: Track real-time server hits from `GPTBot`, `ClaudeBot`, and `PerplexityBot` using log analyzers or tools like AI Labs Audit. Clients unknowingly blocking these agents in `robots.txt` are invisible to conversational interfaces (Source: AI Labs Audit).

- Citation Decay Rate: Research shows 50% of content cited in AI answers is less than 13 weeks old (Source: Frase). Tracking the age of your cited statistics ensures you can refresh them before a competitor displaces you.
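The first two KPIs in the list above reduce to simple arithmetic once the AI responses are sampled. The Python sketch below assumes each sampled response is a dict with a `cited_brands` list in the order the brands appear in the answer. That record shape is hypothetical and is not the SE Ranking or AI Labs Audit output format.

```python
def citation_share(responses, brand):
    """Fraction of sampled responses whose cited-brand list includes `brand`."""
    if not responses:
        return 0.0
    cited = sum(1 for r in responses if brand in r["cited_brands"])
    return cited / len(responses)


def mean_position(responses, brand):
    """Average 1-based rank of `brand` across the responses that cite it."""
    ranks = [
        r["cited_brands"].index(brand) + 1
        for r in responses
        if brand in r["cited_brands"]
    ]
    return sum(ranks) / len(ranks) if ranks else None


if __name__ == "__main__":
    # Made-up sample: four prompts, "Acme" cited in three of them.
    sample = [
        {"cited_brands": ["Acme", "Globex"]},
        {"cited_brands": ["Globex", "Initech", "Acme"]},
        {"cited_brands": ["Globex"]},
        {"cited_brands": ["Initech", "Acme"]},
    ]
    print(citation_share(sample, "Acme"), mean_position(sample, "Acme"))
```

Reporting position only over responses that cite the brand keeps the two metrics independent: share answers "how often", position answers "how prominently".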

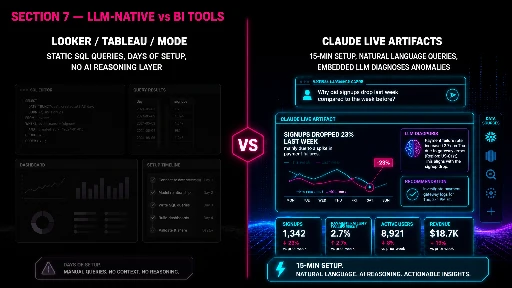

LLM-Native Dashboards vs. Traditional BI Tools

The broader trend in 2026 is the transition toward "Agentic BI." Traditional Business Intelligence tools like Looker, Tableau, and Mode were built for a pre-AI world: extensive data engineering, static SQL queries, rigid dashboard structures. They remain powerful for querying multi-terabyte data warehouses with complex join logic, and for enterprise-wide financial reporting where governance and audit trails are mandatory.

Where LLM-native dashboards (like Claude Live Artifacts) win is in reasoning fluidity. A Live Artifact is not just a visual layer — it contains an embedded AI reasoning engine (Source: eigent.ai). Instead of merely displaying a chart showing a 10% drop in AI citation share, an SEO practitioner can ask the Live Artifact directly: "Why did our Perplexity citations drop this week?" The embedded Claude API queries the SE Ranking MCP, cross-references it with recent content updates, and outputs a diagnostic answer. That capability doesn't exist in Looker.
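Before handing a "why did it drop?" question to the embedded model, the dashboard first has to notice the drop. A minimal Python sketch of that detection step, assuming citation share is stored as one value per week and using a 10% relative decline as the trigger (the threshold mirrors the example above and is an assumption, not a recommended setting):

```python
def flag_drops(weekly_share, threshold=0.10):
    """Return (week_index, relative_change) for weeks where share fell by
    at least `threshold` relative to the previous week."""
    flagged = []
    for i in range(1, len(weekly_share)):
        previous, current = weekly_share[i - 1], weekly_share[i]
        if previous <= 0:
            continue  # no baseline to compare against
        change = (current - previous) / previous
        if change <= -threshold:
            flagged.append((i, change))
    return flagged


if __name__ == "__main__":
    # Made-up weekly citation-share values for illustration.
    for week, change in flag_drops([0.40, 0.41, 0.36, 0.37]):
        print(f"week {week}: {change:.1%}")
```

Only the flagged weeks would then be passed to the model along with recent content changes, which keeps the diagnostic prompt small and the MCP calls targeted.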

| Dimension | Looker / Tableau / Mode | Claude Live Artifacts + MCP |
|---|---|---|
| Setup Time | Days to weeks (data modeling required) | 15–30 minutes (prompt-driven) |
| Query Interface | SQL / LookML / drag-and-drop | Natural language |
| Reasoning Layer | None (visualization only) | Embedded LLM that can diagnose anomalies |
| Data Scale | Multi-terabyte warehouse queries | Constrained by MCP rate limits + context window |
| Governance / Audit | Enterprise-grade (SOC 2, RBAC) | Evolving; requires manual security policy |
| AI Citation Metrics | Not supported natively | First-class via SE Ranking / AI Labs Audit MCP |

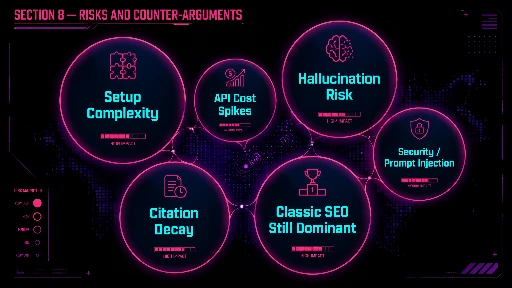

Risks, Limitations, and Counter-Arguments in Agentic SEO

While the integration of Claude Live Artifacts and MCP servers offers a step-change in SEO ops, the landscape is not without friction.

Setup Complexity and API Costs: Configuring API keys, running local MCP servers, and managing secure JSON connectors can be prohibitive for non-technical marketers. Heavy data queries executed by an autonomous AI agent can rapidly consume API credits, leading to unexpected billing spikes.

Security and Privacy Risks: Live Artifacts that continuously read from your screen or local environment carry inherent risks. Unencrypted local memory files and rapid rate-limit drains have been observed in similar systems. Connecting sensitive internal CRM or analytics data via MCP requires strict governance to prevent accidental data leakage or prompt injection vulnerabilities.

The Hallucination Factor: AI interpretation has limits. While Claude can parse a massive CSV of keyword data, it may occasionally misinterpret correlations or provide oversimplified recommendations. Human oversight remains mandatory. Experts warn that AI models can make errors in reasoning, and deploying fixes autonomously without a human-in-the-loop can damage a site's technical health.

Citation Decay: A major counter-argument to heavy GEO investment is the ephemeral nature of AI citations. "Citation decay has three causes: statistical decay, structural decay, and competitive decay." (Source: Frase) Unlike a high-quality backlink that may provide SEO value for years, an AI citation must be continuously defended through aggressive content refreshing.
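Defending citations starts with knowing which cited pages have gone stale. The Python sketch below flags pages whose last update is older than a cutoff. The 13-week default borrows the Frase figure quoted in this article as an illustrative threshold only, and the `url` / `last_updated` record shape is hypothetical.

```python
from datetime import date


def stale_citations(pages, today, max_age_weeks=13):
    """Return (url, age_in_weeks) for pages last updated more than
    `max_age_weeks` ago, oldest first."""
    stale = []
    for page in pages:
        age_weeks = (today - page["last_updated"]).days / 7
        if age_weeks > max_age_weeks:
            stale.append((page["url"], round(age_weeks, 1)))
    return sorted(stale, key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    # Made-up pages for illustration.
    pages = [
        {"url": "/stats-2026", "last_updated": date(2026, 1, 1)},
        {"url": "/guide", "last_updated": date(2026, 4, 1)},
    ]
    print(stale_citations(pages, today=date(2026, 5, 1)))
```

Passing `today` explicitly, instead of calling `date.today()` inside the function, keeps the check reproducible when it runs inside a scheduled report.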

The "Not All SEO Traffic Is Replaceable" Argument: Some practitioners argue that chasing AI citation share over classic organic optimization is premature. According to SE Ranking's 2025 AI traffic analysis, DeepSeek holds only 0.37% of AI traffic and Claude only 0.17% (Source: SE Ranking). For most industries, Google organic still dominates, and abandoning foundational SEO for GEO is a high-risk pivot.

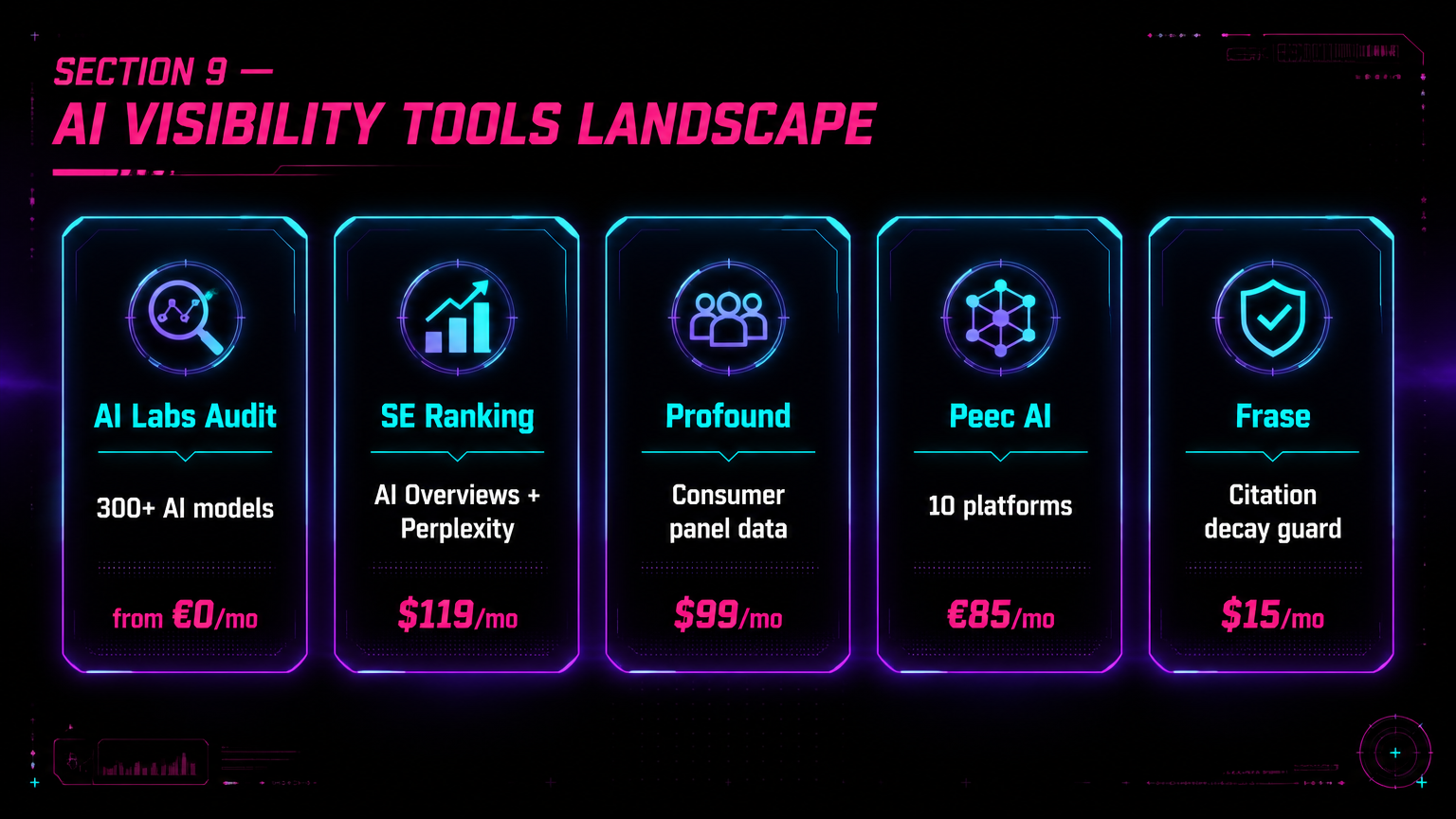

The Competitive Landscape of AI Search Visibility Tools

For organizations lacking the technical resources to build a custom Claude Live Artifact dashboard, a robust ecosystem of off-the-shelf AI visibility tools has emerged in 2026:

| Platform | Key Differentiators | Pricing |
|---|---|---|
| AI Labs Audit | 300+ AI models queried simultaneously; native open-source AI bot tracker; own MCP server with 94 tools (Source: AI Labs Audit) | From €0 / €69 per month |
| SE Ranking AI Toolkit | All-in-one SEO + GEO; covers AI Overviews, AI Mode, ChatGPT, Gemini, Perplexity; robust MCP integration (Source: SE Ranking) | From $119 per month |
| Profound | Double opt-in consumer panel data (not synthetic API estimates); SOC 2 / GDPR / CCPA compliant (Source: AI Labs Audit comparison) | From $99 per month |
| Peec AI | 10 platforms tracked; proprietary "Actions" module converts data into scored remediation to-do lists; MCP server included (Source: AI Labs Audit comparison) | From €85 per month |
| Frase | Closed-loop "Content Guard" autonomously detects and fixes citation decay; integrated GEO content editor (Source: Frase) | From $15 per month |

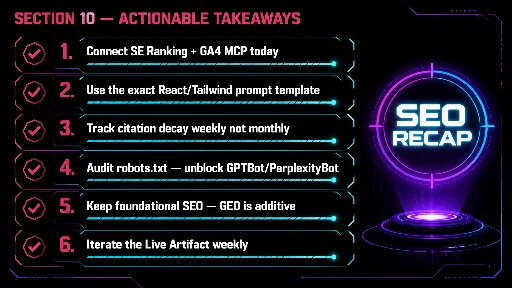

Actionable Takeaways for SEO Practitioners

- Start with the SE Ranking + GA4 MCP stack today. Both MCP servers are available and documented. Even without a Live Artifact, connecting Claude to live GSC and rank-tracking data eliminates the CSV-export cycle immediately.

- Use the prompt template verbatim. The specificity of the prompt (React, Tailwind, dark-mode, named MCP data sources) is what prevents Claude from generating a generic, unusable dashboard skeleton.

- Track citation decay weekly, not monthly. With half of the content cited in AI answers being less than 13 weeks old, monthly reporting cycles can miss the window for intervention. Build a weekly citation-refresh cadence into your editorial calendar.

- Block no AI bots: audit your `robots.txt` immediately. Any disallow rules for `GPTBot`, `ClaudeBot`, or `PerplexityBot` are directly harming your AI search visibility. Audit and remove them.

- Don't abandon traditional SEO for GEO. SE Ranking's traffic data confirms AI platforms still account for a small fraction of total web traffic. Build GEO as an additive layer, not a replacement strategy.

- Treat the Live Artifact as a living document. Iterate on it weekly. Add new metrics (e.g., brand sentiment scoring), new data sources (e.g., Perplexity API), and new visualizations as the AI search landscape evolves.
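The `robots.txt` audit from the takeaways above can be automated with Python's standard-library `urllib.robotparser`. This is a minimal sketch that checks the three crawler names used in this article against robots.txt text you supply. The sample rules are made up, and fetching the live file from your own domain is left out.

```python
from urllib.robotparser import RobotFileParser

# Crawler names taken from this article; extend as needed.
AI_CRAWLERS = ("GPTBot", "ClaudeBot", "PerplexityBot")


def blocked_ai_crawlers(robots_txt, url="/"):
    """Return the AI crawlers that the given robots.txt text disallows for `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [bot for bot in AI_CRAWLERS if not parser.can_fetch(bot, url)]


# Made-up rules for illustration: GPTBot is blocked, everyone else is allowed.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

if __name__ == "__main__":
    print(blocked_ai_crawlers(SAMPLE_ROBOTS))
```

Running the check against several representative URLs (home page, a blog post, a product page) is worthwhile, because disallow rules are often scoped to a path rather than the whole site.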

FAQ: Generative Engine Optimization & MCP Servers

- What is Generative Engine Optimization (GEO)?

  GEO (also known as AEO or LLMO) is the practice of structuring and enhancing content to maximize its likelihood of being cited as a source when AI platforms (like ChatGPT, Perplexity, or Google AI Overviews) generate responses to user queries (Source: Frase). It shifts the focus from winning clicks to establishing semantic authority.

- What does MCP stand for, and why is it important for SEO?

  MCP stands for Model Context Protocol. It is an open standard developed by Anthropic that allows AI assistants to securely connect to external databases, tools, and APIs (Source: SEOptimer). For SEOs, it eliminates manual CSV exports, allowing Claude to query live data from Google Search Console, GA4, or rank trackers using natural language.

- How do Claude Live Artifacts handle state and memory?

  Unlike previous iterations of Artifacts that reset when closed, Live Artifacts feature persistent storage of up to 20MB per artifact. This allows the application to remember user inputs, custom filters, and data states across multiple sessions (Source: eigent.ai).

- What is the primary structural difference between content built for SEO versus GEO?

  SEO values long-form content that comprehensively covers a broad topic. GEO requires content to be semantically chunked: each H2 section or paragraph must be self-contained so that an AI engine can extract a specific fact without needing the surrounding context (Source: Frase; stormy.ai).

- How can I track AI search visibility in Google Analytics 4 (GA4)?

  Create custom segments in GA4 that filter sessions by known AI user-agent strings, such as `ChatGPT-User`, `PerplexityBot`, `Claude-Web`, and `GPTBot`. With the Google Analytics MCP server active, Claude can pull and analyze this data directly in conversation.

- What is citation decay, and how quickly does it happen?

  Citation decay refers to the rapid loss of AI citations when an LLM finds a fresher or more authoritative source. Research indicates that 50% of the content cited in AI search responses is less than 13 weeks old, requiring practitioners to frequently update statistics and refresh content to maintain visibility (Source: Frase).

- Is the 15-minute dashboard approach realistic for non-technical SEOs?

  The MCP server setup requires some technical configuration (API keys, local server processes). However, once configured, the Claude Cowork prompt-to-dashboard workflow is genuinely non-code. Non-technical SEOs should plan for a one-time 1–2 hour setup investment, after which iteration is prompt-driven.

- Can I build this dashboard without a paid SE Ranking subscription?

  The SE Ranking MCP requires an active SE Ranking account (plans from $119/month). However, you can build a partial version using only the free Google Analytics MCP and the open-source mcp-gsc GSC connector, tracking AI bot traffic and organic performance without the full AI citation visibility layer.