The AI Slop Loop, Google's New Spam Weapons, and DSA's Final Days

How AI hallucinations become cited 'facts' within 24 hours. Plus: Google spam reports now trigger manual actions, and Dynamic Search Ads sunset in September 2026.

900 million ChatGPT users. 2 billion AI Overview users. One self-reinforcing misinformation cycle that turns fabricated details into cited sources overnight. Plus: Google spam reports can now trigger manual actions, and Dynamic Search Ads sunset in September 2026.

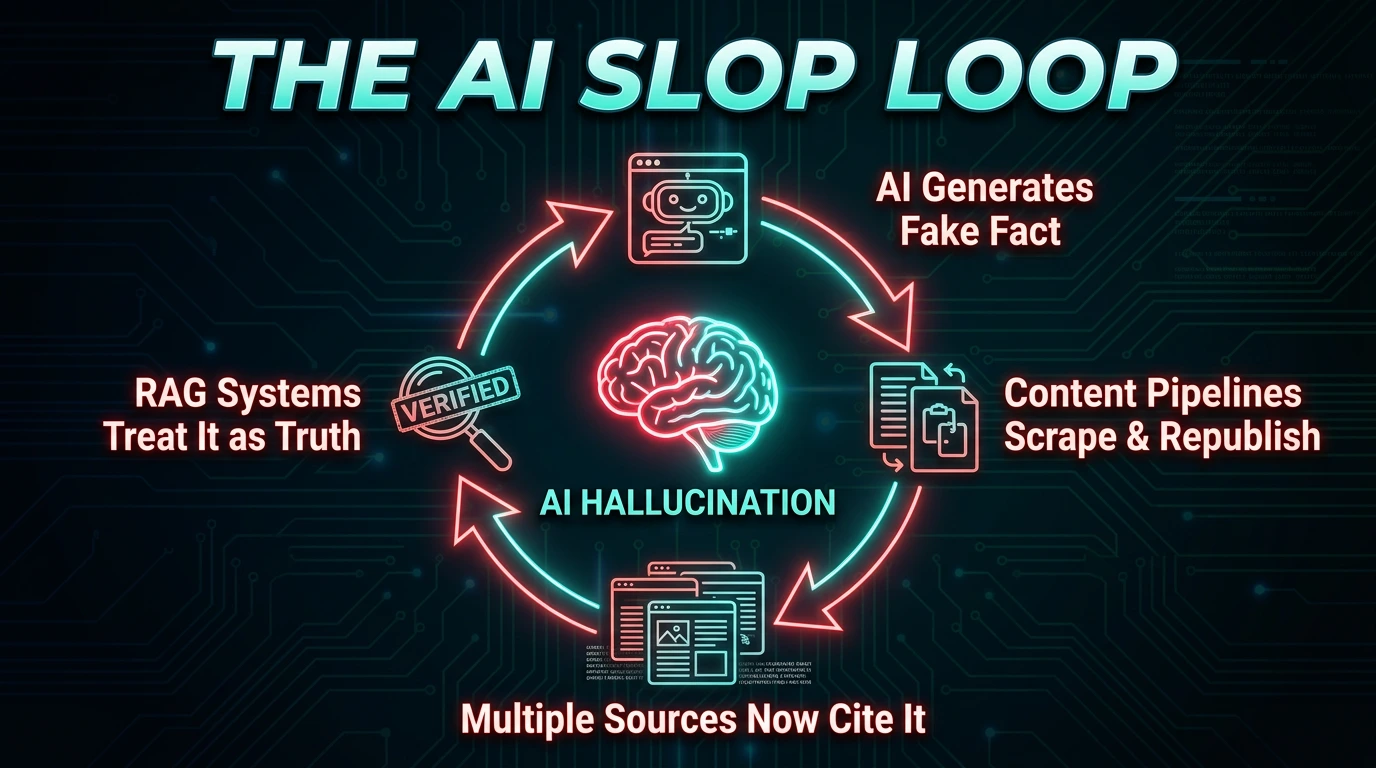

The AI Slop Loop: AI's self-reinforcing misinformation crisis

Lily Ray, founder of Algorythmic and one of the SEO industry's most cited AI search researchers, has published a detailed investigation into what she calls "the AI Slop Loop": a self-reinforcing misinformation cycle that may be the most dangerous unintended consequence of the AI search revolution. Her findings, published April 15, 2026 in Search Engine Journal, document how AI systems are now feeding each other fabricated information at a scale and speed that makes manual fact-checking impossible.

The core problem is structural, not incidental. When one AI system hallucinates a detail (a fake algorithm update, an invented statistic, a fabricated expert quote), AI-powered content pipelines scrape and republish it. More AI scrapers pick up those copies. Within hours, retrieval-augmented generation (RAG) systems like Google AI Overviews and Perplexity encounter multiple "sources" citing the same fabrication and treat it as established fact. The hallucination has become a citation.

What makes the AI Slop Loop qualitatively different from traditional misinformation is its velocity and automation. Human-driven misinformation spreads through social sharing and editorial decisions, processes that introduce friction, delay, and opportunities for fact-checking. The AI Slop Loop operates at machine speed. A fabricated detail can move from hallucination to indexed "source" to AI Overview citation in under 24 hours, without a single human making a conscious decision to amplify it.

Anatomy of a hallucination: from fabrication to citation in 24 hours

Ray's investigation traces the lifecycle of a specific, fully documented fabrication: the "September 2025 Perspectives Update." This Google algorithm update never happened. It does not exist. Yet as of April 2026, multiple AI systems will confidently describe it, cite sources for it, and explain its impact on rankings.

The fabrication originated from AI-generated content on SEO agency blogs: sites running automated content pipelines that publish AI-written articles about algorithm changes. One or more of these systems hallucinated the "September 2025 Perspectives Update," complete with invented details about how it "shifted how search results are ranked." Other AI content pipelines scraped and republished variations of this claim, each adding their own hallucinated specifics.

Ray discovered the fabrication when she asked Perplexity about recent SEO and AI news after returning from a work summit in Austria. Perplexity cited two sources for the phantom update, both fabricated, both from AI-generated content farms. When she flagged the issue publicly, Perplexity's CEO engaged with her concerns on X/Twitter, but the underlying problem persists: RAG systems cannot distinguish between real and fabricated sources when multiple AI-generated pages agree on the same false claim.

Why RAG systems are structurally vulnerable

The mechanism that makes RAG-based systems like Perplexity and Google AI Overviews vulnerable is citation counting. These systems work by retrieving web pages that appear relevant to a query, then synthesizing the information they find. When multiple retrieved pages agree on a claim, the system treats agreement as evidence of accuracy. This works well when the sources are independently researched human-written content. It fails catastrophically when the "sources" are AI-generated copies of the same hallucination. This mirrors the AI Overviews CTR collapse we analyzed in the gambling SEO sector, where AI-generated answers replaced traditional click-through paths entirely.

| AI system | Accuracy rate | Ungrounded responses | Key vulnerability |
|---|---|---|---|
| Google AI Overviews (Gemini 2) | 91% | 37% | Citation-based RAG, improving |
| Google AI Overviews (Gemini 3) | 91% | 56% | Higher ungrounded rate despite accuracy |
| Perplexity | Not independently tested | Unknown | RAG with multi-source citation counting |
| ChatGPT GPT-5.4 (paid) | Baseline | N/A | 6-round reasoning helps filter slop |
| ChatGPT GPT-5.3 (free) | 26.8% more hallucinations than GPT-5.4 | N/A | Weaker reasoning, broader reach |
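To make the citation-counting failure concrete, here is a minimal Python sketch. It does not describe how Google AI Overviews or Perplexity actually score sources, since their internals are not public. The function names, the 0.6 similarity threshold, and the sample pages are all illustrative assumptions. It contrasts naive agreement counting with a count that first collapses near-duplicate pages using word-shingle Jaccard similarity.

```python
from typing import List, Set, Tuple

Shingle = Tuple[str, ...]


def shingles(text: str, size: int = 3) -> Set[Shingle]:
    """Return the set of overlapping word n-grams (shingles) in a text."""
    words = text.lower().split()
    if len(words) < size:
        return {tuple(words)}
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a: Set[Shingle], b: Set[Shingle]) -> float:
    """Jaccard similarity: size of the overlap divided by size of the union."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def naive_support(pages: List[str]) -> int:
    """Naive citation counting: every retrieved page counts as one source."""
    return len(pages)


def independent_support(pages: List[str], threshold: float = 0.6) -> int:
    """Count pages after greedily collapsing near-duplicates into one source.

    The threshold is a hypothetical value chosen for illustration only.
    """
    representatives: List[Set[Shingle]] = []
    for page in pages:
        signature = shingles(page)
        if not any(jaccard(signature, rep) >= threshold for rep in representatives):
            representatives.append(signature)
    return len(representatives)


# Hypothetical retrieved pages: three lightly reworded copies of one hallucination.
pages = [
    "the september 2025 perspectives update shifted how search results are ranked for all sites",
    "the september 2025 perspectives update shifted how search results are ranked for most sites",
    "the september 2025 perspectives update shifted how search results are ranked for all sites today",
]

print(naive_support(pages))        # 3: every copy counted as a separate source
print(independent_support(pages))  # 1: the copies collapse into a single source
```

Deduplication only removes copies. It says nothing about whether the surviving source is true, so a single fabricated page in a data void would still pass this check.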

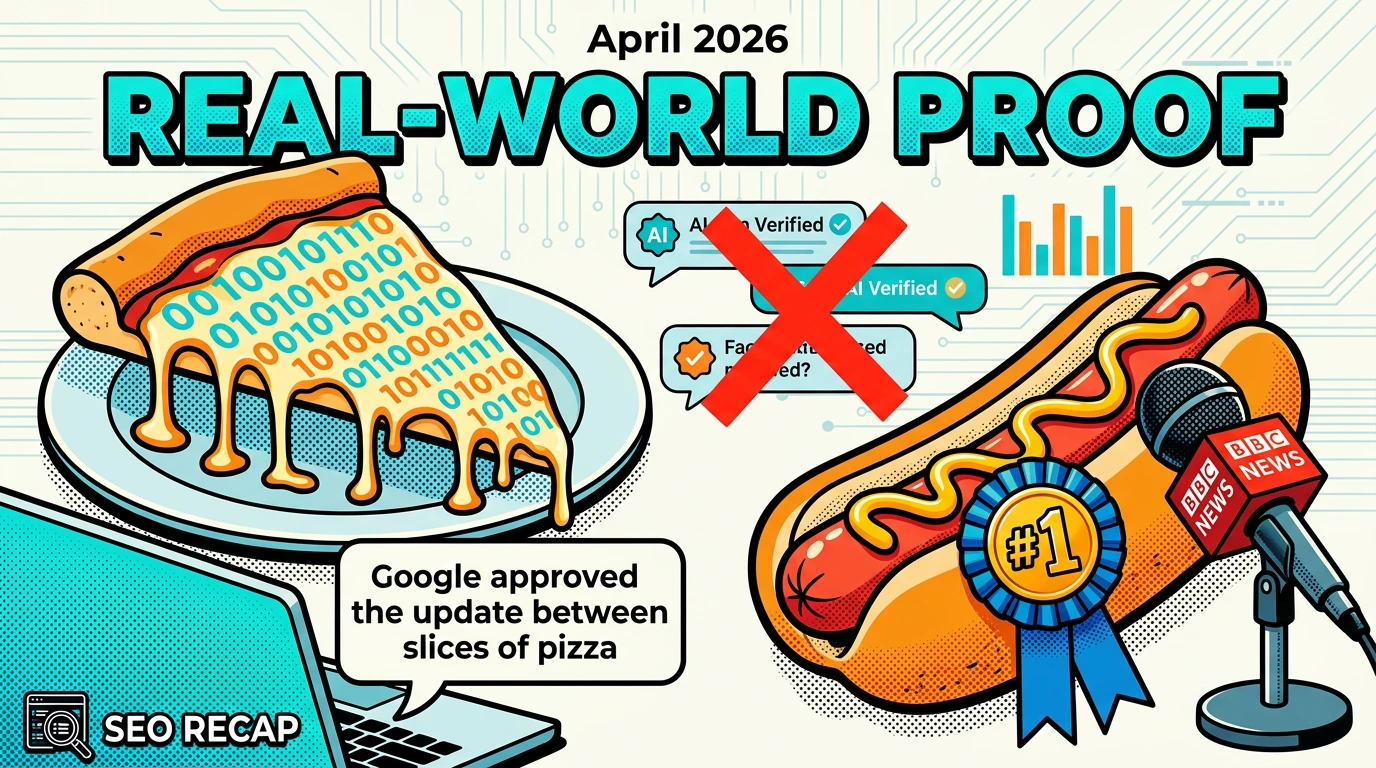

Real-world proof: pizza, hot dogs, and a phantom algorithm update

The pizza test (January 2026)

In January 2026, Ray published a deliberately false article claiming that Google "approved the update between slices of leftover pizza." The claim was absurd, easily falsifiable, and published on a single page. Within 24 hours, Google AI Overviews served this fabrication to users. The AI Overview didn't just repeat the pizza claim; it connected the fabricated detail to real 2024 incidents where Google had problems with pizza-related queries, weaving fiction into factual context in a way that made the false claim appear more plausible.

ChatGPT also surfaced the information, though it flagged an inconsistency with Google's formal announcements, a meaningful difference from the AI Overview response, which presented the fabrication without qualification. Ray deleted the article after observing the misinformation circulating via RSS feeds and AI content scrapers, but the damage (and the demonstration) was done.

The BBC hot dog test

BBC journalist Thomas Germain ran a parallel experiment. He published a fictitious article ranking journalists by their hot-dog-eating ability, listing himself as "#1 best." Within 24 hours, Google's Gemini app, Google AI Overviews, and ChatGPT all repeated the claim as fact. Anthropic's Claude was the only major AI system that was not fooled by the fabrication, a data point worth tracking as these systems compete on reliability.

Both experiments demonstrate the same structural failure: AI systems will cite a single source as fact if it appears relevant to the query and isn't contradicted by their training data. The threshold for "enough evidence" in RAG systems is dangerously low. A single published webpage, if it covers a topic where few competing sources exist (a "data void"), can become the authoritative answer within hours.

The March 2026 core update slop tsunami

Ray also documented widespread AI-generated misinformation during the March 2026 core update rollout. Multiple AI-generated articles claimed to identify "winners and losers" while the update was still rolling out, before meaningful data could exist. These articles contained vague filler without substance, listed supposed winners and losers without citing specific sites or data sources, and featured AI-generated images and AI support chatbots. Trusted authorities like Glenn Gabe and Aleyda Solis, whom Ray identifies as reliable core update analysts, provide the contrast: their analyses cite specific sites, reference concrete data, and wait for sufficient rollout time before drawing conclusions.

The accuracy divide: paid AI vs. free tier performance

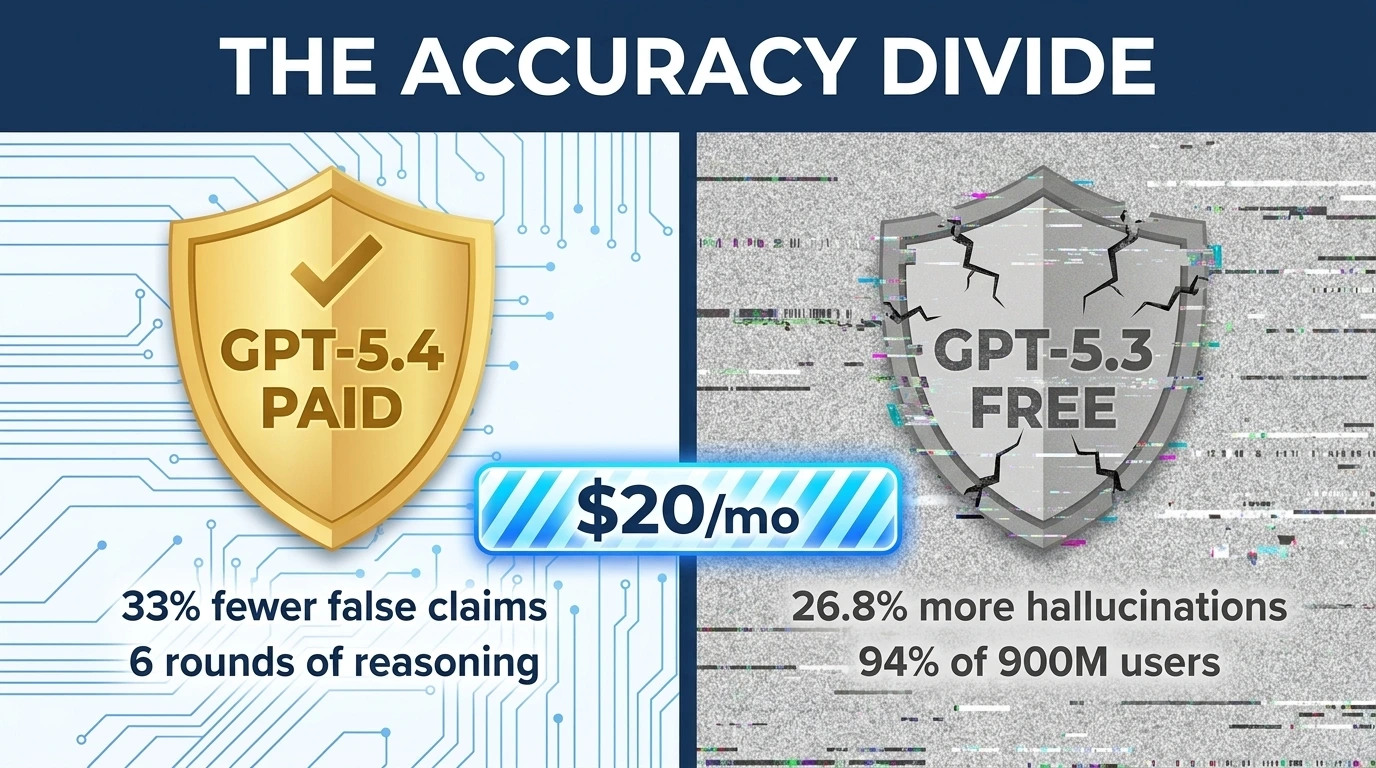

One of the most consequential findings in Ray's analysis is the widening accuracy gap between paid and free AI tiers, a gap that has direct implications for SEO practitioners and the broader information ecosystem.

The inequality problem

This creates a two-tier information economy. Users who can afford $20+/month for ChatGPT Plus receive materially more accurate information. The 94% of users on free tiers, plus the 2+ billion users of Google AI Overviews, which is free, receive information that is more likely to contain or amplify hallucinations. The burden of fact-checking falls on the user, but the users most likely to encounter misinformation are the ones least likely to have paid verification tools.

For SEO practitioners, this means that AI-assisted research conducted on free tiers carries higher risk. If you're using free ChatGPT or AI Overviews to research algorithm changes, competitive landscapes, or technical SEO guidance, the probability that you're receiving AI-amplified misinformation is measurably higher than if you use paid tools with advanced reasoning capabilities. This compounds the LLM bot crawling crisis we covered earlier, where bots are now out-crawling Googlebot, generating content at scale that feeds back into the slop loop.

Cross-reference every AI-generated SEO claim against primary sources: Google's official Search Central blog, documented statements from Google employees (Mueller, Illyes, Splitt), and data from established tracking platforms (Sistrix, SEMrush, Ahrefs). If an AI tells you about an algorithm update, a ranking factor change, or a new Google feature, verify it exists before acting on it. The cost of acting on a hallucination, such as rebuilding a strategy around a phantom update, vastly exceeds the cost of spending five minutes on verification.
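One way to make that cross-check routine is to flag any claim whose citations include no primary source. The sketch below is illustrative only. The allowlist is an assumption you should replace with the sources you actually trust, the function names are hypothetical, and the sample URLs are made up. A claim that passes still needs a human to read the cited page.

```python
from typing import Iterable
from urllib.parse import urlparse

# Assumption: an illustrative allowlist, not an official or complete one.
PRIMARY_SOURCE_DOMAINS = {
    "developers.google.com",  # hosts Google Search Central documentation
    "blog.google",            # Google's official company blog
}


def is_primary_source(url: str) -> bool:
    """True when the URL's host is an allowlisted domain or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    return any(
        host == domain or host.endswith("." + domain)
        for domain in PRIMARY_SOURCE_DOMAINS
    )


def needs_verification(cited_urls: Iterable[str]) -> bool:
    """A claim needs manual checking when none of its citations is a primary source."""
    return not any(is_primary_source(url) for url in cited_urls)


# Hypothetical citations returned by an AI assistant for an "algorithm update".
citations = [
    "https://seo-agency.example/september-2025-perspectives-update",
    "https://content-farm.example/perspectives-update-winners-losers",
]

if needs_verification(citations):
    print("No primary source cited: verify before acting on this claim.")
```

Matching on the parsed hostname rather than on a substring of the URL avoids being fooled by lookalike addresses that merely contain a trusted domain name.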

Google's spam report overhaul: manual actions now on the table

In a significant policy reversal disclosed on April 15, 2026, Google has updated its spam report documentation to confirm that spam reports submitted by webmasters and SEOs can now directly trigger manual actions against violating websites. This is a meaningful shift from Google's previous stance and has immediate practical implications for the SEO industry.

What changed

Google's previous language explicitly stated that spam reports would not be used to take direct action against specific sites; they would only be used to improve Google's automated spam detection systems. The new language is unambiguous: Google may now use reports to take manual action against violations, and the full text of submissions is sent verbatim to the site owner to help them understand the context.

Two critical details stand out. First, the reporter's identity remains anonymous: Google does not disclose who submitted the report. Second, spam reports now serve a dual purpose: they continue feeding Google's automated detection improvements while also potentially triggering immediate manual review and action. This expands on the back button hijacking spam policy that Google rolled out the same week, signaling a broader crackdown on manipulative practices.

| Aspect | Previous policy | New policy (April 2026) |
|---|---|---|
| Manual actions from reports | Explicitly excluded | Now possible |
| Reporter anonymity | Anonymous | Still anonymous |
| Report text sharing | Not shared with site owner | Sent verbatim to site owner |
| System improvement | Yes | Yes, dual purpose |

Why this matters for practitioners

For years, many SEOs viewed Google's spam report form as a dead letter: a feedback mechanism that fed algorithmic improvements but never resulted in direct action against specific competitors gaming the system. This update changes the calculus. Spam reports are now a legitimate enforcement tool, not just a suggestion box.

The timing is notable. As AI-generated spam content proliferates (see: the AI Slop Loop above), Google may be acknowledging that its automated systems alone cannot keep pace with the volume and sophistication of AI-generated spam. Crowdsourcing enforcement through practitioner reports adds a human intelligence layer to spam detection that automated systems lack.

When submitting spam reports, write detailed, specific descriptions of the violation: include the spam technique being used, the affected queries, and why the content violates Google's policies. Since your report text is now sent verbatim to the site owner, treat each report as a professional document. Avoid emotional language, competitive grievances, or personal information. Focus on documenting the policy violation with evidence.

Dynamic Search Ads are dead: AI Max takes over September 2026

Google has begun the formal deprecation of Dynamic Search Ads (DSA), one of the oldest automated ad formats in Google Ads. The replacement, AI Max for Search, represents Google's latest push to centralize campaign automation under a single AI-powered system. The migration timeline is aggressive: upgrade tools are rolling out now, automatic upgrades begin in September 2026, and by the end of September all eligible DSA campaigns will be migrated.

What's being deprecated

Google is consolidating three legacy features into AI Max:

| Legacy feature | AI Max replacement | Default migration settings |
|---|---|---|
| Dynamic Search Ads (DSA) | AI Max full suite | All three AI Max features enabled |
| Automatically Created Assets (ACA) | Search term matching + text customization | Two features enabled |
| Campaign-level broad match | Search term matching | One feature enabled |

The migration timeline

Now (April 2026): One-click upgrade tools are available for voluntary migration. Google recommends testing via one-click experiments before committing to full rollout.

September 2026: Automatic upgrades begin for remaining eligible campaigns that haven't voluntarily migrated.

End of September 2026: All eligible campaigns expected to be fully migrated. DSA creation disabled across all interfaces.

SEO implications

While DSA's deprecation is primarily a paid search story, it has indirect SEO implications. AI Max's final URL expansion feature means Google's AI will increasingly determine which landing pages to serve for which queries, further reducing advertiser (and by extension, webmaster) control over query-to-page matching. For sites that coordinate paid and organic strategies, understanding how AI Max maps queries to landing pages will become essential for avoiding cannibalization. This connects to the broader shift toward agentic search and Google asserting its own judgment over publisher intent, including on canonical tag decisions.

If you manage both SEO and PPC for the same properties, coordinate with your paid search team on the AI Max migration. AI Max's final URL expansion may route paid traffic to pages that your SEO strategy targets organically. Map the overlap now, before September, to ensure your paid and organic efforts complement rather than compete with each other.
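A first pass at mapping that overlap can be a plain set intersection. The sketch below assumes you have already exported two URL lists yourself: landing pages that received paid traffic, and pages your SEO strategy targets. It calls no Google Ads API. The function names and sample URLs are hypothetical, and lowercasing the whole URL is a simplification that would merge case-sensitive paths.

```python
from typing import Iterable, List
from urllib.parse import urlsplit


def normalize(url: str) -> str:
    """Reduce a URL to host + path so query strings and trailing slashes don't hide matches."""
    parts = urlsplit(url.strip().lower())
    path = parts.path.rstrip("/") or "/"
    return f"{parts.netloc}{path}"


def landing_page_overlap(paid_urls: Iterable[str], organic_urls: Iterable[str]) -> List[str]:
    """Return the normalized pages that appear in both the paid and organic lists."""
    paid = {normalize(url) for url in paid_urls}
    organic = {normalize(url) for url in organic_urls}
    return sorted(paid & organic)


# Hypothetical exports: paid landing pages and organic target pages.
paid_landing_pages = [
    "https://example.com/pricing/?utm_source=google",
    "https://example.com/demo",
]
organic_target_pages = [
    "https://example.com/pricing",
    "https://example.com/blog/ai-max-guide",
]

print(landing_page_overlap(paid_landing_pages, organic_target_pages))
# ['example.com/pricing']
```

Pages in the result are the ones to review with the paid search team first, since they are where paid and organic traffic can compete for the same queries.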

What to do this week

| Action | Priority | Who |
|---|---|---|
| Establish a verification protocol: cross-check every AI-generated SEO claim against Google Search Central, confirmed Google employee statements, and Sistrix/SEMrush/Ahrefs data before acting on it | High | All SEO practitioners |
| Audit your content pipeline for AI slop: check if any published content cites algorithm updates or stats that can't be traced to a primary source | High | Content / Editorial |
| Submit detailed spam reports for AI-generated spam competitors; reports can now trigger manual actions | Medium | Technical SEO |
| Pull DSA baseline performance data before AI Max migration changes your reporting | High | PPC / Paid Search |
| Begin voluntary AI Max migration testing via one-click experiments | Medium | PPC / Paid Search |
| Map paid/organic landing page overlap before AI Max's final URL expansion goes live | Medium | SEO + PPC coordination |

Frequently asked questions

What is the AI Slop Loop in SEO?

The AI Slop Loop is a self-reinforcing misinformation cycle where one AI system hallucinates a detail, AI-powered content pipelines scrape and republish it, additional AI scrapers pick up the copies, and retrieval-augmented generation (RAG) systems like Google AI Overviews and Perplexity then cite the fabricated information as fact because it now has multiple "sources." Lily Ray documented cases where completely fabricated Google algorithm updates were being cited as real within 24 hours of the initial hallucination.

How fast can AI misinformation spread through search engines?

Within 24 hours. In documented tests by Lily Ray (January 2026) and BBC journalist Thomas Germain, fabricated information published on a single webpage was picked up and repeated by Google AI Overviews, ChatGPT, and the Gemini app within one day. The speed is driven by AI content pipelines that scrape, rewrite, and republish content automatically, creating enough citations for RAG systems to treat the fabrication as established fact.

What is the accuracy difference between paid and free AI models?

GPT-5.4 (paid tier) produces 33% fewer false claims and 18% fewer full response errors compared to GPT-5.2. GPT-5.3 (free tier) generates 26.8% more hallucinations than GPT-5.4 with web search enabled. A New York Times study found Google AI Overviews were accurate 91% of the time, but 56% of those correct responses were "ungrounded": the cited sources did not fully support the information presented.

Can Google spam reports now trigger manual actions?

Yes. As of April 2026, Google updated its spam report policy to confirm that reports can trigger manual actions. Reports remain anonymous, but Google now sends the submission text verbatim to the site owner. This makes spam reports a legitimate enforcement tool for the first time, not just a suggestion box for improving automated detection.

When are Dynamic Search Ads being deprecated?

Upgrade tools are rolling out now (April 2026). Automatic upgrades to AI Max begin in September 2026, and by end of September all eligible DSA campaigns will be migrated. After September, advertisers cannot create new DSA campaigns through any interface. Google reports AI Max campaigns see an average 7% more conversions at similar CPA or ROAS.

What percentage of ChatGPT users are on the free tier?

Approximately 94%. ChatGPT has 900 million weekly active users with roughly 50 million paying subscribers. This matters because free-tier models (GPT-5.3) produce 26.8% more hallucinations than paid models (GPT-5.4). The vast majority of users interacting with AI search are receiving less accurate results, which amplifies the AI Slop Loop.
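The 94% figure follows directly from the two numbers quoted in this answer. A quick check in Python, using only those figures (the variable and function names are illustrative):

```python
weekly_active_users = 900_000_000  # ChatGPT weekly active users, as quoted above
paying_subscribers = 50_000_000    # "roughly 50 million" paying subscribers, as quoted above


def free_tier_share(total_users: int, paying_users: int) -> float:
    """Fraction of users who are not paying subscribers."""
    return (total_users - paying_users) / total_users


share = free_tier_share(weekly_active_users, paying_subscribers)
print(f"{share:.1%}")  # 94.4%
```

Because the subscriber count is itself approximate, the result is best read as "about 94%" rather than as a precise measurement.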

How does GPT-5.4 filter out AI-generated misinformation?

GPT-5.4 uses a thinking model with six rounds of internal reasoning. It filters low-quality information by limiting searches to authoritative sources, appending known expert names to queries, and running site-specific searches against trusted domains. This multi-step verification approach is structurally resistant to the AI Slop Loop because it evaluates source authority rather than simply counting how many pages agree on a claim.