March 2026 Core Update Aftermath, Ask Maps Revolution, and the 11-Month GSC Bug

Deep analysis of the March 2026 core update winners and losers, Google's Ask Maps Gemini-powered local search, the 11-month GSC impressions bug, Googlebot's 2MB ceiling, Universal Commerce Protocol onboarding, and Mueller on outbound link spam.

Watch the Short Before You Read

The three biggest stories of the week in under a minute: the core update, Ask Maps, and the 11-month GSC bug.

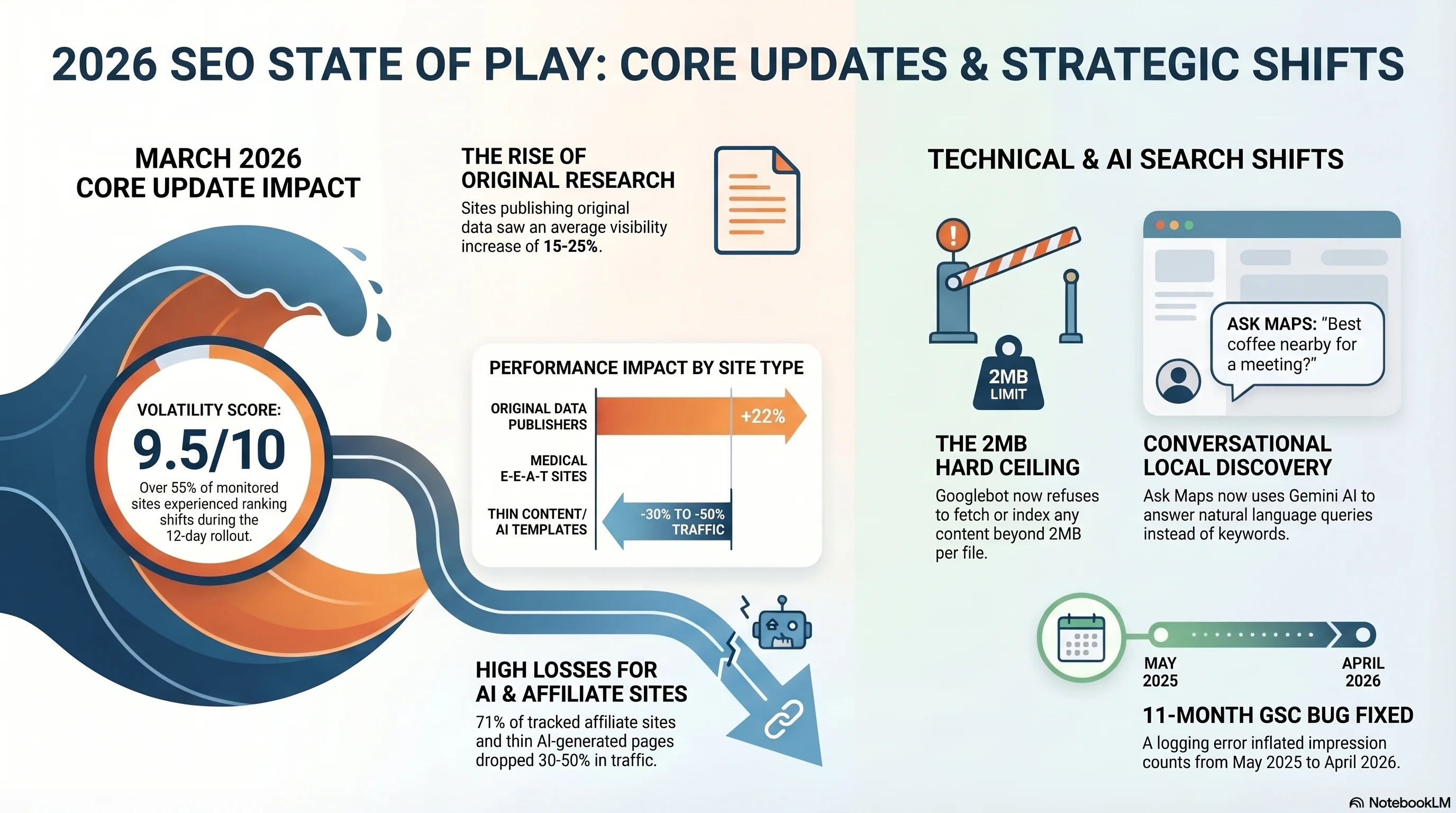

1. March 2026 Core Update: The Full Impact Report

The March 2026 core update, Google's first broad core update of the year, began rolling out on March 27 and completed on April 8, spanning 12 days and 4 hours. Google described it as "a regular update" and did not publish a companion blog post or announce specific goals, yet the data paints a picture of anything but routine.

Who Won, and Why

The clearest pattern among sites that gained visibility is original, first-party research. According to analysis covering more than 600,000 pages, sites publishing original datasets saw average visibility increases of approximately 22%. This reflects Google's intensified weighting of what SEO practitioners call "Information Gain": a measure of how much genuinely new knowledge a page adds compared to the existing top-ranking results for the same query.

Medical and health sites with board-certified contributor networks, peer-reviewed sourcing practices, and transparent editorial review processes saw especially strong gains, with some reporting 15–25% visibility improvements. The common thread across winning sites is demonstrable expertise tied to verifiable credentials and first-hand experience.

News publishers also saw notable gains. According to Sistrix data, The Guardian, Money Saving Expert, Substack, and The New York Times were among the biggest winners from this update. All are sites with strong editorial identity and original reporting practices.

Who Lost, and Why

Affiliate comparison sites and thin content aggregators were hit hardest. Data from multiple tracking platforms shows that 71% of affiliate sites monitored experienced negative visibility changes, with thin content and templated AI-generated pages dropping between 30% and 50% in organic visibility. HubSpot's blog, which had published at volume across topics well outside its core expertise, reportedly lost an estimated 70–80% of organic traffic, a case study in how topical authority failures compound during core updates.

| Category | Visibility Change | Key Factor |
|---|---|---|
| Original Research Sites | +15% to +25% | Information Gain, first-party data |
| Medical E-E-A-T Sites | +15% to +25% | Board-certified authors, peer-reviewed sources |
| Original Data Publishers | +22% average | Unique datasets and analysis |
| Quality News Publishers | Modest gains | Editorial identity, original reporting |
| Affiliate Comparison Sites | -30% to -50% | Thin content, templated output |
| Off-Topic Volume Publishers | -70% to -80% | Topical authority dilution |
| AI-Generated Template Content | -30% to -50% | No Information Gain, commodity content |
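Google has not published how it scores Information Gain, so it cannot be measured directly. As a rough self-audit, though, you can estimate how much of a draft's wording is absent from the pages that already rank. The sketch below is only a crude lexical proxy based on word shingles, not Google's method, and the function names `shingles` and `novelty_ratio` are hypothetical.

```python
import re


def shingles(text: str, size: int = 3) -> set[tuple[str, ...]]:
    """Return the set of lowercase word n-grams (shingles) found in text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def novelty_ratio(candidate: str, competitors: list[str], size: int = 3) -> float:
    """Share of the candidate's shingles that appear in none of the competitor texts.

    This is a lexical overlap heuristic only. It says nothing about whether
    the new wording carries new facts, data, or analysis.
    """
    own = shingles(candidate, size)
    if not own:
        return 0.0
    seen: set[tuple[str, ...]] = set()
    for text in competitors:
        seen |= shingles(text, size)
    return len(own - seen) / len(own)


if __name__ == "__main__":
    draft = "Our survey of independent retailers found average margins fell last quarter"
    ranking_pages = ["Average margins fell last quarter according to industry reports"]
    print(f"{novelty_ratio(draft, ranking_pages):.0%} of the draft's shingles are new")
```

A low ratio suggests the draft mostly rephrases what already ranks. A high ratio only shows that the wording differs, so it is a prompt for editorial review rather than a score to optimize.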

Practitioner Insight

This update marks a pivot point where E-E-A-T has shifted from a content quality guideline to a measurable ranking factor with direct, observable impact on positions. If your content strategy relies on volume-first publishing across peripheral topics, this update is a clear signal to consolidate around areas where you can demonstrate genuine expertise.

What Changed Under the Hood

Two algorithmic shifts stand out. First, Information Gain scoring received significant amplification. Google now appears to actively reward pages that introduce data, perspectives, or analysis not present in competing content, not just pages that comprehensively cover a topic. Second, the update brought stronger entity-based authority signals, meaning that Google is increasingly connecting content quality to the verifiable expertise of named authors and organizations rather than relying solely on domain-level authority metrics.

For practitioners, the implication is clear: the bar for ranking with commodity content has risen substantially. Pages that merely rephrase what already exists in the top ten results face an uphill battle that will only steepen with each successive update. This is exactly the gap a disciplined content marketing program is built to close: original research, primary data, and named-author expertise.

2. Ask Maps: How Gemini AI Is Rewriting Local Search

Google's Ask Maps feature, powered by Gemini AI, has moved beyond limited testing and is now available to all users in the United States and India on both iOS and Android, with desktop availability expected to follow. This is not a minor interface refresh; it represents a fundamental shift in how users discover local businesses.

What This Means for Local SEO

Ask Maps processes queries with a level of nuance that keyword-based search never could. When a user asks for "a coffee shop with a cozy vibe," Gemini interprets "cozy" based on that user's saved places, previous likes, and behavior patterns. The system creates a personalized definition of ambiguous qualitative terms, making review sentiment, photo quality, and business attribute completeness far more important than they have ever been.

For businesses optimizing their local presence, the practical priorities are shifting. Traditional local SEO focused on Google Business Profile completeness, citation consistency, and review quantity. Ask Maps adds three new dimensions that practitioners must now address, the same dimensions we prioritize in every local SEO engagement.

First, review depth and sentiment diversity: Gemini reads and synthesizes the actual content of reviews, not just star ratings. Businesses whose reviews describe specific experiences, ambiance details, and use-case scenarios will surface more frequently for conversational queries. Second, photo quality and variety: Ask Maps uses uploaded photos to validate and enrich its understanding of a business. Interior shots, product photos, and atmosphere images directly feed the AI's ability to match businesses to subtle requests. Third, structured business attributes: every attribute in your Google Business Profile (amenities, accessibility features, payment methods, hours variations) becomes a potential matching criterion for natural language queries.

Practitioner Insight

Encourage customers to write detailed, descriptive reviews that mention specific experiences rather than generic praise. "Great coffee" helps less than "quiet spot with fast Wi-Fi and plenty of outlets for working." Ask Maps rewards specificity because Gemini matches specific review language to specific user queries.

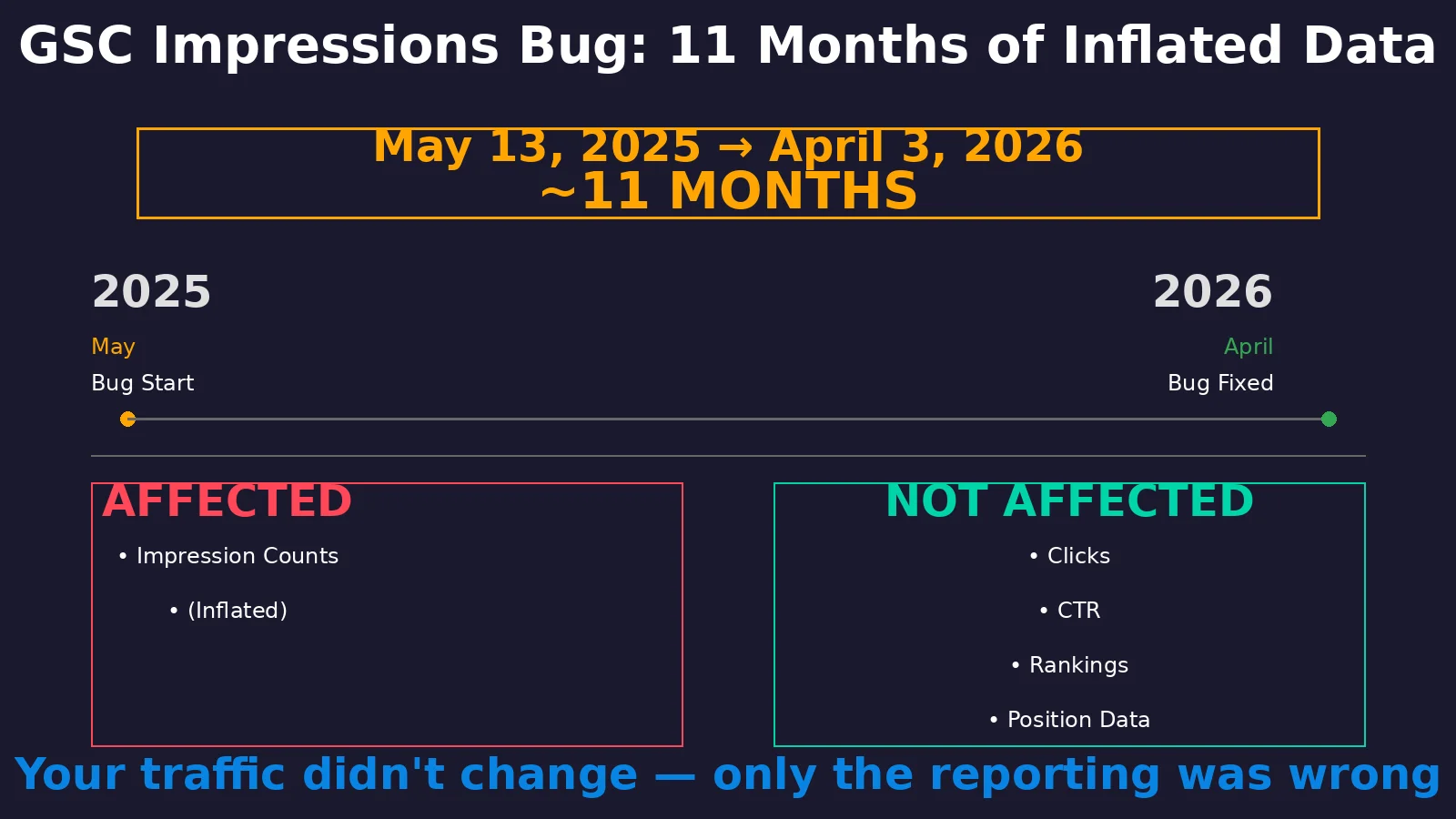

3. The 11-Month GSC Impressions Bug: What Your Data Really Shows

On April 3, 2026, Google officially confirmed what some observant practitioners had suspected: a logging error in Google Search Console had been inflating impression counts for nearly eleven months, dating back to May 13, 2025. The bug was not a minor discrepancy. It systematically over-reported impressions across the entire Performance report.

Data Integrity Alert: If you used GSC impression data between May 2025 and April 2026 for reporting, forecasting, or strategic decisions, those figures were inflated. Click counts, average position, and ranking data were NOT affected. Only raw impression counts were wrong, along with any figure calculated from them, such as reported CTR.

The Timeline

| Date | Event |
|---|---|
| May 13, 2025 | Logging error begins; impressions start being over-reported |
| May 2025 – March 2026 | ~11 months of inflated impression data in GSC Performance reports |
| April 3, 2026 | Google officially confirms the bug and announces fix rollout |
| April 2026 (ongoing) | Corrections rolling out; expect visible impression drops over coming weeks |

What to Do Now

First, audit any reports or dashboards that reference GSC impression data from the affected period. Flag these figures as potentially inflated and add contextual notes for stakeholders. Second, do not panic if you see impression counts drop significantly in the coming weeks. This is the correction, not a ranking decline. Third, use this as an opportunity to diversify your measurement stack. Relying solely on GSC for search visibility metrics creates a single point of failure. Third-party tools like Ahrefs, Semrush, or Sistrix provide independent visibility tracking that can serve as a cross-reference when platform-specific bugs occur.
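The audit step can be partly automated by checking each report's date range against the affected window. The sketch below is a minimal illustration: the start date comes from the timeline, while treating April 3, 2026 as the end of the window is an assumption, since corrections are still rolling out. The names `overlaps_bug_window` and `flag_reports` are hypothetical.

```python
from datetime import date

# Start date from the timeline above. The end date is an assumption:
# it is the day Google confirmed the bug, not a confirmed cutoff.
BUG_START = date(2025, 5, 13)
BUG_END = date(2026, 4, 3)


def overlaps_bug_window(report_start: date, report_end: date) -> bool:
    """True if any day of the report's range falls inside the affected window."""
    return report_start <= BUG_END and report_end >= BUG_START


def flag_reports(reports: dict[str, tuple[date, date]]) -> list[str]:
    """Return the names of reports whose impression figures need a caveat."""
    return [
        name
        for name, (start, end) in reports.items()
        if overlaps_bug_window(start, end)
    ]


if __name__ == "__main__":
    # Hypothetical report inventory for illustration.
    inventory = {
        "Q1 2025 review": (date(2025, 1, 1), date(2025, 3, 31)),
        "FY2025 summary": (date(2025, 1, 1), date(2025, 12, 31)),
        "March 2026 dashboard": (date(2026, 3, 1), date(2026, 3, 31)),
    }
    for name in flag_reports(inventory):
        print(f"Add an impressions caveat to: {name}")
```

A report that only partly overlaps the window is still flagged, because any aggregate that includes inflated days is itself inflated.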

Practitioner Insight

The silver lining: your actual CTR is likely higher than your reports showed during the affected period, since clicks were accurate but impressions were inflated. When the corrections complete, you may see a CTR improvement in reports, not because performance changed, but because the denominator is finally correct.
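The denominator effect is easy to see with numbers. The figures below are hypothetical and chosen only to illustrate the arithmetic. Google has not published a correction factor, so the gap between reported and corrected impressions will differ per property.

```python
def ctr(clicks: int, impressions: int) -> float:
    """Click-through rate as a fraction; returns 0.0 when there are no impressions."""
    if impressions <= 0:
        return 0.0
    return clicks / impressions


if __name__ == "__main__":
    clicks = 500                    # unaffected by the bug
    reported_impressions = 25_000   # hypothetical inflated figure
    corrected_impressions = 20_000  # hypothetical figure after correction

    print(f"Reported CTR:  {ctr(clicks, reported_impressions):.2%}")   # 2.00%
    print(f"Corrected CTR: {ctr(clicks, corrected_impressions):.2%}")  # 2.50%
```

The click count is identical in both lines. Only the denominator changes, which is why a rise in reported CTR after the correction does not indicate a change in performance.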

4. Googlebot's 2MB Hard Ceiling: Page Weight in 2026

Google published a detailed technical blog post on March 31, 2026, accompanied by a Search Off the Record podcast episode in which Martin Splitt and Gary Illyes provided the most explicit documentation yet of Googlebot's crawling architecture and byte limits. The key revelation: Googlebot fetches a maximum of 2MB per individual URL (excluding PDFs), and everything beyond that threshold is completely ignored.

Why This Matters More Than You Think

The average mobile homepage has grown from 845KB in 2015 to 2.3MB in 2025, according to the Web Almanac. Counted with all of its resources, the average mobile page is now heavier than Googlebot's fetch limit. The comparison is not like-for-like, however: the 2MB ceiling applies per file rather than per page, so individual CSS, JavaScript, and image files each have their own limit. Even so, pages with large inline content, excessive boilerplate HTML, or bloated JavaScript bundles may have critical content truncated during indexing.

The per-file distinction is important and has been widely misunderstood. Some site owners believed the 2MB limit applied to the entire page including all resources. It does not. Each resource file (HTML, CSS, JS, images) has its own 2MB ceiling. For the vast majority of websites, this is not a practical concern for individual resource files. The risk zone is large single HTML documents, especially those with extensive inline JavaScript, embedded data, or dynamically generated content blocks that push the raw HTML beyond the limit.

The podcast also confirmed that Googlebot now uses IP addresses associated with Google Cloud rather than exclusively the legacy Googlebot IP ranges. This may affect server-side bot detection rules, WAF configurations, and CDN settings that whitelist Googlebot based on IP address.

Technical Audit Checklist

Check your largest pages using the Chrome DevTools Network tab, filtering by document type, and verify that the HTML response size stays well under 2MB. Pay special attention to pages with server-side rendered content, data tables, product listing pages with inline JSON-LD for many items, and single-page application shells that embed large configuration objects. If any individual HTML document approaches 1.5MB, consider splitting content across multiple pages or lazy-loading below-the-fold sections. The full Google Search Central technical post covers the per-file specifics. For engagements where crawl budget sits alongside Core Web Vitals and schema, a technical SEO advisory is the fastest path to surface risk.

Action Required

Verify that your WAF and CDN configurations are not inadvertently blocking legitimate Googlebot requests from the newer Google Cloud IP ranges. Test with Google's Rich Results Test or URL Inspection tool to confirm Googlebot can still access your pages without interference.
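Both checks in this section, HTML size against the 2MB ceiling and crawler IP verification, can be scripted. The sketch below rests on stated assumptions. It reads "2MB" as 2 × 1024 × 1024 bytes and measures only the response body. It also assumes the IP ranges come from a JSON file shaped like the crawler range lists Google publishes, with `ipv4Prefix` and `ipv6Prefix` entries. The function names are hypothetical, and the sample prefixes are reserved documentation ranges, not real Googlebot addresses.

```python
import ipaddress

LIMIT_BYTES = 2 * 1024 * 1024         # assumption: "2MB" interpreted as 2 MiB
WARN_BYTES = int(1.5 * 1024 * 1024)   # the 1.5MB review threshold from the checklist


def classify_html_size(body: bytes) -> str:
    """Classify a raw HTML response body as 'ok', 'warn', or 'over'."""
    size = len(body)
    if size > LIMIT_BYTES:
        return "over"   # content past the ceiling would be ignored
    if size >= WARN_BYTES:
        return "warn"   # close enough to the ceiling to warrant splitting
    return "ok"


def ip_in_ranges(ip: str, ranges: dict) -> bool:
    """True if ip falls inside any prefix listed in a ranges document.

    `ranges` is assumed to look like:
    {"prefixes": [{"ipv4Prefix": "..."}, {"ipv6Prefix": "..."}]}
    """
    addr = ipaddress.ip_address(ip)
    for entry in ranges.get("prefixes", []):
        cidr = entry.get("ipv4Prefix") or entry.get("ipv6Prefix")
        # Membership is False when address and network versions differ.
        if cidr and addr in ipaddress.ip_network(cidr):
            return True
    return False


if __name__ == "__main__":
    # Placeholder prefixes from the reserved documentation ranges.
    sample_ranges = {
        "prefixes": [
            {"ipv4Prefix": "192.0.2.0/24"},
            {"ipv6Prefix": "2001:db8::/32"},
        ]
    }
    print(classify_html_size(b"<html></html>"))
    print(ip_in_ranges("192.0.2.10", sample_ranges))
```

In practice you would load the current published range file rather than hard-coding prefixes, and compare the result against what your WAF actually allows. IP matching complements, rather than replaces, the URL Inspection test described above.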

5. Universal Commerce Protocol: Google's Agentic Checkout Play

Google has published a self-service onboarding guide for its Universal Commerce Protocol (UCP) in Merchant Center, marking a significant step toward what Google envisions as the future of online commerce: AI agents completing purchases on behalf of users directly within Google surfaces like AI Mode and Gemini.

UCP is an open standard, not a proprietary Google format, designed to enable agentic commerce. In practical terms, this means a user could tell Gemini "order me running shoes under $120 with next-day delivery," and the AI agent would handle product discovery, selection, checkout, and payment through UCP-enabled merchant integrations, without the user ever visiting a traditional product page.

What the Onboarding Guide Covers

The guide walks merchants through three critical integration steps. First, UCP profile configuration within Merchant Center, including mapping your product catalog and setting up checkout endpoints. Second, identity linking, which connects your merchant identity across Google surfaces to enable smooth transaction attribution. Third, checkout API implementation, which involves configuring your backend to handle UCP-initiated transactions including inventory validation, payment processing, and order confirmation. The system supports both REST API and MCP (Model Context Protocol) bindings, and Google provides a sandbox environment for testing before going live. The rollout is currently limited to the United States, with a dedicated UCP integration tab expected to appear in Merchant Center accounts over the coming months. The Merchant API is also coming to Google Ads scripts starting April 22, 2026, enabling programmatic management of commerce data at scale.

Practitioner Insight

UCP represents a potentially existential shift for traditional e-commerce SEO. If users can purchase directly through AI surfaces without visiting product pages, organic product page traffic could decline for participating merchants. The counterbalance: merchants who integrate with UCP gain access to a new conversion channel that competitors may not have. Early adoption matters: product structured data (schema.org Product, Offer, Review markup) and Merchant Center feed quality directly impact whether your products surface in agentic commerce interactions.
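For the structured data side, a minimal sketch of generating schema.org Product and Offer markup as JSON-LD is shown below. It uses only standard schema.org types and properties. The helper name `product_jsonld` and the sample product are hypothetical, and nothing here is specific to UCP itself, whose integration details are covered in Google's onboarding guide.

```python
import json
from typing import Optional


def product_jsonld(
    name: str,
    sku: str,
    price: float,
    currency: str,
    in_stock: bool,
    rating: Optional[float] = None,
    review_count: Optional[int] = None,
) -> str:
    """Build a schema.org Product with a nested Offer, serialized as JSON-LD."""
    availability = "InStock" if in_stock else "OutOfStock"
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "sku": sku,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": f"https://schema.org/{availability}",
        },
    }
    # Only emit rating markup when there is real review data behind it.
    if rating is not None and review_count:
        data["aggregateRating"] = {
            "@type": "AggregateRating",
            "ratingValue": rating,
            "reviewCount": review_count,
        }
    return json.dumps(data, indent=2)


if __name__ == "__main__":
    # Hypothetical product for illustration.
    print(product_jsonld("Trail Running Shoe", "TRS-001", 119.0, "USD", True, 4.6, 212))
```

The output belongs inside a `<script type="application/ld+json">` element on the product page. Keeping price and availability generated from the same source as the Merchant Center feed helps avoid mismatches between the two.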

6. Mueller on Outbound Links: Ignored, Not Penalized

On April 9, 2026, Google's John Mueller provided clarity on a question that has generated persistent anxiety in the SEO community: what happens when a site's outbound links point to low-quality or spammy destinations? Mueller's statement was unambiguous. If Google's systems recognize that a site links outward in ways that are not helpful or aligned with Google's policies, Google may simply ignore all outbound links from that site. The key word is "ignore": not penalize, not devalue the linking site, and not transfer negative signals to the destination sites.

What This Means in Practice

The distinction between "ignored" and "penalized" is technically significant. When Google ignores outbound links from a site, those links cease to pass PageRank or any link equity in either direction. The linking site itself is not penalized for having those links. They are simply removed from Google's link graph calculations. The receiving sites are also not harmed by being linked from a spammy source.

This aligns with Google's longstanding position on negative SEO concerns: Google's algorithms have become sophisticated enough to identify and neutralize spammy link signals without requiring manual intervention from webmasters in most cases. Mueller's statement extends this logic to outbound links: Google can identify when a site's outbound linking pattern is unreliable and stop factoring those links into ranking calculations.

Practitioner Insight

This does not mean outbound link quality is irrelevant. While Google may not penalize you for linking to low-quality destinations, your outbound links still affect user experience and perceived credibility. Moreover, if your site's outbound links get flagged as unhelpful, ALL of your outbound links may be ignored, including ones pointing to legitimate resources. Maintain a clean outbound link profile not to avoid penalties, but to ensure your editorial links retain their value in Google's link graph.
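A first step toward a clean outbound link profile is knowing which domains a page actually links to. The sketch below, using only the Python standard library, counts outbound link targets by hostname. The names `LinkCollector` and `outbound_domains` are hypothetical. As a simplification, it treats any hostname other than the page's own as outbound, so subdomains of your own site are counted too.

```python
from collections import Counter
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collect the href value of every anchor tag in an HTML document."""

    def __init__(self) -> None:
        super().__init__()
        self.hrefs: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def outbound_domains(html: str, page_url: str) -> Counter:
    """Count http(s) links whose hostname differs from the page's own hostname."""
    parser = LinkCollector()
    parser.feed(html)
    own_host = urlparse(page_url).hostname
    counts: Counter = Counter()
    for href in parser.hrefs:
        target = urlparse(urljoin(page_url, href))  # resolve relative links
        if target.scheme in ("http", "https") and target.hostname != own_host:
            counts[target.hostname] += 1
    return counts


if __name__ == "__main__":
    sample = (
        '<a href="/about">About</a>'
        '<a href="https://example.org/study">Study</a>'
        '<a href="https://example.org/data">Data</a>'
        '<a href="mailto:editor@example.com">Email</a>'
    )
    for host, n in outbound_domains(sample, "https://example.com/post").most_common():
        print(host, n)
```

Run across a crawl of your own pages, the aggregated counts give a list of destination domains to review by hand. Deciding which ones are low quality remains an editorial judgment.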

7. Frequently Asked Questions

What changed in the Google March 2026 core update?

The March 2026 core update amplified Information Gain scoring and E-E-A-T signals more aggressively than any previous update. Sites with original research saw 15–25% visibility gains, while thin content and affiliate comparison pages dropped 30–50%. Over 55% of monitored sites experienced ranking shifts during the 12-day rollout from March 27 to April 8, 2026. The Semrush Sensor volatility score hit 9.5 out of 10 at peak.

What is Google Ask Maps and how does it affect local SEO?

Ask Maps is a Gemini AI-powered feature in Google Maps that lets users ask natural language questions instead of keyword searches. It analyzes 300+ million places and 500+ million user reviews to provide conversational, personalized recommendations. For local SEO, review depth and sentiment diversity, photo quality and variety, and structured business attributes all become significantly more important than before.

How long did the Google Search Console impressions bug last?

The GSC impressions logging error ran for approximately 11 months, from May 13, 2025 through early April 2026. Only impression counts were inflated. Click counts, rankings, and position data were not affected. Google confirmed the fix on April 3, 2026, and corrections are rolling out over several weeks. Because CTR is calculated from impressions, CTR figures from this period are unreliable and likely understated.

What is Googlebot's crawling size limit per page?

Googlebot fetches up to 2MB per individual URL (excluding PDFs, which have a 15MB limit), including HTTP headers. Content beyond 2MB is completely ignored: not fetched, not rendered, not indexed. Note that this limit applies per file, not per page. Each resource file (HTML, CSS, JS) has its own 2MB ceiling. The average mobile homepage has grown to 2.3MB in 2025, making this limit increasingly relevant.

What is Google's Universal Commerce Protocol (UCP)?

UCP is an open standard enabling direct purchasing within Google AI surfaces like AI Mode and Gemini. It allows AI agents to complete checkout on behalf of users without visiting traditional product pages. Google published a self-service onboarding guide in Merchant Center covering UCP profile configuration, identity linking, and checkout API implementation. It is currently rolling out in the U.S., with gradual expansion planned.

Does Google penalize sites for outbound links to spam sites?

No. According to John Mueller (April 9, 2026), Google does not treat outbound links as carriers of negative signals. Instead, if a site's outbound linking pattern is misaligned with Google's policies, Google may ignore all outbound links from that site entirely. The links are not penalized. They are simply excluded from Google's link graph calculations, meaning they pass no value in either direction.

Watch the Full Video Breakdown

Extended 5-minute analysis of this week's biggest SEO developments: the March 2026 core update aftermath, Ask Maps, the 11-month GSC bug, and the one-week execution plan.