SEO Pulse April 2026: Core Update Aftermath, the GSC Impressions Bug, and Why LLM Bots Now Out-Crawl Googlebot

Deep analysis of Google's March 2026 core update, the 10-month Search Console impressions bug, LLM bot crawl dominance, and how AI Overviews are reshaping organic CTR. Actionable recovery strategies for every SEO team.

This has been one of the most consequential weeks in SEO since the helpful content update era. Between a completed core update, a nearly year-long data integrity issue in Search Console, and fresh evidence that AI bots are fundamentally reshaping crawl economics, search marketers have a lot to process — and even more to act on.

I went deeper than the headlines to bring you granular analysis, verified timelines, and specific tactical frameworks you can implement starting today. Here's what matters most.

1. March 2026 Core Update: Complete Rollout Analysis and Recovery Roadmap

Google's first core update of 2026 officially completed on April 8, wrapping up a 12-day rollout that began on March 27. But the real story isn't the update itself — it's the unprecedented sequence of algorithmic changes that preceded it and how they compound to reshape the SERP landscape.

The Full Update Sequence Matters

Most coverage focuses on the core update in isolation. That's a mistake. Google deployed three distinct algorithmic changes in close succession, and understanding the sequence is critical for accurate performance attribution.

- Discover Update. Google adjusted how content surfaces in Google Discover feeds, affecting traffic patterns for publishers heavily reliant on Discover.

- Spam Update (March 24–25). Completed in under 20 hours, making it the shortest confirmed spam update in Search Status Dashboard history. It targeted link spam, cloaking, and manipulative redirect patterns.

- Core Update (March 27 – April 8). The main event. Described by Google as "a regular update designed to better surface relevant, satisfying content for searchers from all types of sites." Total rollout: 12 days.

Why this matters: If your traffic dropped between March 24 and April 8, you could be dealing with spam penalties, core quality reassessment, or both. Diagnosing the wrong cause leads to the wrong recovery strategy.

Which Industries and Site Types Were Hit Hardest?

The March 2026 core update applied heightened scrutiny to YMYL (Your Money or Your Life) content categories. Websites in health, finance, legal, and home services verticals experienced the most significant volatility because Google holds this content to the highest E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) standards.

But the deeper pattern goes beyond industry verticals. According to cross-source analysis, the update heavily impacted three specific site archetypes that most coverage has missed:

The Three Site Types Hit Hardest

- Breadth-over-depth publishers. Sites that expanded content production into loosely related topics during the last few years struggled significantly. Google's systems now penalize topical sprawl without demonstrated expertise — publishing 500 articles across 50 topics signals a content farm, not authority.

- Local businesses that went too generic. Local sites that became too broad, detaching from their actual services and geographic focus, lost visibility. A plumber in Denver blogging about general home renovation tips nationwide is the classic example.

- E-commerce with thin category infrastructure. The update exposed vulnerabilities in sites relying on thin category copy, duplicated manufacturer text, and weak filtering experiences. Product pages with no original descriptions and category pages with boilerplate content were particularly affected.

Sites that demonstrated strong first-hand experience signals — author bylines linked to verifiable credentials, original case studies, cited primary data — generally climbed. Sites relying on aggregated, surface-level content without clear expertise signals lost ground.

The Recovery Roadmap

Google's own guidance is to wait at least one full week after completion (meaning after April 15) before drawing conclusions from the data. Your baseline period should be the weeks before March 27, compared against performance after April 8. Here's what to do in the meantime:

Core Update Recovery Checklist

- Audit your E-E-A-T signals page by page. Do your top-traffic pages have author bios with verifiable credentials? Are you citing primary sources or just summarizing other summaries?

- Segment your data by update window. Compare March 24–25 (spam update) separately from March 27 – April 8 (core update). Different drops require different fixes.

- Check for compounding penalties. If both the spam and core updates affected you, address the spam issues first — link cleanup, redirect audits, cloaking checks — before tackling content quality.

- Add original research and first-hand experience. Google's systems increasingly reward content that offers something new: original data, expert interviews, practical case studies.

- Review YMYL content with extra scrutiny. Health, finance, and legal pages need demonstrable expertise. Consider adding expert review badges, citing medical or legal professionals, and linking to authoritative sources.
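The segmentation step in the checklist above can be sketched in Python. This is a minimal sketch, assuming you have exported daily clicks from Search Console into a dictionary keyed by date. The function names `update_window` and `average_clicks_by_window` and the sample figures are hypothetical; the window boundaries are the dates cited in this article.

```python
from datetime import date

# Window boundaries taken from the dates cited in this article.
SPAM_START, SPAM_END = date(2026, 3, 24), date(2026, 3, 25)
CORE_START, CORE_END = date(2026, 3, 27), date(2026, 4, 8)


def update_window(day: date) -> str:
    """Label a date by the update window it falls in."""
    if day < SPAM_START:
        return "baseline"
    if day <= SPAM_END:
        return "spam_update"
    if CORE_START <= day <= CORE_END:
        return "core_update"
    if day > CORE_END:
        return "post_update"
    return "between_updates"  # March 26, the gap between the two rollouts


def average_clicks_by_window(daily_clicks: dict) -> dict:
    """Average daily clicks per window, so windows of different lengths compare fairly."""
    grouped = {}
    for day, clicks in daily_clicks.items():
        grouped.setdefault(update_window(day), []).append(clicks)
    return {label: sum(values) / len(values) for label, values in grouped.items()}


# Hypothetical sample data, for illustration only.
sample = {
    date(2026, 3, 20): 1000,
    date(2026, 3, 21): 1100,
    date(2026, 3, 24): 900,
    date(2026, 3, 30): 800,
    date(2026, 4, 10): 700,
}
print(average_clicks_by_window(sample))
```

Averaging per day rather than summing matters here because the spam window is two days long while the core window is nearly two weeks.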

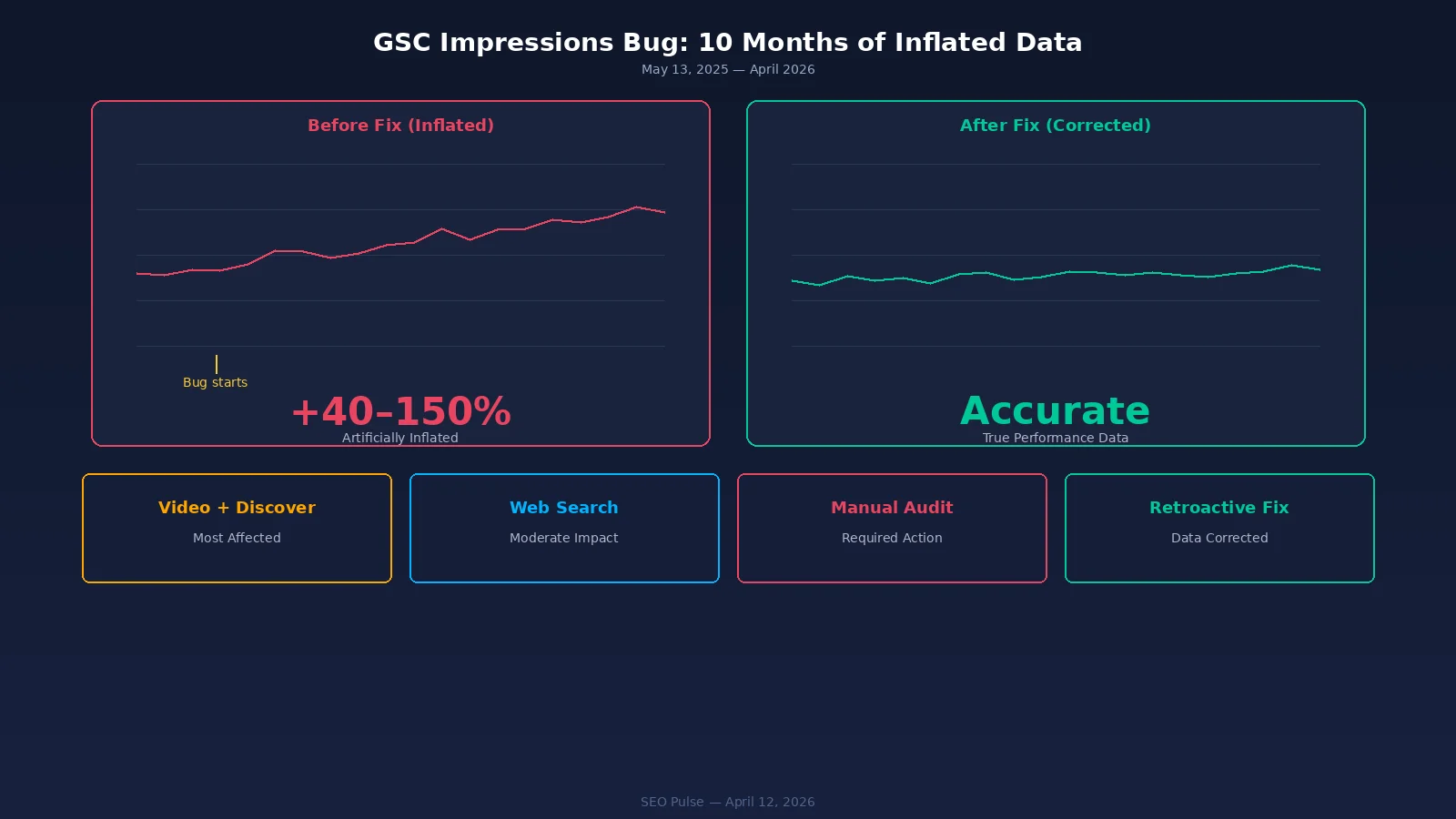

2. The 10-Month GSC Impressions Bug: What Your Data Actually Looked Like

On April 3, Google officially acknowledged what many SEOs had suspected: a logging error had been over-reporting impressions in Search Console since May 13, 2025. That's roughly ten and a half months of inflated data that has been feeding into dashboards, client reports, and strategic decisions across the entire industry.

What Was Affected — and What Wasn't

Google confirmed that clicks and other direct metrics were unaffected. The error was isolated to impression logging. However, this distinction is less reassuring than it sounds, because any metric derived from impressions was corrupted. That includes:

| Metric | Directly Affected? | Impact |
|---|---|---|
| Impressions | Yes, over-reported | Raw impression counts inflated since May 2025 |
| Clicks | No | Click data remained accurate throughout |
| CTR (Click-Through Rate) | Yes, artificially deflated | Higher impression denominator made CTR appear lower than reality |
| Average Position | Potentially skewed | Additional logged impressions may have altered position averages |
| Merchant Listings / Google Images | Yes, particularly affected | eCommerce sites relying on these filters saw the most distortion |

The Ripple Effect on Strategic Decisions

Think about what 10.5 months of deflated CTR data means in practice. Teams that observed declining CTR may have launched optimization campaigns for problems that didn't exist. Content that appeared to be underperforming on CTR may have actually been performing well. A/B test results for title tags and meta descriptions conducted during this period may need to be re-evaluated.
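To see how an inflated denominator deflates CTR, here is a small worked example in Python. It is only a sketch: Google has not published the size of the over-count in anything cited here, so the `inflation` parameter (and the function name `corrected_ctr`) is a hypothetical assumption you would replace once corrected data is available.

```python
def corrected_ctr(clicks: int, reported_impressions: int, inflation: float) -> float:
    """Estimate CTR after removing an assumed share of over-counted impressions.

    `inflation` is hypothetical: 0.25 means reported impressions were
    25% above the true count.
    """
    if reported_impressions <= 0:
        raise ValueError("reported_impressions must be positive")
    if inflation < 0:
        raise ValueError("inflation must be zero or greater")
    true_impressions = reported_impressions / (1 + inflation)
    return clicks / true_impressions


# Hypothetical figures: 400 clicks on 20,000 reported impressions.
reported = 400 / 20_000
estimate = corrected_ctr(400, 20_000, inflation=0.25)
print(f"reported CTR: {reported:.2%}, estimated true CTR: {estimate:.2%}")
```

Because clicks were unaffected, the correction is a simple rescaling: the true CTR is the reported CTR multiplied by one plus the inflation rate.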

Immediate action: Flag all GSC-derived reports and dashboards covering May 2025 through present as potentially containing inflated impression data. Google says corrections will roll out "over the coming weeks," and you'll see impressions decrease as the fix propagates.

GSC Bug Remediation Steps

- Re-baseline your impression data. Once Google's corrections are fully rolled out, establish new baselines using the corrected data. Don't compare corrected data against uncorrected historical data.

- Re-evaluate CTR optimization decisions. If you changed title tags or meta descriptions based on low CTR during the affected period, those changes may have been unnecessary.

- Audit client reporting. If you've been reporting impression growth to clients or stakeholders, prepare communication about the data correction and what it means for previously reported numbers.

- Cross-reference with third-party tools. Compare your GSC impression trends against rank tracking tools and analytics platforms that measure traffic independently.

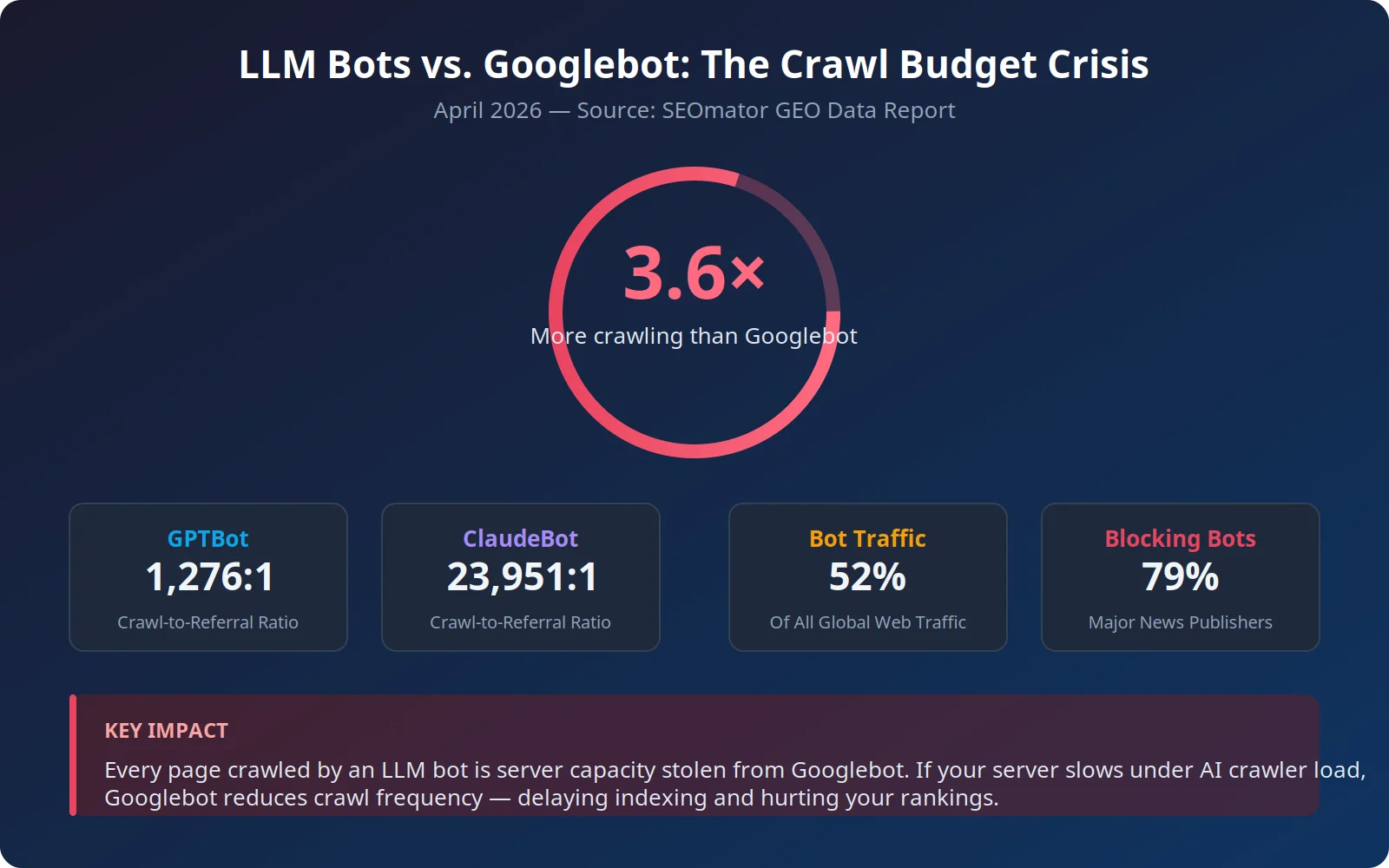

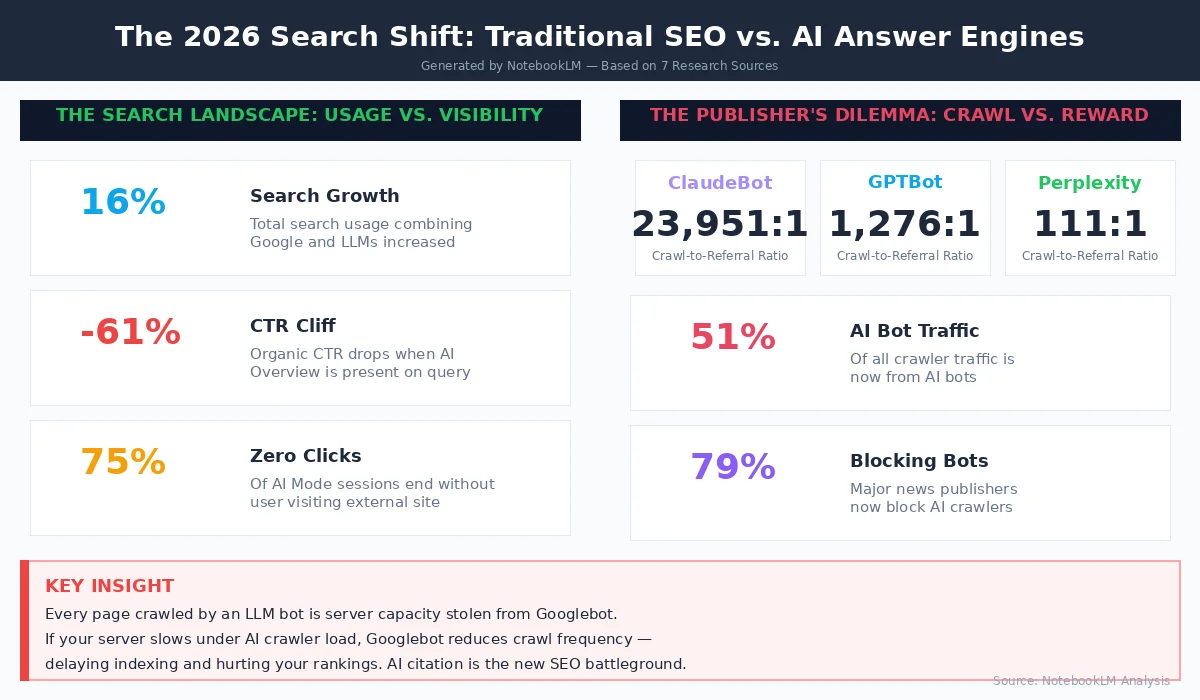

3. LLM Bots Now Crawl 3.6× More Than Googlebot — and That's a Problem

Here's the number that should be on every technical SEO's radar: LLM bots (including ChatGPT-User, GPTBot, ClaudeBot, Amazonbot, Applebot, Bytespider, PerplexityBot, and CCBot) now crawl 3.6 times more frequently than Googlebot. Bots overall account for 52% of all global web traffic, which means automated requests now slightly outnumber human visits.

The Crawl Budget Crisis

Every page crawled by an LLM bot is server capacity that could have served Googlebot. For enterprise sites, the situation is already critical: AI crawlers now consume up to 40% of total crawl activity. Collectively, LLM bots account for 51.69% of all crawler traffic, surpassing traditional search engines (Googlebot + Bingbot + YandexBot), which sit at just 34.46%.

When AI crawlers generate excessive load, servers respond more slowly, and Googlebot may reduce its crawl frequency as a result. This creates a cascading effect: slower indexing of new content, delayed search result updates, and degraded overall SEO performance — all caused by bots that send virtually zero referral traffic back to your site.

The crawl-to-referral ratios tell the full story. ClaudeBot crawls 23,951 pages for every single referral visit it generates. GPTBot's ratio is 1,276 to 1. And the worst offender? Meta-ExternalAgent, which accounts for 36.10% of all AI crawler traffic but offers absolutely zero referral mechanism — it's pure extraction with nothing in return.
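You can compute the same ratio for your own site. The sketch below assumes you already have two counts per bot: pages crawled (from server logs) and referral visits (from analytics). The function names and the sample numbers are hypothetical.

```python
from typing import Optional


def crawl_to_referral_ratio(pages_crawled: int, referral_visits: int) -> Optional[float]:
    """Pages crawled per referral visit; None when the bot sent no referrals."""
    if pages_crawled < 0 or referral_visits < 0:
        raise ValueError("counts must be non-negative")
    if referral_visits == 0:
        return None  # pure extraction: no ratio can be computed
    return pages_crawled / referral_visits


def describe(bot: str, pages_crawled: int, referral_visits: int) -> str:
    """Format one bot's ratio as a readable line."""
    ratio = crawl_to_referral_ratio(pages_crawled, referral_visits)
    if ratio is None:
        return f"{bot}: {pages_crawled:,} pages crawled, no referrals"
    return f"{bot}: {ratio:,.0f} pages crawled per referral"


# Hypothetical counts, for illustration only.
print(describe("ExampleBot", 12_000, 8))
print(describe("SilentBot", 5_000, 0))
```

Returning `None` for zero referrals keeps bots with no referral mechanism visibly separate from bots that merely have a poor ratio.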

Industry-Level Crawl Impact: Who's Subsidizing AI Training?

The crawl burden isn't distributed evenly across industries. Retail sites absorb 20.56% of all AI crawler traffic but suffer the worst crawl-to-refer ratios, effectively subsidizing LLM training with their product data and infrastructure costs. Finance sites, conversely, receive the best AI referral rates: Perplexity returns 1 referral for every 42 pages crawled on financial content, a dramatically better ratio than any other vertical.

Only DuckDuckGo achieves near-parity at 1.5:1 crawl-to-refer, while Meta and OpenAI alone account for over 70% of all AI crawler traffic. This concentration means your bot management strategy really comes down to handling just two or three major players.
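To find out which two or three bots dominate on your own site, a quick log tally is usually enough. This is a minimal sketch, assuming access logs in the common "combined" format, where the user agent is the last quoted field on each line. The bot list comes from the names mentioned in this article; the sample log lines and simplified user-agent strings are hypothetical. User agents can be spoofed, so treat the counts as an estimate.

```python
import re
from collections import Counter

# Tokens to look for, taken from the bots named in this article.
BOT_TOKENS = [
    "GPTBot", "ChatGPT-User", "ClaudeBot", "Amazonbot", "Applebot",
    "Bytespider", "PerplexityBot", "CCBot", "Meta-ExternalAgent", "Googlebot",
]

# In the combined log format the user agent is the final quoted field.
USER_AGENT_RE = re.compile(r'"([^"]*)"\s*$')


def count_bot_hits(log_lines) -> Counter:
    """Count requests per known bot token, matching case-insensitively."""
    hits = Counter()
    for line in log_lines:
        match = USER_AGENT_RE.search(line)
        if not match:
            continue
        agent = match.group(1).lower()
        for token in BOT_TOKENS:
            if token.lower() in agent:
                hits[token] += 1
                break
    return hits


# Hypothetical log lines with simplified user-agent strings.
sample_log = [
    '203.0.113.5 - - [01/Apr/2026:10:00:00 +0000] "GET /a HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.6 - - [01/Apr/2026:10:00:01 +0000] "GET /b HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.7 - - [01/Apr/2026:10:00:02 +0000] "GET /a HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; Googlebot/2.1)"',
    '203.0.113.8 - - [01/Apr/2026:10:00:03 +0000] "GET /a HTTP/1.1" 200 512 "-" "Mozilla/5.0 (Windows NT 10.0) Firefox/130.0"',
]
for bot, count in count_bot_hits(sample_log).most_common():
    print(bot, count)
```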

The JavaScript Rendering Gap

There's an additional technical wrinkle: none of the major AI bots can currently render JavaScript. According to a Vercel study, OpenAI's, Anthropic's, Meta's, ByteDance's, and Perplexity's crawlers all fail to execute client-side JavaScript. This means they're crawling your raw HTML and missing any content rendered dynamically — while still consuming your server resources.
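A rough way to check what a non-rendering crawler sees is to extract the text from your raw HTML while ignoring scripts. The sketch below uses only Python's standard library; the class and function names and the sample page are hypothetical. In practice you would feed it the HTML your server returns before any JavaScript runs.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect text nodes, skipping the contents of <script> and <style>."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def text_without_javascript(raw_html: str) -> str:
    """Return the text a crawler would see if it never executed JavaScript."""
    parser = VisibleText()
    parser.feed(raw_html)
    parser.close()
    return " ".join(parser.chunks)


# Hypothetical page: the price is injected client-side, so it is absent from raw HTML.
raw = (
    '<html><body><h1>Pricing</h1><div id="app"></div>'
    '<script>document.getElementById("app").textContent = "Plans start at $10";</script>'
    "</body></html>"
)
print(text_without_javascript(raw))
```

If a key fact only appears after client-side rendering, as the price does in this sample, a crawler that does not execute JavaScript never sees it.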

LLM Bot Defense Strategy

- Audit your server logs. Identify which LLM bots are crawling your site, how frequently, and which pages they're hitting hardest. Most sites will be surprised by the volume.

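- Implement selective robots.txt rules. Block LLM training bots (GPTBot, CCBot, Bytespider) that provide little or no referral value while allowing AI search bots that might cite your content. Keep in mind that robots.txt is advisory: it only works for crawlers that choose to honor it.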

- Consider rate limiting. Use server-level rate limiting to cap LLM bot requests per second without outright blocking them, preserving the possibility of AI citation while protecting performance.

- Monitor crawl budget impact. Compare Googlebot crawl frequency before and after implementing LLM bot restrictions. You may see Googlebot's crawl rate increase as server capacity frees up.

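- Consider the llms.txt proposal. This emerging convention is a Markdown file served at /llms.txt that points LLMs to the content you most want them to read. Unlike robots.txt, it does not control access, and crawler support is still limited, so treat it as a low-cost supplement rather than a defense.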
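The selective robots.txt rule from the list above can be checked before you deploy it. The sketch below uses Python's standard `urllib.robotparser` to confirm that a draft file blocks the three training bots named above while leaving other agents alone. The draft file, the `allowed` helper, and the choice of PerplexityBot as the "allowed" example are illustrative assumptions, not a recommendation for every site.

```python
from urllib.robotparser import RobotFileParser

# Draft rules: block the training bots named above, allow everyone else.
ROBOTS_TXT = """\
User-agent: GPTBot
User-agent: CCBot
User-agent: Bytespider
Disallow: /

User-agent: *
Allow: /
"""


def allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


url = "https://example.com/guide"  # placeholder URL
for agent in ("GPTBot", "CCBot", "Bytespider", "PerplexityBot", "Googlebot"):
    print(agent, "allowed" if allowed(ROBOTS_TXT, agent, url) else "blocked")
```

A check like this catches grouping mistakes, such as a stray blank line that detaches a `Disallow` from its `User-agent` lines, before they reach production.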

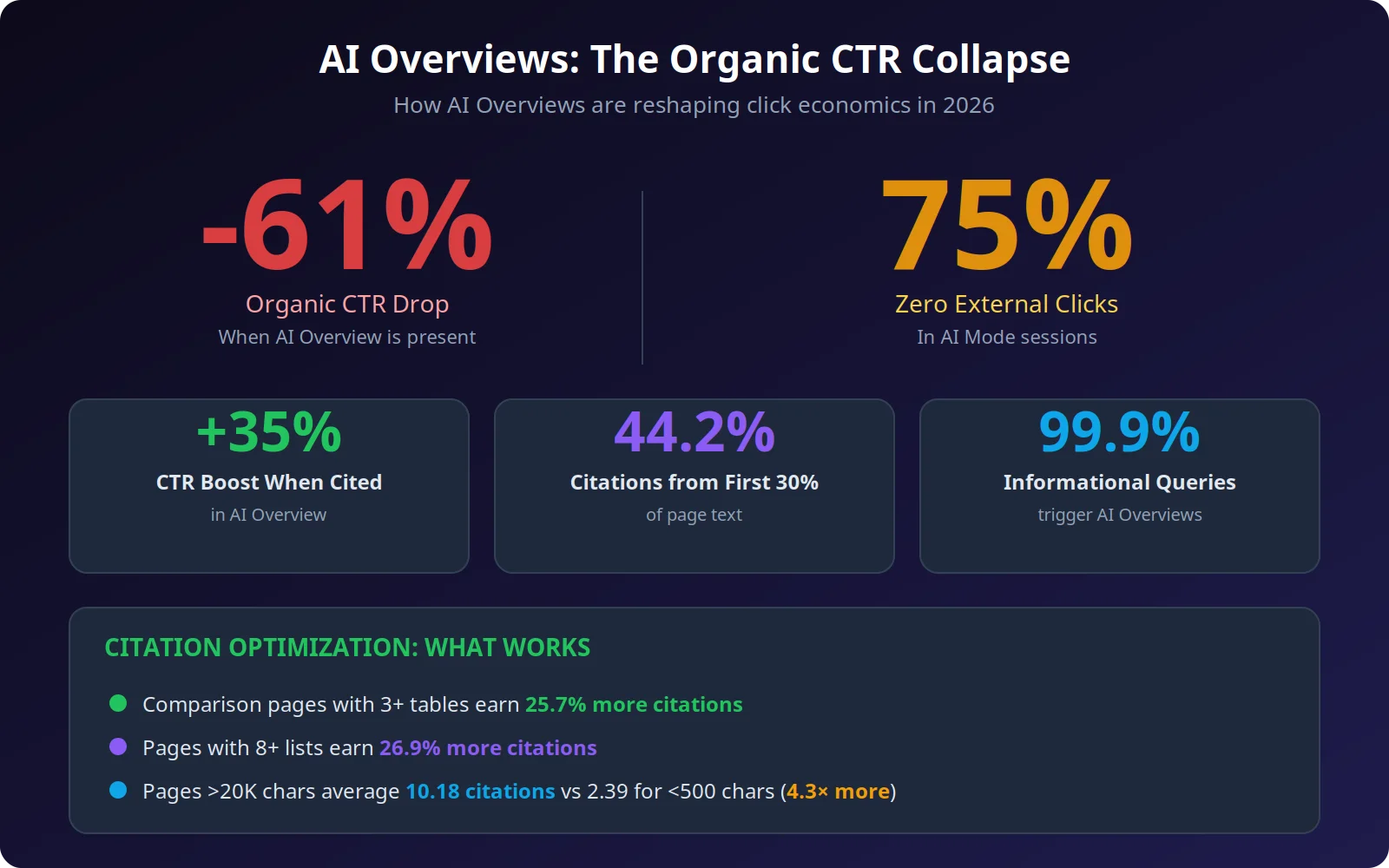

4. AI Overviews Are Crushing Organic CTR by 61%: The Survival Playbook

The data is now unambiguous: AI Overviews represent the most significant disruption to organic search traffic since the introduction of featured snippets, and the scale of impact dwarfs anything we've seen before.

The numbers are stark. When an AI Overview appears, organic click-through rates drop by 61%. In 75% of AI Mode sessions, users never click a single external link. And 99.9% of informational queries now trigger an AI Overview, meaning the vast majority of knowledge-seeking searches are now mediated by Google's AI layer.

The Citation Economy

There is a silver lining, and it's significant: when your brand appears as a citation within an AI Overview, your organic CTR actually increases by 35%. The challenge has shifted from ranking to earning citations, and the two require different optimization strategies.

Research shows that 44.2% of all LLM citations come from the first 30% of a page's text. This means your introductory content has disproportionate influence on whether AI systems cite you. Pages with comparison tables (three or more) earn 25.7% more citations, while validation pages with eight or more lists earn 26.9% more. Longer content also wins: pages exceeding 20,000 characters average 10.18 ChatGPT citations versus just 2.39 for pages under 500 characters.
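One way to act on the first-30% finding is to check where your key statements sit in the page text. This is a rough sketch that assumes you have the page's plain text as a string; the function names and the sample page are hypothetical, and character offset is only a proxy for how an AI system reads a page.

```python
from typing import Optional


def relative_position(page_text: str, phrase: str) -> Optional[float]:
    """Return where phrase first appears, as a fraction of the page length (0.0 = start)."""
    if not page_text:
        return None
    index = page_text.lower().find(phrase.lower())
    if index == -1:
        return None
    return index / len(page_text)


def in_first_share(page_text: str, phrase: str, share: float = 0.30) -> bool:
    """True if phrase starts within the first `share` of the page text."""
    position = relative_position(page_text, phrase)
    return position is not None and position <= share


# Hypothetical page text, for illustration only.
page = (
    "Key finding: organic CTR drops 61% when an AI Overview appears. "
    + "Supporting detail. " * 40
    + "Closing note."
)
print(in_first_share(page, "CTR drops 61%"))
print(in_first_share(page, "Closing note"))
```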

The Factual Density Advantage

Here's the data point that should reshape your content strategy: a typical AI Overview-cited article covers 62% more facts than non-cited alternatives, and core sources cover 42% of key facts for their topic. In other words, AI systems aren't just looking for relevant content — they're looking for the most informationally dense version of it. Thin, surface-level articles won't cut it even if they rank well traditionally.

What Content Formats Win by Query Type

The format that earns the most AI citations varies dramatically by query type. Across all LLMs, listicles are the most commonly cited format at 21.9%, rising to 40.86% for commercial queries and 43.8% specifically in ChatGPT's responses. But for informational queries, articles dominate at 45.48% — a critical distinction for content planning.

Perhaps the most actionable insight: pages that use 120 to 180 words between headings receive 70% more ChatGPT citations compared to pages with sections under 50 words. This suggests an optimal "chunk size" for AI-readable content — detailed enough to provide standalone value, but structured enough for easy extraction.
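Section length is easy to audit automatically. The sketch below counts words between headings and flags sections outside the 120 to 180 word range mentioned above. It assumes the content is available as Markdown with `#`-style headings, which is a simplification (a `#` comment inside a code block would be miscounted as a heading); the function names and sample document are hypothetical.

```python
def words_per_section(markdown_text: str) -> dict:
    """Map each heading to the number of words that follow it, up to the next heading."""
    counts = {}
    current = None
    for line in markdown_text.splitlines():
        if line.startswith("#"):
            current = line.lstrip("#").strip()
            counts[current] = 0
        elif current is not None:
            counts[current] += len(line.split())
    return counts


def sections_outside_range(counts: dict, low: int = 120, high: int = 180) -> list:
    """Return headings whose word count falls outside [low, high]."""
    return [heading for heading, n in counts.items() if not low <= n <= high]


# Hypothetical document: one section in range, one too thin.
doc = "# Intro\n" + "word " * 150 + "\n## Thin section\n" + "word " * 30
counts = words_per_section(doc)
print(counts)
print(sections_outside_range(counts))
```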

Key Takeaway: Optimize for Citation, Not Just Ranking

The SEO game has split into two parallel tracks. Track one is traditional ranking optimization for the 0.1% of informational queries and all transactional/navigational queries that don't trigger AI Overviews. Track two is citation optimization — structuring your content so AI systems reference and link to you. The brands winning in 2026 are playing both tracks simultaneously.

How to Earn AI Overview Citations

- Front-load your expertise. Put your most authoritative, data-rich content in the first 30% of the page. AI systems disproportionately cite introductory content.

- Use structured comparison formats. Pages with three or more comparison tables earn 25.7% more AI citations. Structure your content with clear, data-rich comparison tables.

- Publish original research and data. AI systems preferentially cite primary sources over derivative content. Original surveys, studies, and datasets are citation magnets.

- Implement comprehensive schema markup. Structured data helps AI systems understand and extract your content accurately. Focus on the Article, FAQPage, HowTo, and Product schema types.

- Build brand authority signals. AI systems trust established brands more. Consistent publication cadence, expert author bios, and earned backlinks from authoritative domains all contribute.

- Write longer, more comprehensive content. Pages exceeding 20,000 characters average roughly 4.3 times as many ChatGPT citations as pages under 500 characters (10.18 versus 2.39). Depth beats brevity in the citation economy.
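For the schema markup item in the list above, here is a minimal sketch that generates Article JSON-LD with Python's standard `json` module. The property names (`headline`, `author`, `datePublished`) are standard schema.org Article properties; the function name and the sample values are hypothetical. Whether any given AI system actually uses this markup is not something the sketch can guarantee.

```python
import json
from typing import Optional


def article_json_ld(headline: str, author_name: str, date_published: str,
                    author_url: Optional[str] = None) -> str:
    """Build a schema.org Article object as a JSON-LD string."""
    author = {"@type": "Person", "name": author_name}
    if author_url:
        author["url"] = author_url  # link the byline to a profile page
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": author,
        "datePublished": date_published,  # ISO 8601 date string
    }
    return json.dumps(data, indent=2)


# Hypothetical values, for illustration only.
markup = article_json_ld(
    headline="How We Measured Crawl Load",
    author_name="Jane Example",
    date_published="2026-04-10",
    author_url="https://example.com/authors/jane",
)
print(f'<script type="application/ld+json">\n{markup}\n</script>')
```

Generating the markup from the same fields that render the visible byline and date helps keep the structured data consistent with what readers see.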

5. New GSC Weekly & Monthly Views: How to Actually Use Them

While less dramatic than the other stories this week, Google's addition of weekly and monthly aggregation views to Search Console performance reports is a genuinely useful feature that addresses a long-standing pain point for SEO practitioners.

Previously, Search Console only displayed daily data, which made it difficult to identify meaningful trends without manual data aggregation. Daily fluctuations — weekday/weekend patterns, one-off spikes from news events, crawl anomalies — created noise that obscured real performance shifts. The new views let you toggle between daily, weekly, and monthly aggregation directly in the interface.

Practical Applications

The weekly view is ideal for evaluating the impact of specific changes: a new piece of content published, a technical fix deployed, or an algorithm update rolling out. Instead of trying to eyeball trends across jagged daily data, you get clean week-over-week comparisons.

The monthly view serves a different purpose: stakeholder reporting and long-term trend analysis. It provides the kind of clean, directional data that makes sense in executive dashboards and quarterly reviews without requiring you to export data to a spreadsheet for manual aggregation.
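If you also work with exported daily data, the same weekly and monthly roll-ups are straightforward to reproduce. The sketch below groups daily values by ISO week (Monday start) and by calendar month. Whether Search Console's weekly view uses the same week boundaries is an assumption to verify against the interface; the function names and sample values are hypothetical.

```python
from collections import defaultdict
from datetime import date


def weekly_totals(daily: dict) -> dict:
    """Sum daily values by (ISO year, ISO week number); ISO weeks start on Monday."""
    totals = defaultdict(int)
    for day, value in daily.items():
        iso = day.isocalendar()
        totals[(iso[0], iso[1])] += value
    return dict(totals)


def monthly_totals(daily: dict) -> dict:
    """Sum daily values by (year, month)."""
    totals = defaultdict(int)
    for day, value in daily.items():
        totals[(day.year, day.month)] += value
    return dict(totals)


# Hypothetical daily clicks, for illustration only.
daily_clicks = {
    date(2026, 3, 30): 10,  # Monday
    date(2026, 4, 5): 5,    # Sunday of the same ISO week
    date(2026, 4, 6): 7,    # Monday of the following week
}
print(weekly_totals(daily_clicks))
print(monthly_totals(daily_clicks))
```

Note that a single week can straddle two months, as the first two sample dates do, so weekly and monthly totals will not always reconcile line by line.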

Timing note: Given the GSC impressions bug discussed above, the monthly view is especially useful right now. Once Google's impression corrections are fully deployed, monthly aggregation will help smooth out the transition between corrupted and corrected data, making trend identification cleaner during this messy period.

6. Your Week-by-Week Action Plan

Here's how to prioritize everything discussed in this article over the next four weeks:

Week 1: Diagnose and audit. Flag all GSC reports covering May 2025 to present as containing potentially inflated impressions. Audit server logs for LLM bot crawl volume. Begin segmenting your traffic data by the March 24–25 spam update window and the March 27 – April 8 core update window.

Week 2: Implement bot management. Deploy selective robots.txt rules and rate limiting for LLM bots. Begin E-E-A-T audit of top-traffic YMYL pages. Re-evaluate any CTR optimization decisions made between May 2025 and now.

Week 3: Optimize for AI citations. Restructure your highest-value informational pages to front-load expertise and add comparison tables. Implement or update schema markup across key content. Begin publishing original research or data-driven content.

Week 4: Measure and iterate. Compare post-correction GSC data against pre-bug baselines. Evaluate Googlebot crawl frequency changes after LLM bot restrictions. Assess whether core update recovery strategies are showing early signals.

7. Frequently Asked Questions

When did the March 2026 core update start and finish?

The March 2026 core update started on March 27, 2026 at 2:00 AM PT and completed on April 8, 2026, for a total rollout of 12 days. It was preceded by a spam update on March 24–25 and a Discover update in February 2026.

How long should I wait before analyzing my core update data?

Google recommends waiting at least one full week after the update completed (after April 15, 2026) before drawing conclusions. Your baseline comparison period should be the weeks before March 27, measured against performance after April 8.

Were my Search Console clicks affected by the impressions bug?

No. Google confirmed that clicks and other direct metrics were not affected. Only impression counts were over-reported, which in turn artificially deflated your CTR calculations. The bug ran from May 13, 2025 through the fix rollout beginning April 3, 2026.

Should I block all LLM bots in robots.txt?

Not necessarily. A blanket block can prevent AI systems from citing your content, and citations are worth protecting: appearing as a cited source in an AI Overview is associated with a 35% increase in organic CTR. Instead, consider selectively blocking training bots (GPTBot, CCBot, Bytespider) while allowing AI search bots that may drive citations and referral traffic.

How can I get my content cited in AI Overviews?

Focus on front-loading expert content in the first 30% of your pages, using structured comparison tables, publishing original research, implementing comprehensive schema markup, and building brand authority. Pages over 20,000 characters earn 4.3 times more AI citations than short-form content.

What is the llms.txt standard?

llms.txt is an emerging proposal, not yet a formal standard. It is a Markdown file placed at the root of your site that tells LLMs which content to prioritize. Unlike robots.txt, it does not allow or block crawling; it only offers guidance, and AI systems are not obliged to follow it.